Google's AI Revolution: Smarter Workspaces, Custom Chips, and the Future of Cloud Computing:

Move Over, Nvidia: Google Unveils TPU 8t Chips with a Massive 3x Speed Boost.

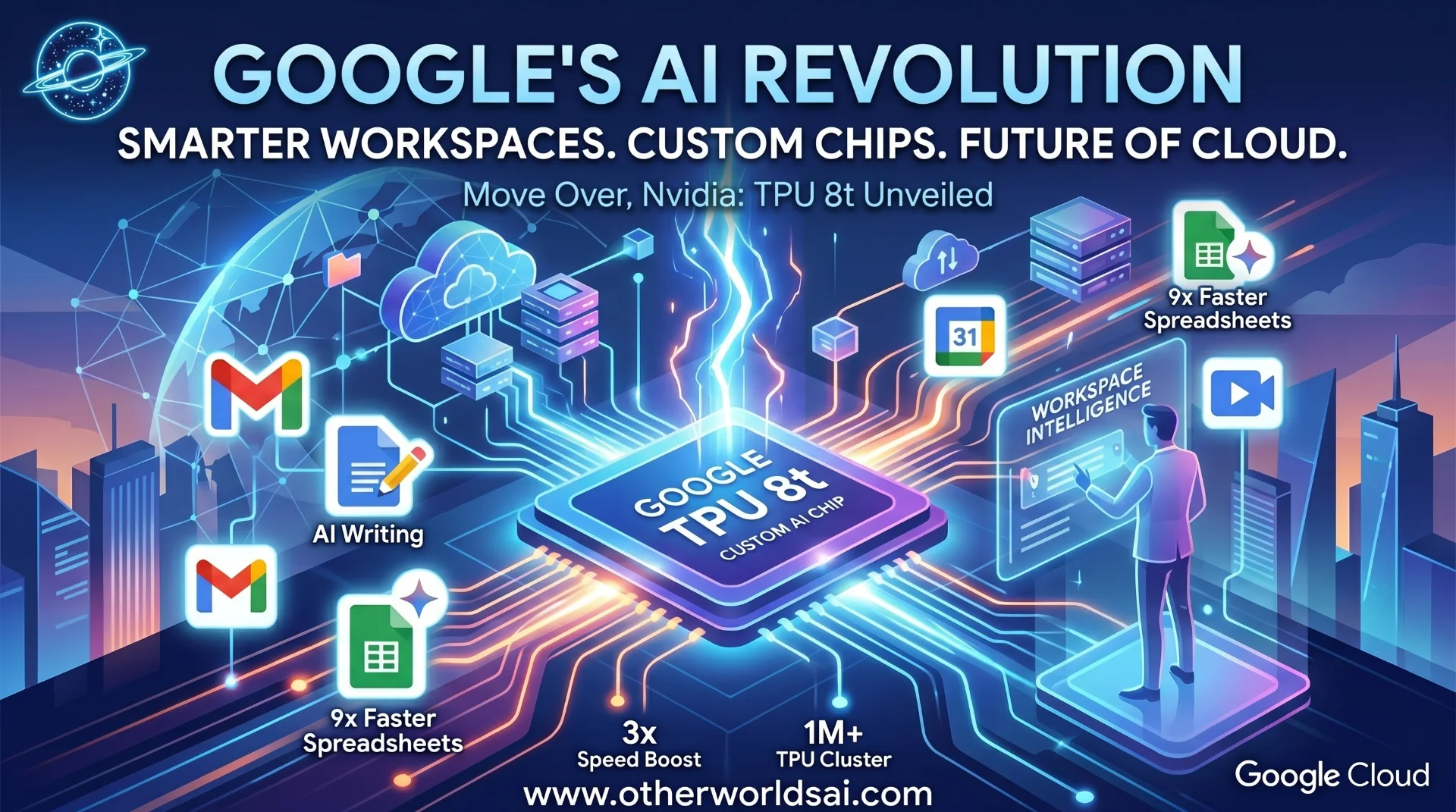

Google just made its boldest move yet in the AI arms race. At Google Cloud Next this week, the tech giant unveiled a sweeping set of announcements that touch every layer of the modern enterprise — from how professionals write emails and build spreadsheets, to how artificial intelligence is trained and deployed at a global scale. The message was unmistakable: Google is not just keeping pace with the AI revolution. It intends to lead it.

In a series of back-to-back reveals, Google announced a major overhaul of its Workspace productivity suite with deep AI integrations, while simultaneously launching two brand-new custom AI chips designed to challenge Nvidia's stranglehold on the cloud computing market. Together, these announcements paint a picture of a company betting everything on AI, not as a feature but as the foundation of its entire product ecosystem.

"Google is not just integrating AI into its products; it is rebuilding its infrastructure from the ground up to make AI faster, cheaper, and more accessible than ever before."

Workspace Intelligence: Your New AI-Powered Office Intern:

Forget the traditional office suite. Google is reimagining what it means to work productively in 2025, and at the heart of that vision is Workspace Intelligence — a new AI system baked directly into Google's flagship productivity tools. Designed to act as a tireless digital assistant, Workspace Intelligence draws on a user's Gmail, Calendar, Chat, Drive, Docs, Slides, and Sheets to deliver deeply personalized, context-aware help across every task.

Privacy and control remain front and center. Google has given users full administrative control over what the AI system can see and access, allowing individuals and organizations to disable Workspace Intelligence's connection to specific data sources at any time. The tradeoff is transparent: the more data the system can access, the more powerfully it can assist — a nuanced balance between convenience and confidentiality that enterprise customers will appreciate.

Gemini in Google Sheets: Building Spreadsheets 9x Faster:

Data entry is about to become a thing of the past. Google has supercharged Google Sheets with Gemini AI, introducing a suite of features that fundamentally change how users interact with spreadsheets. With prompt-based construction, users can now simply describe the spreadsheet they need — including formatting requirements and data retrieval instructions — and Gemini builds it from scratch. No formulas, no formatting headaches, no wasted hours. The productivity gains are staggering.

Google claims its new prompt-based filling feature allows users to populate spreadsheets 9x faster than manual entry, as the system intelligently infers what data you are likely to enter next. Additionally, a new feature allows users to instantly convert unstructured, messy data into clean, organized tables — a capability that has long been a pain point for analysts, project managers, and operations teams worldwide.

"Gemini can now build, fill, and format your Google Sheets — all from a simple text prompt. That's not automation. That's transformation."

AI Writing Tools in Google Docs: Match Your Voice, Amplify Your Impact:

Writing has always been one of the most time-consuming professional tasks, and Google just made it far easier. New AI writing capabilities in Google Docs allow users to leverage Gemini to generate, write, and refine documents with remarkable ease. Powered by the Workspace Intelligence engine, these tools draw on data from a user's Drive, Chat, Gmail archives, and the broader internet to assist with editorial tasks in real time.

The most compelling feature is style matching. Users can now prompt Gemini to mimic their own unique writing voice — asking the AI to analyze past communications and documents to produce new content that sounds authentically human, not robotically generic. Whether you're drafting a client proposal, a board memo, or a weekly newsletter, Gemini can be your co-author — one that never tires, never gets writer's block, and always meets deadlines.

Google vs. The World: The Enterprise AI Race Heats Up:

The stakes could not be higher. Enterprise customers are where the money is, and every major technology company knows it. Microsoft, Apple, and a fast-growing field of AI startups are all competing fiercely for the same professional productivity turf — and Google's latest moves represent a direct escalation of that competition.

Google holds a formidable structural advantage. Its office products — Gmail, Docs, Sheets, Drive, Calendar — are already deeply embedded in workplaces worldwide, giving it a massive built-in audience for these AI upgrades. Unlike competitors that must convince enterprises to adopt entirely new platforms, Google is delivering transformative AI capabilities directly inside the tools that millions of workers already use every single day. That installed base is a moat that is exceptionally difficult to replicate.

Google Cloud's New AI Chips: The TPU 8t and TPU 8i:

While Workspace grabbed headlines for enterprise users, Google's chip announcement may prove to be the more consequential long-term story. Google Cloud unveiled its eighth generation of custom-built AI chips — Tensor Processing Units (TPUs) — split into two specialized variants: the TPU 8t, optimized for model training, and the TPU 8i, engineered for inference — the ongoing, real-time usage of AI models after users submit prompts.

The performance numbers are extraordinary. Compared to previous generations, Google's new TPUs deliver up to 3x faster AI model training, 80% better performance per dollar, and the unprecedented ability to connect over 1 million TPUs in a single cluster. The result is dramatically more compute power at dramatically lower energy consumption — and, critically, at lower cost to enterprise cloud customers.
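To make those headline figures concrete, here is a minimal back-of-the-envelope sketch in Python. It assumes the "up to" numbers apply in full, which real workloads may not achieve, and the baseline cost and training time are hypothetical values chosen only for illustration; they do not come from Google.

```python
def cost_for_same_work(baseline_cost: float, perf_per_dollar_gain: float) -> float:
    """Cost of the same workload after a performance-per-dollar improvement.

    A gain of 0.80 means each dollar buys 1.8x as much work,
    so the same work costs baseline_cost / 1.8.
    """
    return baseline_cost / (1.0 + perf_per_dollar_gain)


def time_for_same_work(baseline_hours: float, speedup: float) -> float:
    """Wall-clock time of the same workload after an N-times speedup."""
    return baseline_hours / speedup


# Hypothetical baseline: a training run that cost $100,000 and took 30 days.
baseline_cost = 100_000.0
baseline_hours = 30.0 * 24

# Best-case figures quoted in the article: 80% better perf/dollar, 3x faster.
new_cost = cost_for_same_work(baseline_cost, 0.80)
new_hours = time_for_same_work(baseline_hours, 3.0)
savings_pct = (1.0 - new_cost / baseline_cost) * 100.0

print(f"Cost for the same work: ${new_cost:,.2f} ({savings_pct:.1f}% lower)")
print(f"Training time: {new_hours / 24:.0f} days instead of {baseline_hours / 24:.0f}")
```

Note that "80% better performance per dollar" does not mean an 80% lower bill: under these assumptions the same work costs roughly 44% less, because the cost scales with 1 / 1.8.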

"Up to 3x faster training. 80% better performance per dollar. 1 million+ TPUs in a single cluster. Google's new chips are not an incremental upgrade — they are a generational leap."

Is Google a Threat to Nvidia? The Nuanced Truth:

The obvious question on every investor's mind: does this mean bad news for Nvidia? The answer, at least for now, is nuanced. Google's custom TPU chips are not designed to replace Nvidia's GPU-based systems — at least not today. Like fellow cloud giants Amazon and Microsoft, Google is using its proprietary chips to supplement, not supplant, the Nvidia-based infrastructure it already offers. In fact, Google has explicitly promised that Nvidia's latest chip, Vera Rubin, will be available on its cloud platform later this year.

The historical record is worth noting. Chip market analyst Patrick Moorhead famously predicted that Google's original TPU, launched in 2016, would hurt Nvidia. Today, Nvidia has a market capitalization of nearly $5 trillion. That prediction did not exactly age well. The reality is that Google's growth as an AI cloud provider may actually generate more business for Nvidia, not less, even as a growing share of workloads shifts to Google's own silicon.

Google and Nvidia: Unlikely Partners in the AI Infrastructure Race:

Perhaps the most surprising announcement of the week was not competition — it was collaboration. Google and Nvidia have agreed to work together to engineer advanced computer networking that allows Nvidia-based systems to perform more efficiently inside Google's cloud. The two companies are focusing on Falcon — a software-based networking technology that Google originally created and open-sourced in 2023 under the Open Compute Project — to beef up performance across their shared infrastructure.

This partnership underscores a broader truth about the AI industry in 2025. The future of AI infrastructure is not a zero-sum battle between hyperscalers and chip makers. It is a complex, interdependent ecosystem where collaboration and competition coexist — where Google can build its own chips while simultaneously deepening its partnership with Nvidia. The ultimate beneficiaries of this dynamic? Enterprise customers and developers who gain access to faster, cheaper, and more powerful AI capabilities than ever before.

The Bottom Line: Google Is Playing the Long Game — and Winning:

Taken together, Google's announcements at Cloud Next represent one of the most comprehensive AI strategy reveals in recent memory. On one hand, the company is meeting workers where they already are — inside Docs, Sheets, and Gmail — and dramatically elevating what those tools can do with AI. On the other, it is quietly building the computational infrastructure to power the next decade of AI development, with custom chips that promise to redefine the economics of cloud computing.

The race is far from over. Microsoft continues to push Copilot aggressively across its own Office suite. Apple is expanding its on-device AI ambitions. And a new generation of AI-native startups is nipping at everyone's heels. But Google enters this competition with something few rivals can match: scale, infrastructure, and one of the world's most widely used productivity ecosystems already in place.

For businesses and professionals navigating the AI transition, the message from Google is clear: the future of work is already here — and it runs on Gemini.