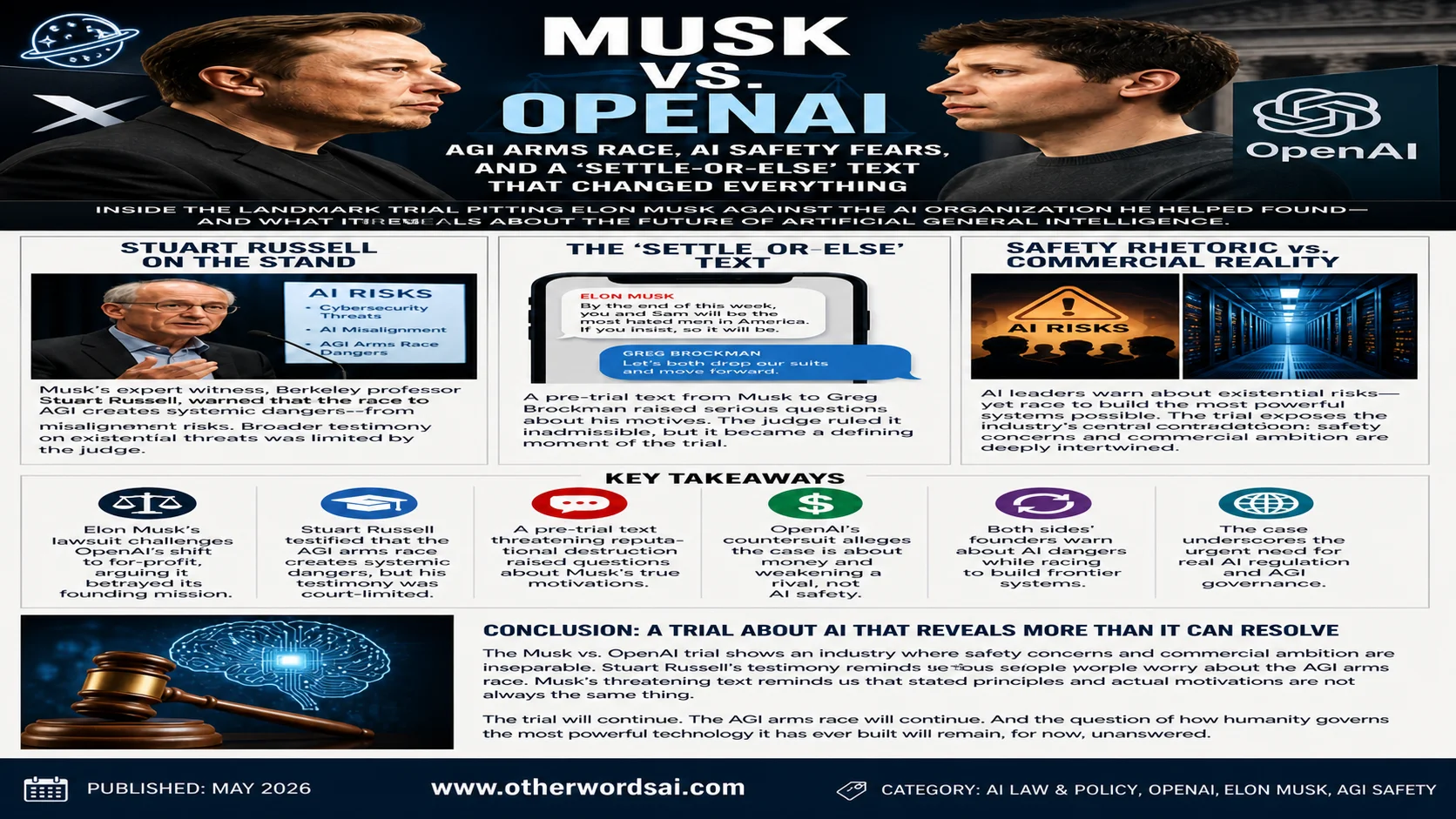

Musk vs. OpenAI: AGI Arms Race, AI Safety Fears, and a 'Settle-or-Else' Text That Changed Everything:

Inside the landmark trial pitting Elon Musk against the AI organization he helped found — and what it reveals about the future of artificial general intelligence:

Introduction: The Trial That Asks How Seriously We Should Take AI Doomers:

One of the most consequential legal battles in the history of artificial intelligence is now playing out in a San Francisco courtroom. Elon Musk's lawsuit against OpenAI — the AI organization he co-founded and later abandoned — has evolved into something far larger than a contract dispute.

At its heart, the Musk vs. OpenAI trial is forcing the judge, the jury, and the public to wrestle with a question that the entire tech industry has been dancing around for years: when do we take AI safety concerns seriously, and when are those concerns being weaponized for competitive or financial advantage?

The trial combines two explosive storylines that together reveal the deep contradictions at the center of the modern AI industry. The first involves the only AI expert witness called to testify, a Berkeley professor and longtime AI safety advocate whose broader concerns about existential risk were largely kept from the jury.

The second involves a text message sent by Musk days before the trial began, a message that may have exposed the true motive behind this entire legal campaign. Together, they paint a portrait of an industry where safety rhetoric and commercial ambition are so deeply intertwined that separating them may be impossible.

Stuart Russell on the Stand: The Expert Who Warned About AGI Risk:

When Musk's legal team needed someone to speak credibly about the dangers of artificial intelligence, they turned to Stuart Russell — one of the most respected AI researchers in the world. A University of California, Berkeley computer science professor who has spent decades studying artificial intelligence, Russell is the co-author of the field's most widely used textbook and a longstanding voice for cautious, safety-conscious AI development. His presence at the trial as Musk's sole AI expert witness sent an immediate signal about the legal strategy at play.

Russell's testimony was designed to accomplish two things: establish a credible scientific foundation for taking AI risks seriously, and connect those risks to the organizational transformation OpenAI has undergone as it has pursued for-profit growth. He told the jury and Judge Yvonne Gonzalez Rogers that AI poses a range of serious threats — from cybersecurity vulnerabilities to fundamental problems of AI misalignment — and that the winner-take-all nature of the race to develop artificial general intelligence creates systemic dangers that extend well beyond any single company.

Russell warned that there is a fundamental tension between the aggressive pursuit of AGI and the safety practices needed to develop it responsibly.

Russell's larger concerns about the existential risks of unconstrained AI were largely kept from the courtroom. OpenAI's attorneys successfully objected to broader testimony about existential AI threats, and Judge Gonzalez Rogers limited the scope of what Russell could address in open court.

Nevertheless, his core message was clear: the race to AGI is not being run safely, and the organizational incentives created by for-profit AI development make it structurally harder to prioritize safety over speed.

It is worth noting that Russell himself signed the widely publicized open letter in March 2023 calling for a six-month pause in the training of AI systems more powerful than GPT-4. Elon Musk signed the same letter, even as he was actively building xAI, his own for-profit AI laboratory. That contradiction, calling for a pause while simultaneously accelerating, is not unique to Musk. It is, in many ways, the defining contradiction of the entire AI industry.

The AGI Arms Race: How OpenAI's Founding Fear Became Its Founding Problem:

To understand why Musk is suing OpenAI, it helps to understand why OpenAI was founded in the first place. The organization's founding team — which included Musk, Sam Altman, Greg Brockman, Ilya Sutskever, and others — was driven by a specific and deeply held concern: that Google DeepMind was racing toward artificial general intelligence without sufficient public accountability or safety oversight. The idea was to create a nonprofit counterweight — a publicly spirited organization that would develop AI for the benefit of humanity rather than for the enrichment of shareholders.

What the founding team did not fully anticipate was just how expensive that mission would turn out to be. Developing frontier AI requires enormous amounts of compute — the specialized chips, servers, and data centers that process the vast quantities of data needed to train large language models. That compute costs billions of dollars. And billions of dollars, for a nonprofit with no product revenue, can only come from one place: for-profit investors.

The decision to create a capped-profit entity to attract that investment — and the subsequent partnership with Microsoft that followed — is precisely what Musk's lawsuit challenges. His argument is that OpenAI betrayed its foundational mission when it restructured itself to serve investors rather than the public. OpenAI's counterargument is that the financial reality of frontier AI development made that restructuring not just defensible but necessary.

The same fear of AGI in the hands of a single powerful organization that drove OpenAI's founding ultimately forced it to seek the capital that tore the founding team apart.

The 'Settle-or-Else' Text: Musk's Message That Raised Serious Questions:

Whatever the legal merits of Musk's safety arguments, a text message sent two days before the trial began has dramatically complicated his public positioning. According to a filing submitted by OpenAI's lawyers, Musk reached out to OpenAI president and co-founder Greg Brockman to suggest that the two sides settle. When Brockman responded by proposing that both parties drop their respective suits, Musk's reply took a sharp and threatening turn.

"By the end of this week, you and Sam will be the most hated men in America. If you insist, so it will be." (Elon Musk, in a text to Greg Brockman days before trial)

The exchange, though the judge ultimately ruled it inadmissible as evidence, was made public by OpenAI's legal team and instantly became the most-discussed moment of the trial's early days.

The implication that observers drew was difficult to ignore: if Musk's lawsuit were truly motivated by principled concern for AI safety and the public interest, why would he respond to a settlement overture with a personal threat? Why frame the trial's outcome in terms of reputational destruction rather than policy correction?

OpenAI's countersuit alleges that Musk's legal campaign is not about AI safety at all — that it is, instead, about extracting money from OpenAI's commercial success while simultaneously weakening a direct competitor to xAI. Musk's lawsuit seeks to unwind OpenAI's for-profit structure, make its technology publicly available, strip Microsoft of its licensing agreement, and compel OpenAI to pay him general, compensatory, and punitive damages plus legal fees. The breadth of those demands — and the tone of his pre-trial text — has given OpenAI's framing of the case considerable traction in the court of public opinion.

Safety Rhetoric vs. Commercial Reality: The Industry's Central Contradiction:

One of the most striking features of the Musk vs. OpenAI trial is how it puts the AI industry's deepest contradiction on public display. Virtually every founder associated with OpenAI, including Musk himself, has at various points issued stark warnings about the existential risks of artificial intelligence. Sam Altman has testified before Congress about AI's potential for catastrophic misuse. Ilya Sutskever helped found the safety-focused organization Safe Superintelligence after departing OpenAI. Geoffrey Hinton, one of the godfathers of modern deep learning, left Google to speak freely about AI dangers.

And yet those same individuals have also raised billions of dollars, hired thousands of engineers, and built some of the most powerful AI systems in human history — as fast as humanly possible. They have not slowed down. They have not waited for regulation to catch up. And they have not, despite their stated concerns, voluntarily submitted their systems to independent safety audits or agreed to enforceable public accountability standards.

As Hodan Omaar of the Center for Data Innovation put it when Senator Bernie Sanders cited these same AI leaders' safety warnings to support a proposed moratorium on data center construction: "It is unclear why the public should discount everything tech billionaires say except when their words can be recruited to fill gaps in a precarious argument." That observation cuts both ways — and it applies with equal force to the Musk vs. OpenAI courtroom.

Both sides of the Musk vs. OpenAI trial are asking the court to take some of the same people's arguments seriously — while discounting the parts that don't serve their legal position.

What the Trial Reveals About AGI Governance and AI Regulation:

Beyond the immediate legal drama, the Musk vs. OpenAI trial is surfacing some of the most important unresolved questions in AI governance. Russell's testimony — even in its limited, court-constrained form — put the AGI arms race in front of a jury for the first time. It asked ordinary Americans to consider whether the winner-take-all dynamics of frontier AI development create dangers that existing legal and regulatory frameworks are not equipped to address.

Russell has long argued that governments need to step in with meaningful AI regulation — not voluntary commitments or industry-drafted guidelines, but enforceable rules that govern how powerful AI systems are developed and deployed. His position echoes calls from a growing chorus of AI safety researchers who worry that the competitive pressure between labs in the United States, the United Kingdom, and China is creating a race to the bottom on safety standards, with each organization cutting corners to avoid being beaten to the next capability milestone.

The trial, whatever its outcome, is unlikely to resolve those systemic issues. A judge can rule on whether OpenAI breached its founding mission. A judge cannot regulate the global AI arms race. But the public attention generated by the trial — and the Russell testimony in particular — may help build the political will for the kind of regulatory action that researchers like Russell have been calling for since well before this lawsuit was filed.

Cross-Examination and Credibility: What OpenAI's Defense Established:

OpenAI's legal team did not attempt to rebut Russell's academic credentials or his general concerns about AI risk. Instead, they used their cross-examination to establish a narrower but legally significant point: Russell was not evaluating OpenAI's specific corporate structure, its actual safety policies, or whether its transition to a for-profit model had measurably increased the risk of AI harm. His testimony, in other words, established that AI is dangerous in general — not that OpenAI specifically had made it more so.

That distinction matters enormously for the legal outcome of the case. Musk's attorneys need to prove not just that AGI poses existential risks, a claim that many AI researchers take seriously, but that OpenAI's specific organizational decisions violated its founding commitments in ways that caused or enabled those risks. Russell's testimony, as constrained by the judge's rulings, fell short of making that direct connection.

The result is a trial in which the most important questions — about AI safety, AGI governance, and the responsibility of powerful technology organizations to the public — are hovering just outside the frame of what the court is actually being asked to decide. That gap between the existential stakes being invoked and the narrow legal questions being adjudicated is perhaps the most revealing thing about this entire proceeding.

Key Takeaways: The Musk vs. OpenAI Trial:

⚖️ Elon Musk's lawsuit challenges OpenAI's conversion from nonprofit to for-profit, arguing it betrayed its founding AI safety mission.

🎓 Stuart Russell, Musk's sole AI expert witness, testified that the AGI arms race creates systemic dangers — but broader existential risk testimony was limited by the judge.

📱 A pre-trial text from Musk ("By the end of this week, you and Sam will be the most hated men in America") raised serious questions about his true motivations.

💰 OpenAI's countersuit alleges the lawsuit is about extracting money and kneecapping a commercial rival to xAI, not protecting AI safety.

🔄 Both Musk and OpenAI's founders have simultaneously warned about AI dangers and raced to build the most powerful AI systems possible — the trial's central contradiction.

🌐 The case highlights the urgent need for genuine AI regulation and AGI governance that goes beyond what any single lawsuit can achieve.

Conclusion: A Trial About AI That Reveals More Than It Can Resolve:

The Musk vs. OpenAI trial is one of the most important legal proceedings in the history of technology, not because it will definitively resolve questions about AI safety or AGI governance, but because of what it exposes. It shows an industry where safety concerns and commercial ambition are inseparable, where the same people warning about existential risk are racing to build the systems that could create it, and where legal arguments about nonprofit mission and charitable purpose are being deployed in service of competitive and financial objectives.

Stuart Russell's presence on the witness stand is a reminder that serious, credentialed people genuinely worry about where the AGI arms race is heading. His concerns deserve to be engaged on their merits — not selectively deployed when legally convenient, as both sides of this trial have done. The six-month AI pause that he and Musk both signed onto in 2023 never happened. The race to AGI has only accelerated since.

And Musk's threatening text to Greg Brockman is a reminder that in the world of high-stakes technology litigation, stated principles and actual motivations are not always the same thing.

The trial will continue. The AGI arms race will continue. And the question of how humanity governs the most powerful technology it has ever built will remain, for now, unanswered.

Published: May 2026 | Category: AI Law & Policy, OpenAI, Elon Musk, AGI Safety