Harvard Study Reveals: AI Outperforms Human Doctors in Emergency Room Diagnoses — What This Means for the Future of Healthcare:

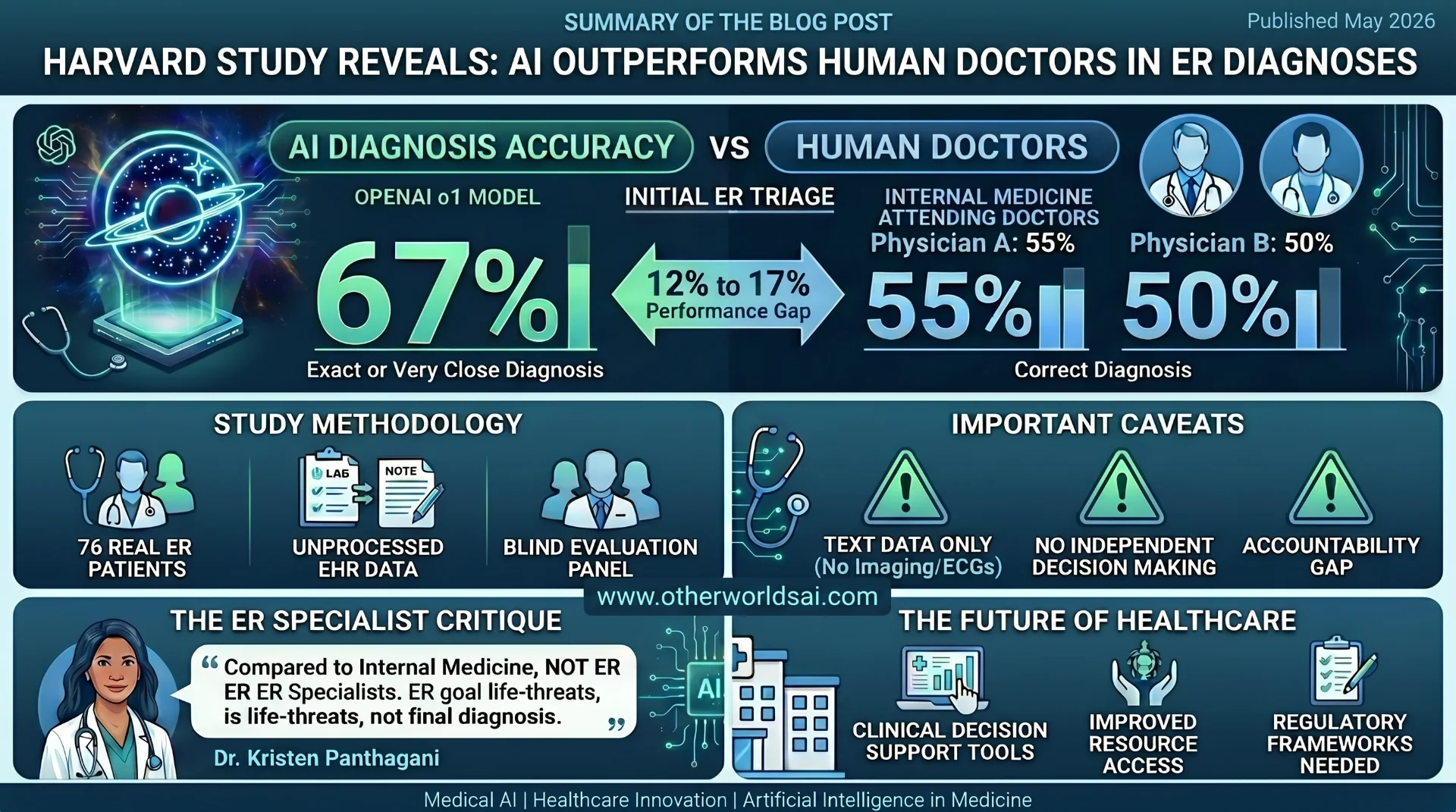

67% vs 55%: Why AI Just Beat Human Doctors in a High-Stakes Emergency Room Test:

A groundbreaking new study from Harvard Medical School has set the medical and technology worlds buzzing — and for good reason. Published in the prestigious journal Science, the research reveals that OpenAI's large language model o1 outperformed human physicians in diagnosing real emergency room cases, raising urgent questions about the future of AI in healthcare, clinical decision support, and the evolving role of doctors in high-stakes medical environments.

Whether you're a healthcare professional, a technology enthusiast, or simply a patient curious about AI-powered medicine, this study deserves your full attention. Here's everything you need to know — including the nuances the headlines may have missed.

The Harvard Study: An Overview:

Led by physicians and computer scientists at Harvard Medical School and Beth Israel Deaconess Medical Center, this landmark study systematically evaluated how large language models (LLMs) perform across a range of medical contexts — including real-world emergency room cases. The research team ran a series of carefully controlled experiments comparing OpenAI's o1 and GPT-4o models directly against experienced human physicians.

The core experiment focused on 76 real patients who were admitted to the Beth Israel emergency department. Two internal medicine attending physicians provided diagnoses at key touchpoints during each patient's visit. The AI models were given the same information available in the electronic medical records — no pre-processing, no data cleaning, no cherry-picking — and generated their own independent diagnoses. A separate panel of two attending physicians then evaluated all diagnoses blindly, without knowing whether they came from a human or a machine.

The Results: AI Diagnosis Accuracy vs. Human Doctors:

The findings were striking and, in some respects, historic. At every diagnostic touchpoint throughout the ER visit, OpenAI's o1 model either matched or outperformed both attending physicians.

Most impressively, the differences were greatest at the very first diagnostic moment — initial ER triage — the point where the least information is available and the stakes are highest. At this critical juncture:

• OpenAI o1: Delivered the exact or very close diagnosis in 67% of triage cases.

• Physician A: Achieved the correct diagnosis 55% of the time.

• Physician B: Hit the mark in 50% of cases.
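To put the three triage figures above in context, here is a minimal sketch of how much sampling uncertainty a 76-case study carries. It is not the study's own analysis. It assumes every rater was scored on all 76 cases at triage, treats the rounded percentages as exact, and uses a standard Wilson score interval. The names `wilson_interval`, `N_CASES`, `REPORTED` and `INTERVALS` are illustrative only.

```python
import math


def wilson_interval(p_hat: float, n: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a proportion p_hat observed over n cases."""
    denom = 1 + z**2 / n
    centre = (p_hat + z**2 / (2 * n)) / denom
    half_width = z * math.sqrt(p_hat * (1 - p_hat) / n + z**2 / (4 * n**2)) / denom
    return centre - half_width, centre + half_width


# Assumption: all 76 cases were scored at triage for every rater.
N_CASES = 76

# Triage accuracies as reported in the article (rounded percentages).
REPORTED = {"OpenAI o1": 0.67, "Physician A": 0.55, "Physician B": 0.50}

# Approximate 95% intervals (z = 1.96) for each reported accuracy.
INTERVALS = {name: wilson_interval(p, N_CASES) for name, p in REPORTED.items()}

if __name__ == "__main__":
    for name, (low, high) in INTERVALS.items():
        print(f"{name}: {REPORTED[name]:.0%} (approx. 95% interval {low:.0%} to {high:.0%})")
```

Under these assumptions the intervals for o1 and Physician A overlap. That does not settle the question either way: the same cases were rated by everyone, so a paired comparison would be the more appropriate test. It does show why a sample of this size calls for the follow-up trials the authors ask for.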

"We tested the AI model against virtually every benchmark, and it eclipsed both prior models and our physician baselines." — Arjun Manrai, Harvard Medical School AI Lab

Why This AI vs. Doctor Study Matters: The Clinical Significance:

The importance of this research extends far beyond a simple win for artificial intelligence. The study represents one of the most rigorous real-world evaluations of AI-assisted diagnosis to date. Unlike many earlier studies that used curated datasets or synthetic cases, this research used actual patient records from a live emergency department — making its findings far more applicable to real clinical settings.

Crucially, the researchers emphasized that the data was completely unprocessed. The AI was presented with the same messy, complex, real-world electronic health record information that human doctors see — lab results, clinical notes, vital signs, patient history — without any artificial enhancements. This methodological rigor significantly strengthens the credibility of the results.

Important Caveats: What the Study Does NOT Claim:

Before drawing sweeping conclusions, it's essential to understand the study's limitations, which the researchers themselves were careful to highlight. The study does not claim that AI is ready to make independent life-or-death clinical decisions. Instead, the authors point to "an urgent need for prospective trials to evaluate these technologies in real-world patient care settings."

Additionally, the study only evaluated AI performance on text-based medical data. The researchers noted that current foundation models remain more limited when reasoning over non-text inputs — such as medical imaging, ECGs, or physical examination findings. This is a meaningful gap in the current capabilities of AI-powered diagnostics.

There is also the critical issue of accountability. As study co-author and Beth Israel physician Adam Rodman pointed out, there is "no formal framework right now for accountability" around AI medical diagnoses. Patients, he noted, still want human physicians to guide them through life-or-death and complex treatment decisions, a deeply human need that no algorithm can yet replace.

The Specialist Question: A Critical Perspective on AI Medical Benchmarking:

Not everyone in the medical community is ready to celebrate the headlines. Emergency physician Kristen Panthagani offered a sobering — and valuable — counterpoint, describing the study as "an interesting AI study that has led to some very overhyped headlines." Her core criticism centers on who the AI was actually competing against. The study compared AI diagnoses to those of internal medicine physicians — not emergency room specialists. Panthagani argues this is a fundamental flaw in how the study has been presented to the public.

"If we're going to compare AI tools to physicians' clinical ability, we should start by comparing to physicians who actually practice that specialty." — Dr. Kristen Panthagani, Emergency Physician.

She also reframes the fundamental goal of emergency medicine in a way that any AI benchmark must grapple with. "As an ER doctor seeing a patient for the first time," she explains, "my primary goal is not to guess your ultimate diagnosis. My primary goal is to determine if you have a condition that could kill you." This distinction — between diagnostic accuracy and life-threatening condition identification — is one the current benchmarks do not fully capture.

What This Means for the Future of AI in Healthcare:

Despite the caveats, this study represents a pivotal moment in the ongoing conversation about artificial intelligence in medicine. The implications span several critical domains in healthcare innovation:

- Clinical Decision Support Systems: AI models could serve as powerful second-opinion tools, helping overworked ER staff catch diagnoses they might otherwise miss at triage.

- Healthcare Equity and Access: In resource-limited settings such as rural hospitals and underserved communities, AI diagnostic tools could bridge critical gaps in specialist access.

- Medical Education and Training: LLMs could be used to train future physicians, providing diagnostic simulations and real-time feedback.

- Regulatory and Ethical Frameworks: The study underscores the need for formal AI accountability structures in clinical settings, a gap regulators and policymakers must urgently address.

Key Takeaways: AI Diagnosis vs. Human Doctors in 2026:

Here's what this landmark Harvard study does and does not show:

✅ OpenAI's o1 model outperformed human internal medicine physicians in ER triage diagnosis accuracy (67% vs. 55% and 50%).

✅ The AI was tested on real, unprocessed electronic medical records — not synthetic data.

✅ The researchers are NOT claiming AI should replace doctors — they are calling for more prospective clinical trials.

✅ AI still has significant limitations: non-text medical data, accountability gaps, and specialty-specific clinical judgment.

✅ The comparison was made against internal medicine doctors, not ER specialists — a distinction that matters.

Conclusion: A Turning Point for AI-Powered Medicine:

The Harvard study is not a declaration that AI has arrived to replace your doctor. It is, however, one of the most compelling pieces of evidence yet that artificial intelligence has a meaningful, potentially life-saving role to play in the future of emergency medicine and clinical diagnostics.

The path forward is not human versus machine — it is human and machine, working together. As AI diagnostic tools grow more sophisticated, the most important next step is building the clinical trials, regulatory frameworks, and accountability structures that allow these technologies to be deployed responsibly, equitably, and effectively.

One thing is clear: the era of AI-assisted emergency medicine diagnosis is not a distant future. According to this Harvard study, it is already here.

Published May 2026 | Medical AI | Healthcare Innovation | Artificial Intelligence in Medicine.