Orbital Compute Is Open for Business: Inside the World's Largest Space-Based GPU Cluster:

How Kepler Communications and Sophia Space are building the infrastructure layer for in-orbit AI processing — and why it matters for the future of data centers on Earth and beyond.

Introduction: The Space Computing Race Has a New Leader:

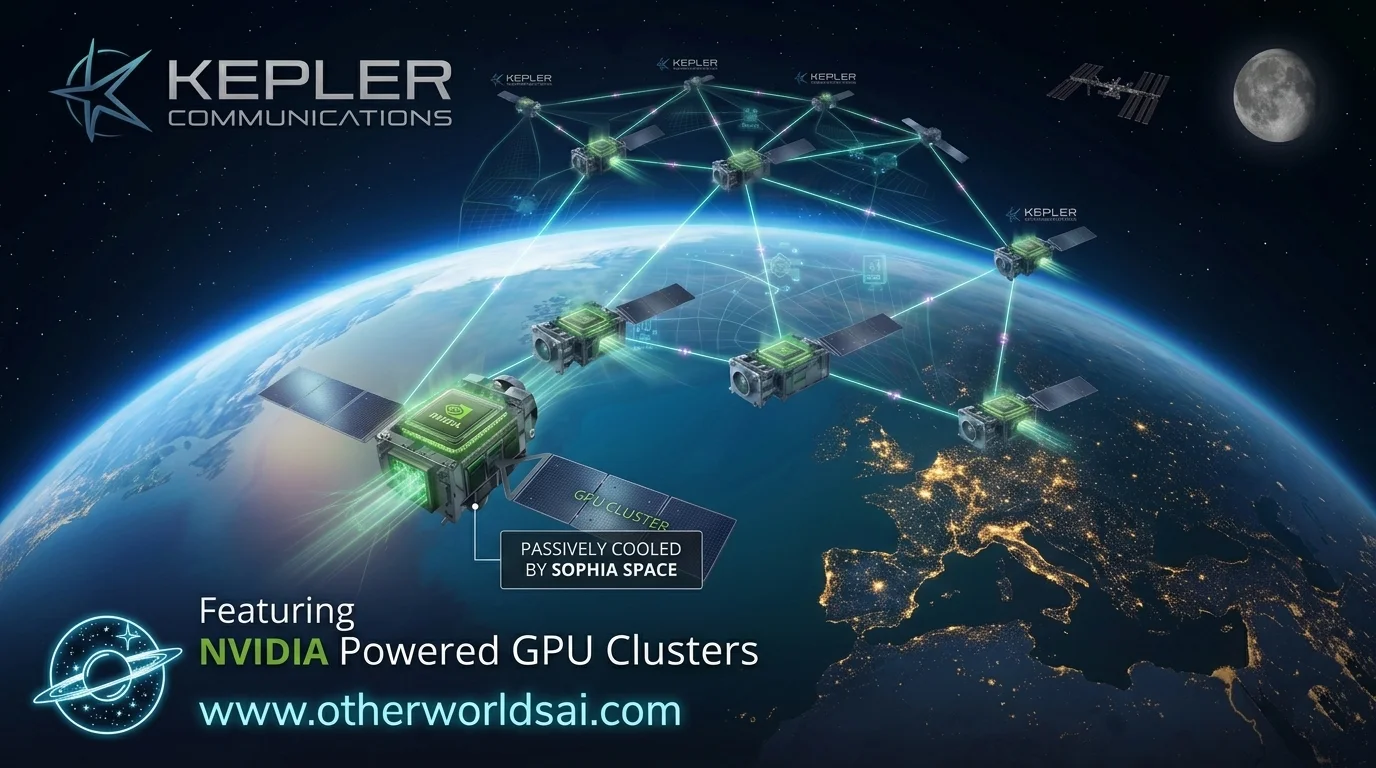

The era of orbital computing is no longer hypothetical — it has arrived. For years, the concept of data centers in space existed primarily as a bold vision championed by aerospace giants and deep-pocketed startups. But in early 2025, a Canadian company quietly made history, launching the largest compute cluster currently operating in Earth's orbit. That company is Kepler Communications, and its constellation of 10 operational satellites — powered by approximately 40 Nvidia Orin edge processors interconnected by laser communication links — is now open for business.

What makes this milestone significant is that it already has commercial traction. With 18 paying customers and a newly announced partnership with orbital computing startup Sophia Space, Kepler is showing that edge processing in orbit is not a distant dream. It is a working commercial product, one that is reshaping how industries think about space-based sensors, AI inference, and the future of data infrastructure.

Kepler Communications: Infrastructure for the Space Economy:

Kepler Communications was founded with a single powerful idea: space needs infrastructure, just like the internet does. Rather than positioning itself as a data center company — a label that evokes images of massive server farms and industrial cooling towers — Kepler CEO Mina Mitry describes the company as "a layer that provides network services for other satellites in space, or drones and aircraft in the sky below."

This distinction matters enormously for understanding the near-term business of orbital compute. While companies like SpaceX and Blue Origin capture headlines with their visions of massive space-based data centers in the 2030s, Kepler is building the foundational plumbing: the networking, the edge processing nodes, and the laser-linked backhaul that will make those future data centers possible.

Today, Kepler's constellation is already doing meaningful work. The company carries and processes data uploaded from the ground and collected by hosted payloads on its own spacecraft. As the sector matures, the roadmap expands to linking third-party satellites into a shared orbital processing network — essentially becoming the AWS of low Earth orbit, but for edge compute rather than cloud storage.

"Because we have the belief it's more inference than training, we want more distributed GPUs that do inference, rather than one superpower GPU that has the training workload capacity," said Mina Mitry, CEO of Kepler Communications.

This inference-first philosophy is strategically significant. Rather than building toward the power-hungry training workloads that dominate terrestrial AI infrastructure, Kepler is betting on distributed, always-on inference. "If this thing consumes kilowatts of power and you're only running at 10% of the time, then that's not super helpful. In our case, our GPUs are running 100% of the time," Mitry told TechCrunch — a compelling argument for orbital compute economics.
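Mitry's utilization argument comes down to simple arithmetic: if hardware costs roughly the same to keep in orbit whether it is busy or idle, the cost of each useful hour scales with the inverse of utilization. The sketch below illustrates this under that fixed-cost assumption. The function name `cost_per_useful_hour` and the normalized hourly cost of 1.0 are illustrative placeholders, not figures from Kepler.

```python
def cost_per_useful_hour(hourly_cost: float, utilization: float) -> float:
    """Amortized cost of one hour of useful work at a given duty cycle.

    Assumes the hardware costs the same per hour whether busy or idle.
    """
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in the range (0, 1]")
    return hourly_cost / utilization


# Hypothetical, normalized cost of operating one GPU for one hour.
HOURLY_COST = 1.0

# The two duty cycles mentioned in Mitry's quote: 10% and 100%.
for utilization in (0.10, 1.00):
    cost = cost_per_useful_hour(HOURLY_COST, utilization)
    print(f"utilization {utilization:.0%}: {cost:.1f}x cost per useful hour")
```

Under this simplified model, a GPU that is busy 10% of the time costs ten times as much per useful hour as one that is always busy, which is the gap the quote points to.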

Sophia Space: Solving the Thermal Challenge of In-Orbit AI:

If Kepler is building the orbital internet, Sophia Space is engineering the hardware that can survive the harsh environment of space without breaking the bank. The startup is developing passively-cooled space computers — systems designed to dissipate heat without the active cooling mechanisms that add weight, complexity, and cost to any spacecraft. This is not a minor engineering challenge: it is widely considered one of the key barriers to deploying large-scale data centers in orbit.

The partnership between Sophia and Kepler represents a significant technical proof-of-concept. Sophia will upload its proprietary operating system to one of Kepler's operational satellites and attempt to launch and configure it across six GPUs spanning two spacecraft. In a terrestrial data center, this kind of multi-node software deployment is routine — table stakes, as the industry calls it. In orbit, it has never been attempted before.

The stakes for Sophia extend well beyond the immediate test. The company has its first planned satellite launch scheduled for late 2027, and validating its software stack in a real orbital environment is a critical de-risking exercise. Every successful configuration, every stable boot sequence, every GPU that responds correctly to commands across the vacuum of space brings Sophia closer to that milestone.

Sophia CEO Rob DeMillo is clear-eyed about the broader opportunity. He points out that regulatory headwinds are already beginning to reshape the data center industry on Earth. Wisconsin recently adopted a ban on data center construction, and similar legislative proposals are gaining traction in Congress. For Sophia and its investors, every restriction on terrestrial data centers is a tailwind for the orbital alternative. "There's no more data centers in this country. It's gonna get weird from here," DeMillo said.

The Defense Connection: Orbital Compute Meets Missile Defense:

The commercial opportunity for orbital edge computing is compelling — but the national security dimension may be even more urgent. Satellite companies are increasingly planning future assets around the model that Kepler and Sophia are pioneering, according to Mitry. The driving use case: offloading processing for power-hungry sensors like synthetic aperture radar (SAR), which generates enormous volumes of data that need to be analyzed quickly.

The U.S. military is actively developing a new missile defense architecture predicated on satellites that can detect and track threats in real time. That requirement demands low-latency, high-bandwidth processing, which is exactly what orbital edge compute is designed to provide. Kepler has demonstrated a space-to-air laser communication link in a proof-of-concept for the U.S. government, a sign that the company's infrastructure is already being evaluated for defense-grade applications.

Edge processing — handling data at the point of collection rather than downlinking it to Earth — is where orbital compute delivers its clearest value proposition. Reduced latency. Lower bandwidth costs. Faster decision cycles. These are not abstract benefits; for missile defense, weather monitoring, maritime surveillance, and disaster response, they could be operationally decisive.
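A rough back-of-the-envelope comparison shows why processing at the point of collection can cut both latency and bandwidth. The sketch below compares the time to downlink a raw sensor scene with the time to downlink only the processed result. Every number in it (scene size, result size, link rate) is a hypothetical placeholder chosen for illustration, not a Kepler or SAR vendor specification, and the model ignores on-orbit processing time and ground-station contact windows.

```python
def transfer_seconds(size_bytes: float, link_bits_per_second: float) -> float:
    """Time to move a payload over a link, ignoring protocol overhead."""
    if link_bits_per_second <= 0:
        raise ValueError("link rate must be positive")
    return size_bytes * 8 / link_bits_per_second


# All values below are hypothetical placeholders.
RAW_SCENE_BYTES = 10e9         # a 10 GB raw sensor scene
RESULT_BYTES = 5e6             # a 5 MB processed result (e.g. detections)
DOWNLINK_BITS_PER_SEC = 500e6  # a 500 Mbit/s downlink

raw_s = transfer_seconds(RAW_SCENE_BYTES, DOWNLINK_BITS_PER_SEC)
edge_s = transfer_seconds(RESULT_BYTES, DOWNLINK_BITS_PER_SEC)

print(f"downlink raw scene:      {raw_s:.2f} s")
print(f"downlink processed data: {edge_s:.2f} s")
print(f"reduction factor:        {raw_s / edge_s:.0f}x")
```

With these placeholder values the raw scene takes 160 seconds to downlink and the processed result 0.08 seconds. Real savings depend on how much the on-orbit inference step shrinks the data and how long that step takes to run.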

The Competitive Landscape: How Kepler and Sophia Stand Apart:

The orbital computing sector is becoming increasingly crowded, but the players occupy distinctly different positions on the value chain. On one end sit the infrastructure giants: SpaceX and Blue Origin, both of which have announced ambitions to deploy large-scale, data center-style compute in orbit — but not until the 2030s at the earliest. On the other end are the specialist startups: Starcloud and Aetherflux, which are raising significant capital to build data center-grade facilities in space, complete with data center-style processors.

Kepler and Sophia occupy the middle ground — and arguably the most commercially viable near-term position. By focusing on distributed edge inference rather than centralized training, and on passively-cooled modular hardware rather than power-intensive monolithic systems, they are building for the orbital computing environment that exists today, not the one that may exist a decade from now.

Experts broadly agree that large-scale orbital data centers are a 2030s story. The first chapter — the one being written right now by Kepler and Sophia — is about proving that orbital edge processing works, that the software can be deployed and managed reliably in space, and that the economics make sense for real customers with real workloads.

What This Means for the Future of AI Infrastructure:

The broader implications of Kepler and Sophia's work extend far beyond the space industry. As artificial intelligence workloads continue to grow rapidly, and as terrestrial data center construction faces increasing regulatory, energy, and real estate constraints, the case for orbital compute strengthens. Space offers something Earth increasingly cannot: abundant solar power and freedom from zoning boards and grid capacity limits. Heat can be radiated directly to space, although, as Sophia's focus on passive cooling shows, shedding it in a vacuum is itself a hard engineering problem.

The inference opportunity is particularly significant. As AI models become more widely deployed, the ratio of inference compute to training compute will skew dramatically toward inference. Running inference workloads on distributed orbital GPU clusters — serving satellites, aircraft, ships, and remote sensors in real time — could emerge as one of the defining infrastructure stories of the late 2020s.

For enterprise customers, defense agencies, and intelligence services, the combination of Kepler's networking infrastructure and Sophia's thermally efficient compute hardware offers a pathway to capabilities that were simply impossible five years ago. Processing SAR imagery in orbit. Running anomaly detection on live sensor feeds. Coordinating autonomous systems without round-tripping data to the ground. These are not science fiction scenarios — they are the use cases Kepler's 18 current customers are actively exploring.

Conclusion: The Infrastructure Layer for the Space Economy:

The launch of Kepler's orbital compute cluster and its partnership with Sophia Space mark a genuine inflection point for the commercial space industry. Not because they represent the final form of space-based computing — they don't — but because they demonstrate that the foundational infrastructure is real, operational, and commercially viable today.

The road to large-scale orbital data centers runs through exactly this kind of unglamorous infrastructure work: deploying GPUs, proving software stacks, validating thermal management, and building the customer base that will justify the next generation of investment. Kepler and Sophia are doing that work now, quietly and methodically, while the industry's attention is focused on the bigger visions of the 2030s.

As Rob DeMillo suggested, things are going to get weird. But for those building the infrastructure of the orbital economy, weird is exactly where the opportunity lives.