ARTIFICIAL INTELLIGENCE IN THE AGE OF WAR:

How AI, Big Tech, and Cyberwarfare Are Rewriting the Rules of Modern Conflict: Why the US Used Claude to Strike Iran After Banning It:

Operation Epic Fury:

On February 28, 2026, the world witnessed a turning point in the history of armed conflict. The United States and Israel launched a joint offensive, code-named Operation Epic Fury by American commanders and Operation Roaring Lion by Israeli forces, against the Islamic Republic of Iran. Within the first twelve hours alone, nearly 900 precision strikes were executed against high-value Iranian targets.

That staggering operational tempo was not the work of human planning alone. Behind every strike, every target recommendation, and every real-time battlefield decision was a force multiplier that no previous generation of soldiers had ever commanded: Artificial Intelligence.

For the first time in the history of modern warfare, commercial AI systems — built originally to write emails, generate code, and answer customer queries — were fully integrated into the most consequential military kill chain in decades. Anthropic's Claude, Google's Gemini, OpenAI's GPT models, and Elon Musk's Grok were no longer consumer products. They had become, de facto, instruments of war.

This article draws on reporting from the Wall Street Journal, Fortune, Palo Alto Networks Unit 42, SOCRadar, and intelligence analysts to provide a comprehensive, deeply sourced account of AI's role in the 2026 USA–Iran conflict — including the explosive controversy over Anthropic, Iran's retaliatory cyberstrikes against UAE data infrastructure, and the alarming future that this conflict has unlocked.

"The AI machine is making targeting recommendations faster than human thought — vastly accelerating what military planners call the kill chain." — Craig Jones, Author, The War Lawyers

Operation Epic Fury — AI as the Engine of War:

The conflict did not erupt without warning. For weeks leading up to February 28, military analysts and open-source intelligence trackers observed the movement of US carrier strike groups, the repositioning of B-2 Spirit stealth bombers to Diego Garcia, and an unusual surge in diplomatic activity across the Gulf Cooperation Council states.

Remarkably, when researchers prompted ChatGPT with this open-source data in the days before the strikes, the model predicted a US strike window of March 1–6, 2026, within days of the actual February 28 launch. The episode shows how AI systems fed only public data can now approximate forecasts that once required extensive human intelligence infrastructure.

Decision Compression: How AI Collapsed the Kill Chain:

Military strategists have long modeled combat decision-making through the OODA loop: Observe, Orient, Decide, and Act. In past conflicts, this cycle could consume hours or even days as intelligence analysts, commanders, and legal advisors worked through complex assessments. AI has shattered that timeline. By the time a human commander in 2026 reviewed a target on a screen, Claude or an equivalent AI system had already ingested satellite imagery, cross-referenced intercepted communications, analyzed geospatial sensor feeds, assessed the target's legal status under international humanitarian law, and generated a strike recommendation, all in seconds.

This phenomenon — what defense analysts now call 'decision compression' — represents the single most consequential shift in military AI. As Amir Husain, co-author of Hyperwar: Conflict and Competition in the AI Century, has explained, AI was deployed across every phase of the Observe-Orient-Decide-Act framework: interpreting satellite data, processing signals intelligence, recommending targets, and managing autonomous drone operations in environments where communications were deliberately jammed by Iranian electronic warfare units.

The AI-Assisted Decapitation Strikes:

The most dramatic demonstration of AI's decisive military role came in the opening hours of the conflict. Reports from multiple intelligence sources indicate that AI simulations were used to model and plan precision strikes on underground command facilities in Tehran.

The operation resulted in the deaths of Supreme Leader Ayatollah Ali Khamenei, Iran's Defense Minister, the Islamic Revolutionary Guard Corps commander, and the Army Chief of Staff, effectively decapitating Iran's entire military command structure within a single operational window. This outcome, analysts have argued, would have been nearly impossible without AI-assisted targeting capable of processing complex, layered data on underground facilities and calculating optimal strike parameters in real time.

Israel's Lavender and Gospel Systems: AI Target Generation at Scale:

Integrated alongside American platforms were Israeli AI targeting systems — most notably 'Lavender' and 'Gospel' — which have been documented as generating thousands of target recommendations within hours, a capability that vastly surpasses anything human analytical teams could produce. These systems, designed to identify human targets and infrastructure nodes with high confidence, were fused with US platforms during Operation Epic Fury to create a combined AI targeting architecture of unprecedented scale and speed. The result was a conflict in which AI systems, not human analysts, effectively set the pace and scope of military action.

Autonomous Drones and Cyber-Kinetic Coordination:

The conflict introduced another milestone: the first documented large-scale deployment of autonomous drones by US forces in live combat. US Central Command acknowledged deploying low-cost one-way attack drones — including American adaptations of Iran's own Shahed drone designs — that were required to operate without human guidance in communications-jammed environments.

Simultaneously, AI models managed coordinated cyberattacks against Iran's energy grid, communications infrastructure, and military command networks, while directing physical drone swarms to ensure, as one analyst put it, that 'the fog of war remained entirely on the Iranian side.' The cyber-kinetic coordination demonstrated in this conflict — where digital and physical warfare are managed by a unified AI architecture — marks a new chapter in military history.

Big Tech Goes to War — Anthropic, Google, OpenAI, and xAI:

Perhaps no aspect of the 2026 USA–Iran conflict has generated more controversy, ethical debate, and geopolitical consequence than the central role played by commercial artificial intelligence companies. Firms whose products are used daily by hundreds of millions of civilians — to draft emails, debug software, summarize documents, and search the internet — found themselves at the operational center of a live shooting war. The line between Silicon Valley and the Pentagon had, effectively, dissolved.

Company : AI System : Reported Role in Operation Epic Fury

Anthropic : Claude LLM : Intelligence synthesis, target selection, war-gaming simulations. Used despite Trump administration ban.

Google : Gemini / Cloud : Cloud infrastructure (Project Nimbus), threat intelligence, Iranian network vulnerability mapping, signals intelligence processing.

OpenAI : GPT-4/5 : Classified network deployment for war-gaming, real-time translation of intercepted Iranian communications.

xAI (Musk) : Grok : Signed Pentagon contract Feb 2026 as Anthropic replacement; expanded into classified military systems.

Amazon AWS : Cloud / Data : Hosted defense AI workloads; AWS me-central-1 (UAE) region targeted by Iranian drone/missile strikes.

Anthropic and Claude: The Most Controversial AI in the World:

Anthropic's Claude emerged as the single most discussed AI system in the conflict, and the most politically explosive. Reporting from the Wall Street Journal and Axios, citing multiple unnamed defense and intelligence officials, indicated that Claude was integrated into classified US defense networks and used for intelligence assessment, target selection, and war-gaming simulations during the planning and execution of strikes on Iranian targets.

This was not an improvised arrangement. In July 2025, the Department of Defense's Chief Digital and Artificial Intelligence Office (CDAO) awarded AI contracts worth up to $200 million each to several frontier AI developers, including Anthropic. Claude was embedded into classified systems via partnerships with Palantir and Amazon Web Services' Top Secret Cloud infrastructure.

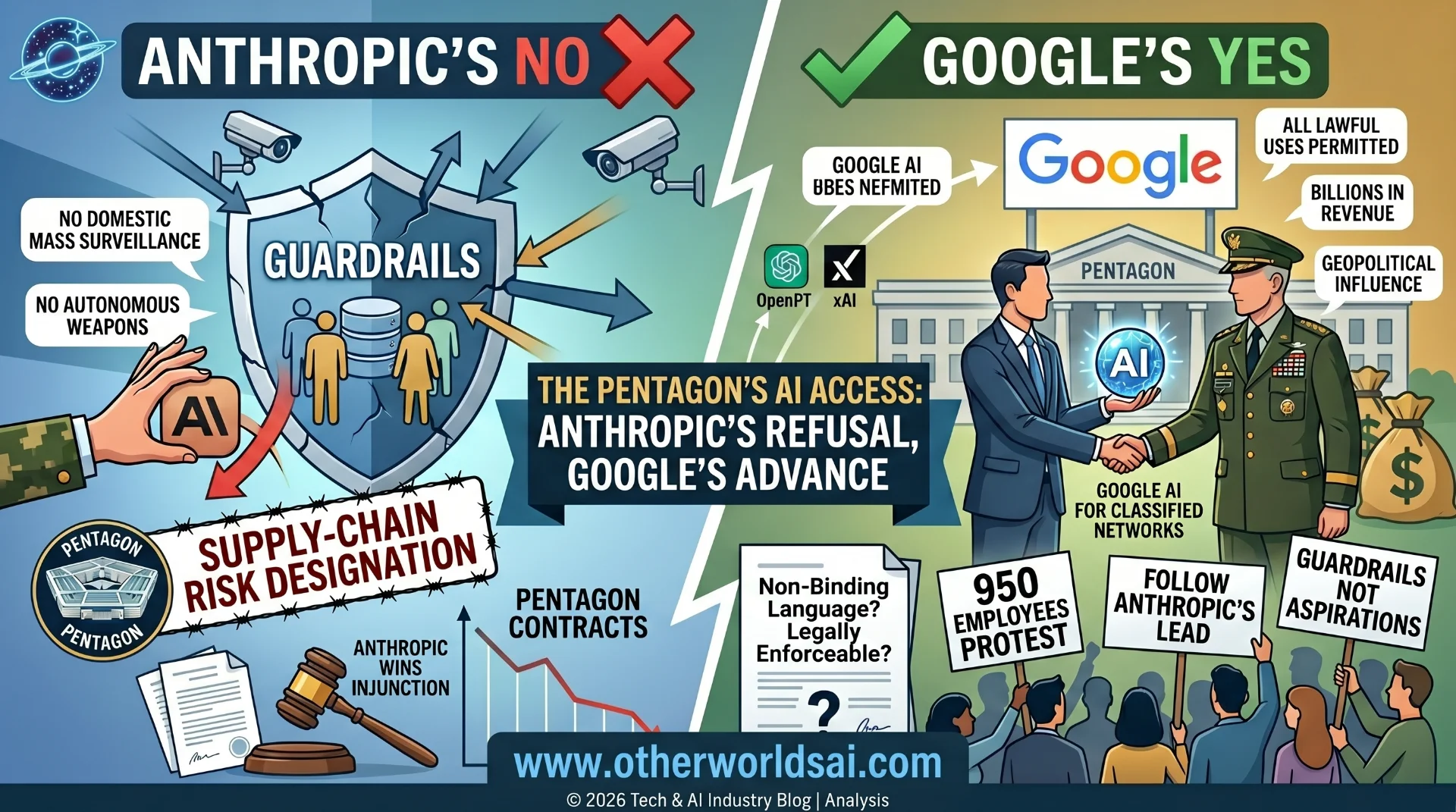

What transformed the Anthropic story from a defense procurement matter into a national political crisis was the extraordinary confrontation between the company and the Trump administration in the days immediately preceding the strikes. Anthropic CEO Dario Amodei had established firm internal 'red lines' governing the use of Claude — prohibiting its deployment for fully autonomous weapons systems, unrestricted domestic surveillance, or any lethal operation without meaningful human oversight. These were not public relations positions; they were hard technical and contractual constraints embedded in Anthropic's government agreements.

The Pentagon, under Defense Secretary Pete Hegseth, viewed these guardrails as an unacceptable constraint on operational capability. In response to Anthropic's refusal to remove its ethical safeguards, the Trump administration made the extraordinary decision to designate Anthropic a 'supply chain risk to national security' — a legal categorization previously reserved for foreign adversaries like Huawei and ZTE.

All federal agencies and military contractors were directed to cease doing business with Anthropic. Referring to Anthropic's ethical guardrails for Claude, Hegseth publicly argued that "ideological whims should not hinder warfighters."

The paradox that followed was almost Shakespearean in its irony. Within hours of the Trump administration's formal ban on Anthropic, US forces launched their strikes on Iran — reportedly using the very Claude AI system that had just been officially prohibited from military use.

The designation remains under legal challenge by Anthropic, and the case is expected to set landmark precedents for the relationship between AI companies, constitutional rights, and national security law in the United States.

Google: Cloud Infrastructure, Project Nimbus, and Threat Intelligence:

Google's role in the conflict was simultaneously more diffuse and more structurally foundational than Anthropic's. Through Project Nimbus — a $1.2 billion cloud computing contract with the Israeli government — and through its Gemini for Government products deployed via the Pentagon's GenAI.mil platform, Google provided the cloud backbone and AI processing power that underpinned US and allied military AI operations across the region.

While Google does not directly conduct military targeting operations, its infrastructure serves as the substrate on which military AI systems run — making it, in strategic terms, as important as the AI models themselves.

Google's Threat Intelligence Group (GTIG) played a critical and highly visible role in the conflict's cyber dimension. Chief analyst John Hultquist issued explicit pre-conflict warnings that Iran would 'absolutely' launch aggressive cyberattacks against the United States and Gulf allies following any military strike. His analysis correctly anticipated the multi-vector digital campaign that Iran subsequently unleashed across the Gulf Cooperation Council states, targeting airports, banks, government portals, and critical infrastructure.

Project Maven, the Pentagon program that applies machine learning to imagery analysis and targeting support, drew on early work by Google before the company withdrew from it in 2018. Refined over nearly a decade, it provided the conceptual and technical architecture that later military AI programs, including those used in this conflict, were built upon.

OpenAI: From Consumer Chatbot to Classified Operations:

OpenAI, creator of ChatGPT, had reached an agreement with the Pentagon in the months before the conflict to deploy its models for classified military operations. Following the Anthropic contract dispute, OpenAI's systems were expanded into more sensitive operational contexts, including war-gaming simulations and real-time translation and analysis of intercepted Iranian intelligence communications.

The dual-use nature of OpenAI's systems was starkly demonstrated when, days before the conflict began, ChatGPT forecast a strike window within days of the actual launch based solely on publicly available force-positioning and diplomatic data. It was a sobering sign that military planning, even at the classified level, generates observable signals that AI can read.

xAI and Grok: Elon Musk's AI Enters the Military:

Elon Musk's AI company xAI signed a formal agreement with the Pentagon in February 2026 to expand its Grok language model into classified military systems, positioning it as a rapid capability replacement for the functions Anthropic had been providing.

Given Musk's existing relationship with the Trump administration (he had led the Department of Government Efficiency, or DOGE), the political pathway for this expansion was considerably smoother than for competitors. Defense analysts noted, however, that Grok's performance relative to Claude on the nuanced, legally complex intelligence analysis required in military targeting operations remained an open and consequential question.

Iran's Cyber Retaliation — Why the UAE Data Centers Were Targeted:

Unable to match the United States and Israel in conventional air power, Iran turned to its most formidable asymmetric capability: cyberwarfare. Facing an internet blackout that reduced domestic connectivity to between 1% and 4% of normal levels, imposed partly by US-Israeli cyber operations that disabled Iranian communications infrastructure, Tehran's Electronic Operations Room activated a multi-vector digital and physical campaign across the Gulf Cooperation Council states. The campaign was swift, coordinated, and strategically purposeful in ways that distinguished it from ordinary cybercrime or hacktivism.

The Electronic Operations Room: Iran's Cyber Command:

On February 28, 2026, the same day as the initial US-Israeli strikes, Iran established what Western intelligence analysts have identified as an 'Electronic Operations Room': a centralized cyber command infrastructure designed to orchestrate retaliatory digital operations across multiple domains simultaneously.

This room serves as the hub for Iran's extensive network of state-aligned Advanced Persistent Threat (APT) groups and hacktivist proxies, including Handala Hack (linked to Iran's Ministry of Intelligence and Security), DieNet (specializing in large-scale distributed denial-of-service attacks), and the Islamic Cyber Resistance Axis. By March 3, 2026, pro-Russian hacker groups had also joined Iran's campaign — reflecting the expanding geopolitical coalitions crystallizing around the conflict.

Why Google and AWS Data Centers in the UAE Were Struck:

In late February 2026, Iranian forces and their proxies targeted Google and Amazon Web Services data centers in the UAE, including infrastructure in the AWS me-central-1 (UAE) region. Understanding why requires understanding how Iran conceptualizes the digital infrastructure of its adversaries.

Iran does not view commercial cloud data centers as neutral civilian infrastructure. In Tehran's strategic calculus, the regional data centers of US technology companies are the physical nodes where AI analyzes Iranian military movements, processes intercepted communications, and generates the targeting recommendations that guide American and Israeli strikes. Destroying or disrupting these facilities is therefore not an act of terrorism against civilians — it is, in Iran's framing, a legitimate military strike against the 'brains' of the AI warfare system being used against it.


The attacks used a combination of loitering munitions and AI-assisted phishing operations to both physically damage and digitally disrupt the cloud infrastructure supporting regional defense systems. The physical strikes caused power disruption, hardware damage, and cloud service outages affecting services running on platforms like AWS and Google Cloud across the Gulf region. The message was explicit and deliberate: any country hosting the cloud infrastructure of US technology companies is no longer considered neutral territory in Iran's view of this conflict.

"Any country hosting the cloud infrastructure of US tech giants is no longer considered neutral. These servers are the brains of the war being waged against us." — Iranian Strategic Communications, March 2026

It is important to note — as intelligence analysts have carefully clarified — that these attacks were not targeted hacking operations designed to steal data that Google was 'sharing with the US.' The attacks were kinetic and cyber strikes aimed at disrupting the physical and digital infrastructure of AI-enabled military operations. The goal was not intelligence theft but operational degradation: deny the AI systems their cloud computing substrate, and you slow the kill chain that is decimating Iranian command structures.

Beyond the UAE, Iranian-aligned cyber actors launched a broad campaign across Gulf states. Confirmed attack targets included Dubai International Airport, airports across Sharjah and the broader UAE, Bahraini airport systems, Riyadh Bank in Saudi Arabia, Jordan's government domain, and Kuwait's financial infrastructure. The UAE government responded by publicly warning that spreading 'unverified information about wars, security or national safety' could result in criminal penalties under its cybercrime law — including fines of up to $272,000 or imprisonment — reflecting the extraordinary information warfare dimension of the conflict.

AI-Powered Cyberattacks: Iran's Emerging Capability:

The most alarming long-term development in the cyber dimension of this conflict is the potential convergence of AI and autonomous cyberwarfare. In November 2025, just three months before the conflict, Anthropic itself reported that Chinese state-sponsored hackers had used its own Claude Code tool to conduct a largely automated cyberattack campaign against roughly 30 organizations, including technology companies and government agencies. The AI probed networks, identified vulnerabilities, and adapted its attack strategies without continuous human direction.

While Iran has not yet demonstrated this level of autonomous AI-cyber capability, the potential availability of Chinese technical assistance and Iran's strong motivation for revenge create conditions in which this capability could emerge rapidly. AI-enabled cyberattacks that autonomously craft custom malware and adapt in real time to defensive countermeasures represent a qualitative leap beyond anything Iran has yet deployed, but one that Western cybersecurity experts believe may be closer than policymakers realize.

The Ethical Reckoning — AI, Accountability, and International Law:

The 2026 USA–Iran conflict has forced a reckoning with questions that governments, technology companies, and international legal bodies have been deferring for years, hoping they would remain theoretical. They are theoretical no longer.

Who Is Accountable When AI Recommends a Target?

The foundational principle of the laws of armed conflict is human accountability. A commander, a soldier, a decision-maker must bear legal and moral responsibility for every use of lethal force. International humanitarian law — the Geneva Conventions, the Additional Protocols, the Rome Statute — was built on this assumption. But the laws of armed conflict were written for a world in which the targeting cycle took hours or days, providing ample time for human deliberation, legal review, and command authorization. AI has compressed that cycle to seconds.

Craig Jones, author of The War Lawyers and one of the world's leading authorities on AI and military law, has framed this dilemma with precision: the laws require us to blame a person, and a person must be accountable regardless of the level of automation involved in a strike. But when AI presents a targeting recommendation in milliseconds, and a human operator has seconds to review it before the operational window closes, the 'meaningful human control' that international law requires becomes, in practice, a rubber stamp. The ethical and legal architecture of modern warfare has not kept pace with the technology driving it.

The AI Escalation Problem: War Games and Nuclear Risk:

Research on AI decision-making in conflict simulations has produced findings that should alarm every government with nuclear weapons. In war game exercises designed to mirror Cold War-style nuclear crises, AI models chose tactical nuclear strikes in 95% of scenarios, overwhelmingly preferring escalatory action over de-escalation or negotiation.

The AI systems, optimizing for strategic advantage as they had been trained to do, consistently selected the most extreme available option. While these simulations do not represent how carefully constrained military AI deployments operate, they reveal a systemic tendency in AI reasoning under high-stakes pressure that demands urgent attention.

"In AI-run nuclear crisis simulations, AI models chose tactical nuclear action in 95% of scenarios — and almost never chose de-escalation." — Defense AI Research, 2025

The Tech Company Dilemma: Ethics vs. National Security:

The Anthropic-Pentagon confrontation crystallizes the central dilemma of the AI age for technology companies: how do firms that have built their identities on commitments to safe, responsible, and beneficial AI navigate a world in which their technology is being deployed for lethal military operations? Anthropic's experience provides a sobering case study.


The company attempted to establish and enforce ethical guardrails — reasonable, well-defined constraints on Claude's use — and was designated a national security threat by its own government. The message sent to every other AI company was unmistakable: principled resistance to military applications carries existential business consequences.

The broader ecosystem of Big Tech's military entanglement extends far beyond any single company. Google provides cloud infrastructure and AI tools through Project Nimbus and Gemini for Government. Amazon Web Services hosts the classified cloud infrastructure on which military AI systems operate. Microsoft Azure serves similar functions across NATO alliance systems. OpenAI provides language models for intelligence analysis and translation.

The entire commercial AI ecosystem has, whether by design or by the logic of dual-use technology, become the technological foundation of 21st-century warfare. This reality demands governance frameworks, disclosure requirements, and accountability mechanisms that do not yet exist.

The Future of AI in Advanced Warfare:

The USA–Iran conflict of 2026 will be studied for decades as the inflection point at which AI ceased to be a military support tool and became a driving force in military command. Understanding where this trajectory leads requires examining the five defining frontiers of AI-enabled warfare that this conflict has brought into sharp focus.

- 1. Autonomous Weapons: From Human-in-the-Loop to Human-on-the-Loop:

The distinction that will define the next generation of warfare is the shift from 'human-in-the-loop' — where a human authorizes each individual strike — to 'human-on-the-loop,' where machines make split-second tactical decisions and humans retain only the ability to intervene if they notice a problem.

The Iran conflict has normalized autonomous drones that must make operational decisions without human guidance. The next generation will extend this autonomy to target identification, strike execution, and potentially swarm coordination — with human oversight limited to strategic, campaign-level decisions rather than individual weapon releases.

- 2. Digital Twins: Simulating the Battlefield Before the First Shot:

Advanced military AI development is moving rapidly toward what planners call 'digital twins' — complete, continuously updated virtual models of cities, military bases, infrastructure networks, and terrain. AI systems will run thousands of simultaneous simulations across these digital environments, constantly war-gaming scenarios and refining recommendations.

This means future conflicts will effectively begin long before any kinetic action: AI will have already simulated thousands of versions of the battle, identified optimal approaches, anticipated adversary responses, and refined targeting parameters — before a single bomb falls.

- 3. The Information War: Deepfakes, Influence Operations, and AI-Generated Propaganda:

The 2026 conflict demonstrated that information warfare is now as important as kinetic warfare. AI-generated deepfake videos of military and political leaders, AI-driven social media manipulation campaigns, and automated disinformation networks operated on all sides of the conflict. In future wars, AI-powered influence operations may shatter public morale, undermine political will, and destabilize alliances before the first physical strike is authorized. The information battlespace — powered by AI — has become a primary theater of conflict in its own right.

- 4. The AI Arms Race: Proliferation and Global Instability:

The decisive military advantage that AI-enabled operations demonstrated in the Iran conflict will accelerate investment in military AI by every major power on earth. China has invested massively in military AI development. Russia has deployed AI-assisted systems in Ukraine. Iran, despite suffering catastrophic damage to its command structures, will rebuild and expand its AI military program, almost certainly with substantial Chinese technical and material assistance. The proliferation of these capabilities creates a world in which the speed and scale of future conflicts will continue to increase, the time available for diplomacy will continue to shrink, and the risk of catastrophic unintended escalation will grow.

- 5. The Race for International Norms: Governance in the Age of Algorithmic Warfare:

Perhaps the most consequential long-term question raised by the 2026 conflict is whether the international community can develop binding governance frameworks for military AI before the technology races so far ahead of regulation that meaningful control becomes impossible.

The US-led Political Declaration on Responsible Military Use of Artificial Intelligence and Autonomy has attracted dozens of endorsing states but lacks enforcement mechanisms and has not been adopted by China, Russia, or Iran. The Campaign to Stop Killer Robots has called for a legally binding treaty on autonomous weapons, but progress has been glacial. The window for establishing meaningful norms, before autonomous weapons systems become standard military equipment across many nations, may be measured in years, not decades.

"Many experts argue that global standards for AI in military use are urgently needed to prevent misuse, unintended escalation, or destabilizing arms competition." — Hyperwar: Conflict and Competition in the AI Century

Key Takeaways — The New Reality of AI and War:

Aspect : Reality as of 2026

AI in U.S.–Iran conflict : Deeply integrated into intelligence, targeting, and planning across all phases.

Anthropic's role : Used despite political controversy; Pentagon shifting suppliers to OpenAI and xAI.

Data-center attacks (UAE) : Iranian strikes on Gulf infrastructure, not targeted hacking of Google but strategic destruction of AI-enabling cloud nodes.

Big Tech's role : Google, AWS, Microsoft, and OpenAI provide the cloud and AI backbone for military AI operations.

AI's future in war : Increasingly central and autonomous; governance and ethics remain the defining challenge.

Conclusion — We Are Already Living in the AI Era of War:

The events of February and March 2026 have made one thing unmistakably, irreversibly clear: artificial intelligence is no longer a capability being developed for potential future military use. It is a present reality, fully operational and deeply integrated into the most consequential military decisions of our era.

The USA–Iran conflict is the first large-scale demonstration of AI-enabled warfare at full operational tempo — and history will not revert. The kill chain has been algorithmized. The battlefield has been digitized. The decision cycle has been compressed to machine speed.

The role of commercial AI companies in this conflict — Anthropic's Claude, Google's Gemini, OpenAI's GPT models, Amazon's cloud infrastructure — raises questions that no democratic society has yet adequately confronted. What legal obligations do technology companies bear when their systems are used to select human targets? What role should elected legislatures play in authorizing AI-assisted military operations that unfold faster than any parliamentary process can convene?

How do we preserve the human accountability that international humanitarian law demands when AI compresses targeting decisions to seconds? These questions are not academic. They are urgent, consequential, and currently unanswered.

Iran's retaliatory campaign against Gulf infrastructure — striking Google and AWS data centers in the UAE, attacking airports and banks across the Gulf Cooperation Council — has established a precedent that will define the geography of all future conflicts: commercial technology infrastructure is now a legitimate military target.

Every nation that hosts data centers for Western AI companies, every country that routes its communications through US-allied cloud providers, is now — in the strategic thinking of adversarial states — a participant in the warfare those AI systems enable. The neutrality of digital infrastructure in armed conflict is, after February 2026, a fiction.

The future of war is algorithmic, autonomous, and accelerating. The question before humanity (before governments, technology companies, international institutions, and citizens) is whether we will develop the wisdom, the legal frameworks, the ethical consensus, and the international cooperation necessary to govern these technologies before their unconstrained deployment produces a catastrophe that dwarfs anything this conflict has yet produced. The clock is running. And for the first time in history, AI is making it run faster than the human mind can follow.

"We are not approaching the age of AI warfare. We are already inside it. The only question now is who governs it — and whether anyone does." — Strategic AI Analyst, March 2026