"The "Clash of Giants"

Google expands Pentagon’s access to its AI after Anthropic’s refusal:

Google Defies Employee Protest to Sign Pentagon AI Deal After Anthropic Said No.

Google has granted the U.S. Department of Defense access to its AI for classified networks, essentially allowing all lawful uses, according to multiple news reports.

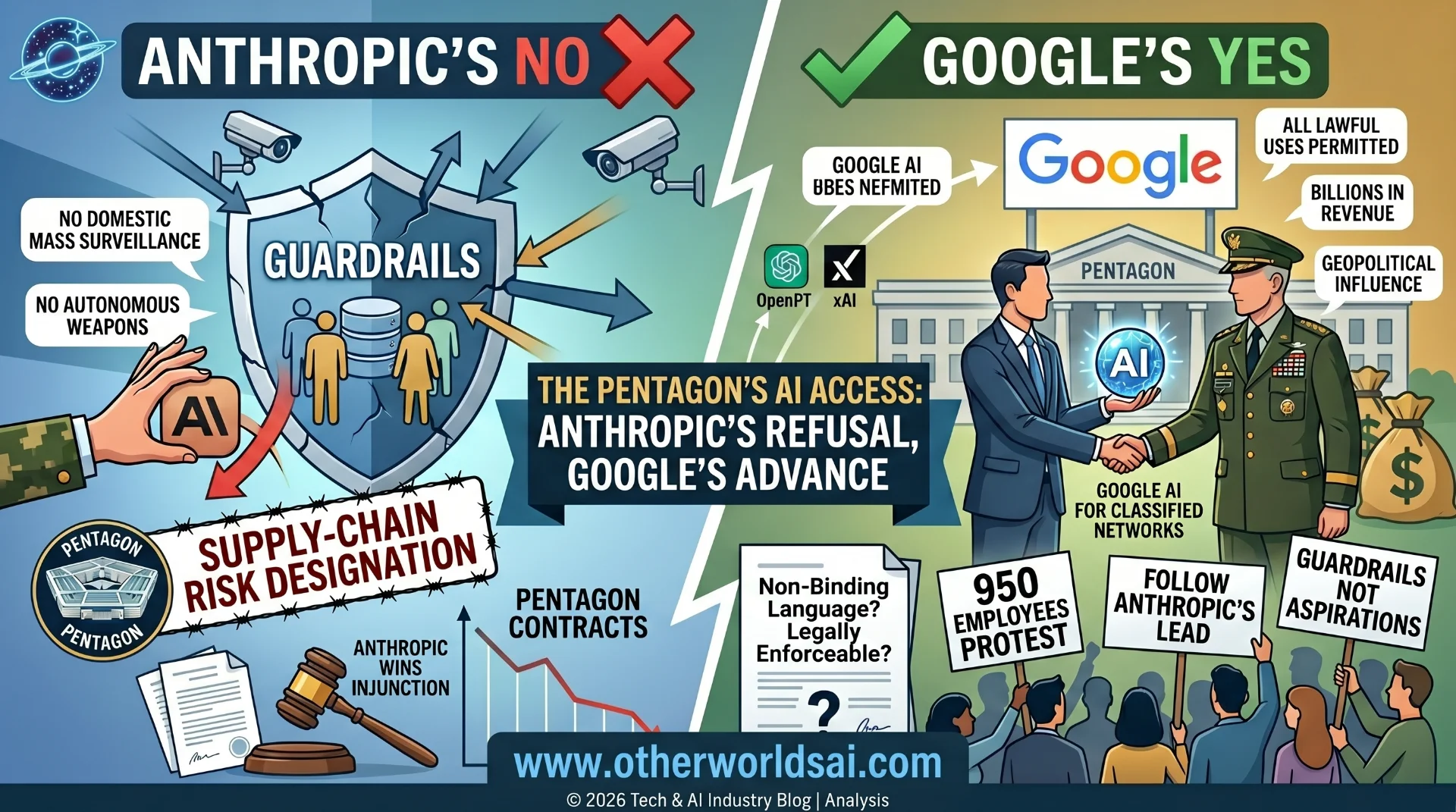

This deal follows Anthropic’s public stand against the Trump administration after the model maker refused to grant the DoD the same terms. The Pentagon wanted unrestricted use of AI, whereas Anthropic wanted guardrails to prevent its AI from being used for domestic mass surveillance and autonomous weapons.

Because Anthropic refused those use cases, the DoD branded the model maker a “supply-chain risk” — a designation normally reserved for foreign adversaries. Anthropic and the DoD are now embroiled in a lawsuit, with a judge last month granting Anthropic an injunction against the designation while the case proceeds.

Google is the third AI company to try to turn Anthropic’s loss into its own gain. OpenAI immediately signed a deal with the DoD, as did xAI. Google’s agreement includes some language saying that it doesn’t intend for its AI to be used for domestic mass surveillance or in autonomous weapons, The Wall Street Journal reports, which is similar to contract language with OpenAI. But it is unclear whether such provisions are legally binding or enforceable, per the WSJ.

Google entered this deal even though 950 of its employees have signed an open letter asking it to follow Anthropic’s lead and not sell AI to the Defense Department without similar guardrails. Google did not respond to a request for comment.

Google Opens Pentagon's Door to AI — After Anthropic Slammed It Shut:

When Anthropic drew a line in the sand, Google stepped right over it. In a move that has ignited fresh controversy at the intersection of artificial intelligence, national security, and corporate ethics, Google has granted the U.S. Department of Defense access to its AI tools on classified networks for essentially all lawful uses.

The deal positions Google as the third major AI company — after OpenAI and Elon Musk's xAI — to seize on Anthropic's very public refusal to hand the Pentagon a blank check on AI. The stakes could not be higher: the questions being asked here will define the ethical boundaries of AI deployment for years to come.

Anthropic's Refusal — The Spark That Started Everything:

The story begins with Anthropic taking a stand that few expected and fewer followed. When the U.S. Department of Defense approached Anthropic — maker of the Claude AI model — seeking unrestricted access to its AI for classified government use, Anthropic said no. The sticking points were significant: the Pentagon wanted blanket permission for all lawful uses of the AI, while Anthropic insisted on guardrails to prevent its technology from being used for domestic mass surveillance or autonomous weapons systems.

Rather than negotiate, the Pentagon retaliated. The DoD branded Anthropic a "supply-chain risk" — a designation that had previously been reserved almost exclusively for foreign adversaries and hostile state actors. It was an extraordinary escalation against an American AI company that had simply declined to waive its own ethical guidelines.

The Pentagon branded Anthropic a "supply-chain risk" — a label normally reserved for foreign adversaries — after the company refused to allow unrestricted AI use for domestic surveillance and autonomous weapons.

Anthropic fought back in court. A judge granted Anthropic an injunction last month against the supply-chain risk designation while the legal case proceeds — a significant early win, but one that has done little to slow the Pentagon's pursuit of AI partnerships elsewhere.

Google Steps In — What the Pentagon Deal Actually Covers:

Google's agreement with the Department of Defense grants access to its AI tools across classified networks, essentially permitting all lawful uses of the technology. The breadth of this access is substantial — classified networks handle some of the most sensitive military, intelligence, and national security operations in the United States government.

The deal does include some limiting language. According to reporting by The Wall Street Journal, Google's contract states that it does not intend for its AI to be used for domestic mass surveillance or in autonomous weapons systems — language that echoes similar provisions in OpenAI's Pentagon contract. However, a critical caveat looms large: it is unclear whether these provisions are legally binding or enforceable.

"It is unclear whether such provisions are legally binding or enforceable." — The Wall Street Journal.

In other words, the guardrails that Google has written into the deal may be aspirational rather than contractual. Unlike Anthropic, which walked away from the table entirely when it could not secure enforceable protections, Google chose to proceed — accepting language that critics argue provides more optics than oversight.

The AI Race to the Pentagon — OpenAI, xAI, and Now Google:

Google is not the first to fill the vacuum left by Anthropic's refusal — it is the third. The sequence of events has unfolded with remarkable speed, revealing just how intensely the major AI companies are competing for lucrative defense contracts.

• OpenAI: Signed a deal with the DoD almost immediately after Anthropic's refusal, becoming the first major AI lab to formalize unrestricted access for classified government use.

• xAI: Elon Musk's AI company followed shortly after, also securing Pentagon access to its AI models for classified networks.

• Google: Now the latest entrant, granting similarly broad access and becoming the third major player to capitalize on Anthropic's principled stand.

The pattern is striking and intentional. Each deal has been framed, at least in part, as an opportunity created by Anthropic's absence — a strategic gap in Pentagon AI supply that competitors have been racing to fill. The commercial incentives are enormous: defense AI contracts represent billions in potential revenue, long-term government relationships, and significant geopolitical influence.

Rebellion From Within — 950 Google Employees Say No:

Not everyone at Google is comfortable with the direction the company is taking. Before the deal was finalized, 950 Google employees signed an open letter — published at notdivided.org — urging the company to follow Anthropic's example and refuse to sell AI to the Department of Defense without meaningful, enforceable guardrails.

The employee petition is a significant act of internal dissent, reflecting deep unease among Google's own workforce about the ethical implications of deploying AI in classified military and intelligence contexts. The signatories explicitly called on Google leadership to take the same principled stance as Anthropic — prioritizing ethical boundaries over defense contracts.

950 Google employees signed an open letter urging the company to follow Anthropic's lead and refuse Pentagon access without enforceable guardrails — a rare and highly public act of internal dissent.

Google did not respond to a request for comment on the deal or the employee petition. That silence has only amplified the controversy, leaving the public — and Google's own staff — without any official explanation of how the company weighed the ethical concerns raised by nearly a thousand of its own employees before proceeding.

The Core Ethical Divide — Guardrails vs. Blank Checks:

At the heart of this controversy is a fundamental question about what responsible AI deployment looks like in a national security context. Anthropic's position was clear: it would not allow its AI to be used for domestic mass surveillance or fully autonomous weapons systems, full stop. These were not negotiating positions. They were ethical red lines.

The Pentagon's position was equally clear: it wanted unrestricted access for all lawful purposes, with no external company determining what the U.S. military could or could not do with AI tools it was paying for. From the DoD's perspective, adding corporate guardrails to classified government operations represented an unacceptable constraint on national security decision-making.

Google, OpenAI, and xAI have each attempted a middle path — including limiting language in their contracts while still granting the broad access the Pentagon sought. Whether that middle path represents genuine ethical responsibility or convenient cover for a lucrative deal remains the central debate.

Why Enforceability Matters More Than Intent:

The enforceability question is not a legal technicality — it is the whole ballgame. Contractual language that expresses intent without creating legal obligation is, in practical terms, a suggestion.

Anthropic's stance was that without enforceable protections, no amount of limiting language could guarantee that its AI would not ultimately be used in ways it found morally unacceptable. That is precisely why Anthropic walked away — and precisely why critics argue that Google, OpenAI, and xAI have not actually solved the problem Anthropic identified.

What This Means for the Future of AI Ethics and National Security:

The Google-Pentagon deal is more than a business transaction — it is a referendum on what kind of industry the AI sector wants to be. The rapid succession of deals following Anthropic's refusal suggests that for most major AI companies, the commercial opportunity of defense contracts outweighs the ethical risks of open-ended military AI deployment.

For Anthropic, the situation is both a vindication and a warning. Its legal injunction against the supply-chain risk designation shows that the courts are willing to protect AI companies that push back against government overreach. But the flood of competitor deals making the same access available to the DoD suggests that Anthropic's stand, however principled, may have limited practical effect on what AI capabilities the Pentagon ultimately deploys.

For the broader AI industry, the stakes are existential. The decisions being made today — about who gets access to AI, under what conditions, and with what legal protections — will shape the relationship between artificial intelligence and state power for generations. Whether that relationship is governed by enforceable ethics or aspirational language may be the most consequential question the AI industry has ever faced.

© 2026 Tech & AI Industry Blog | Sources: TechCrunch, The Wall Street Journal, notdivided.org