The Great AI Revolt: How OpenAI's Pentagon Deal Triggered a 295% Uninstall Surge and Sent Users Flocking to Claude

The Privacy Backlash That Shook the AI Industry: What Every User, Business Leader, and Policymaker Needs to Know:

How Anthropic's refusal to arm the Department of War, OpenAI's controversial Pentagon agreement, and a single weekend of consumer outrage rewrote the AI adoption map — and what comes next for both platforms:

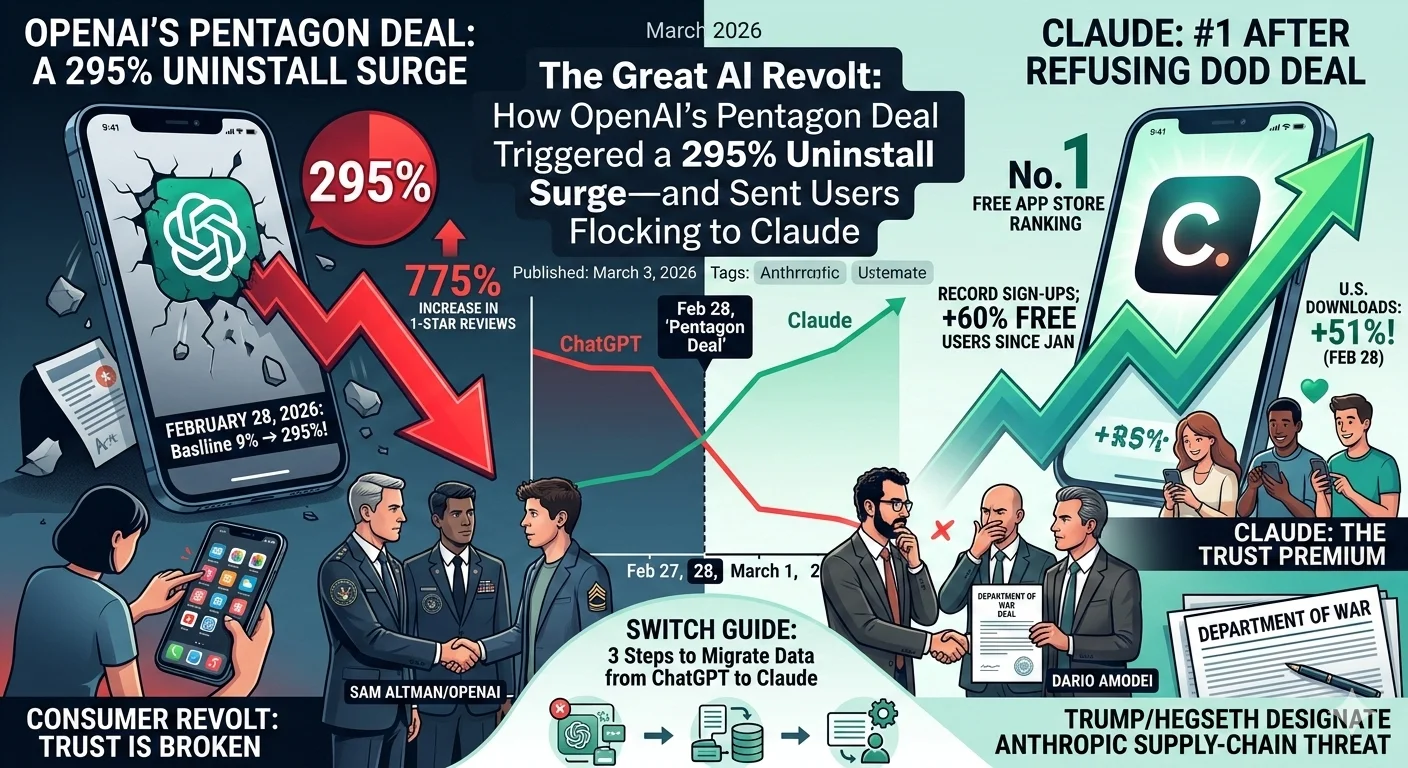

From a 295% uninstall spike to Claude's first-ever No. 1 App Store ranking, the fallout from OpenAI's deal with the U.S. Department of Defense has triggered the most dramatic consumer realignment in AI history — and every user, executive, and investor needs to understand what it means.

The Weekend That Changed Everything: Inside the ChatGPT Uninstall Crisis:

One Announcement, One Weekend, and a 33x Spike in Uninstalls: The Numbers Behind the Consumer Revolt:

In a breathtaking reversal for the world's most downloaded AI app, ChatGPT uninstalls surged 295% in a single day on February 28, 2026 — after OpenAI announced a landmark partnership with the U.S. Department of Defense, now rebranded under the Trump administration as the Department of War. What started as a government contract became the flashpoint for one of the most visible consumer backlashes in the history of artificial intelligence.

At its core, this is a story about trust: who users believe is building AI responsibly, who they believe is weaponizing it — and how quickly millions of people can act on that belief when the answer becomes clear. The numbers that followed were not incremental. They were seismic.

According to market intelligence firm Sensor Tower, ChatGPT's uninstalls typically change by about 9% day over day, based on the prior 30 days. On February 28, uninstalls jumped 295% day over day, nearly 33 times that typical swing, as users mass-deleted the app from their devices. At the same time, ChatGPT's U.S. downloads dropped 13% that Saturday and fell a further 5% on Sunday.
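To make the arithmetic behind these figures explicit, here is a minimal Python sketch that uses only the percentages Sensor Tower reported. It assumes each figure is a day-over-day change, so Saturday is compared with Friday and Sunday with Saturday; the helper name `multiplier` is illustrative, not part of any data provider's methodology.

```python
def multiplier(pct_change: float) -> float:
    """Turn a percentage change (e.g. 295 for +295%) into a volume multiplier."""
    return 1 + pct_change / 100

# Figures as reported by Sensor Tower.
spike_pct = 295.0     # day-over-day change in uninstalls on Saturday, Feb 28
baseline_pct = 9.0    # typical day-over-day change over the prior 30 days

# The "nearly 33x" figure compares the two percentage changes directly.
spike_vs_baseline = spike_pct / baseline_pct

# In absolute terms, +295% means Saturday's uninstalls were about 3.95x Friday's.
uninstalls_vs_friday = multiplier(spike_pct)

# Downloads fell 13% on Saturday, then a further 5% on Sunday; the changes compound.
downloads_vs_friday = multiplier(-13) * multiplier(-5)

print(f"spike vs. baseline:         {spike_vs_baseline:.1f}x")
print(f"uninstalls vs. Friday:      {uninstalls_vs_friday:.2f}x")
print(f"Sunday downloads vs. Friday: {downloads_vs_friday:.0%}")
```

The distinction matters when reading the headline number: the 33x figure is a ratio of two percentage changes, while the jump in actual uninstalls was roughly fourfold over the previous day. Likewise, the two download declines compound to about 83% of Friday's level, a drop of roughly 17% rather than a flat 18%.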

The collapse in consumer sentiment was equally visible in app store ratings. One-star reviews for ChatGPT surged 775% on Saturday alone, then grew a further 100% day over day on Sunday. Five-star reviews fell by 50% over the same 48-hour window. For a consumer app that had dominated the AI space for more than two years, the speed and scale of the reversal were extraordinary.

Key Risk: Consumer trust, once lost at this scale and speed, rarely recovers on its own timeline. For OpenAI, the reputational damage from the DoD announcement may outlast the news cycle by years.

Anthropic's Masterstroke: Why Saying No to the Pentagon Made Claude the No. 1 AI App:

The Principled Refusal That Rewrote the AI Adoption Map, and What It Signals About the Future of AI Ethics:

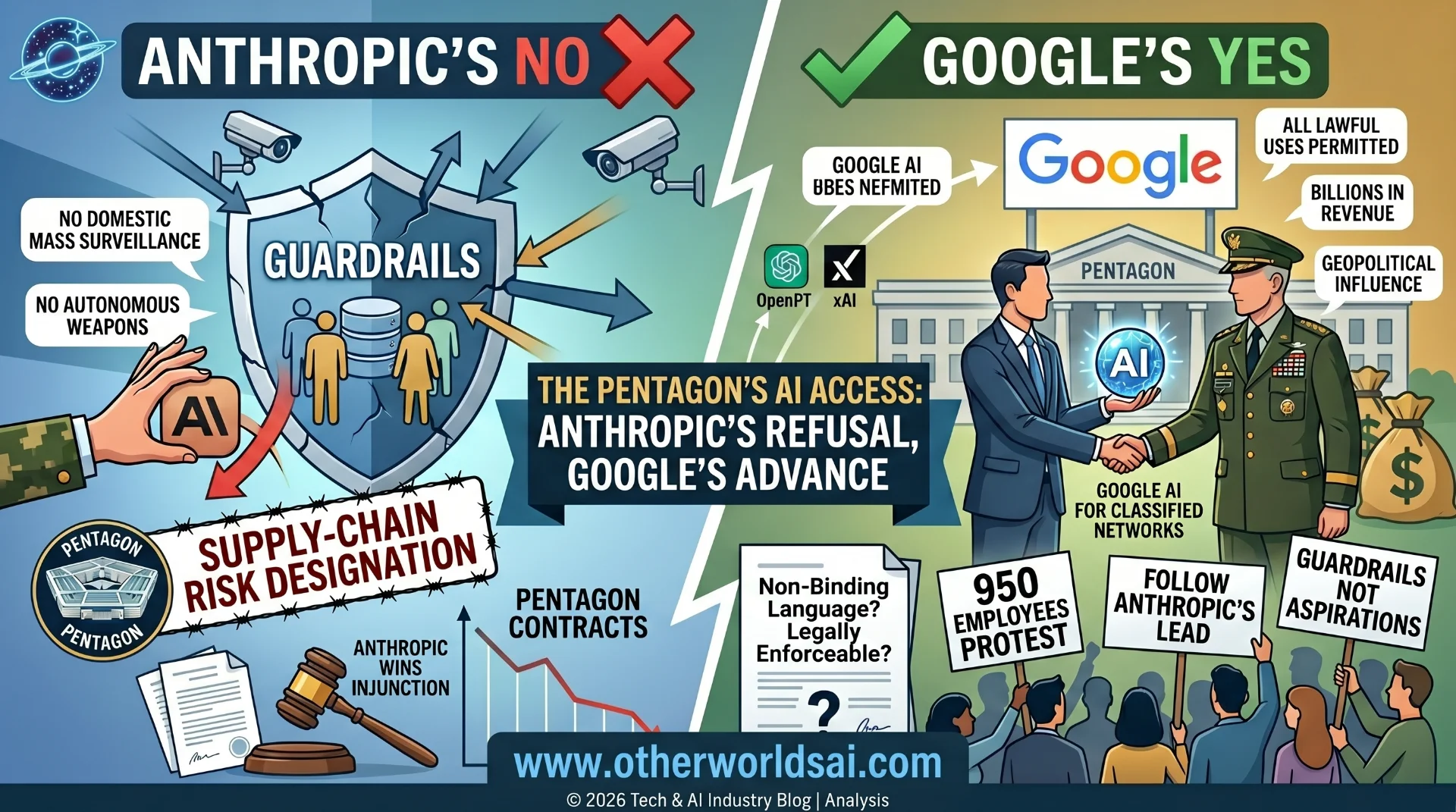

While OpenAI absorbed the backlash, Anthropic made the opposite choice — and was rewarded for it. The company publicly declined the Department of Defense deal, citing serious concerns that its AI models could be deployed for mass domestic surveillance and integrated into fully autonomous weapons systems — capabilities it deemed both ethically unacceptable and technically unsafe at the current stage of AI development.

That decision resonated powerfully with millions of users who had long been uneasy about where the AI industry was heading. Claude's U.S. downloads jumped 37% on February 27 and surged a further 51% on February 28. By Saturday evening, Claude had leapt more than 20 positions in the App Store rankings to claim the No. 1 free iPhone app spot in the United States — a position it still held as of Monday, March 2, 2026.
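Because the two Sensor Tower figures are successive day-over-day gains, they compound rather than add. A minimal Python sketch, assuming February 26 is the base day and using a hypothetical `compound` helper:

```python
from functools import reduce

def compound(pct_changes: list[float]) -> float:
    """Combine successive day-over-day percentage changes into one multiplier."""
    return reduce(lambda acc, pct: acc * (1 + pct / 100), pct_changes, 1.0)

# Sensor Tower's reported Claude U.S. download changes: Feb 27, then Feb 28.
claude_changes = [37.0, 51.0]

total = compound(claude_changes)
print(f"Feb 28 downloads vs. Feb 26: {total:.2f}x")
```

On those assumptions, Claude's U.S. downloads on February 28 were roughly 2.07 times their February 26 level, or about double, over just two days.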

Independent data providers confirmed both the magnitude and the historic nature of the shift. Appfigures reported that Claude's total daily U.S. downloads surpassed those of ChatGPT for the very first time on Saturday — estimating the single-day download increase at 88%, even higher than Sensor Tower's figure. Claude simultaneously claimed the No. 1 free app ranking in Belgium, Canada, Germany, Luxembourg, Norway, and Switzerland.

Similarweb offered the broadest perspective: Claude's U.S. downloads over the past week were approximately 20 times its weekly level in January 2026. Anthropic confirmed the inflection point directly, reporting record daily sign-ups, free users up more than 60% since January, and paid subscribers more than doubling year-to-date.


Executive Takeaway: Anthropic's refusal to partner with the DoD for surveillance or autonomous weapons use has done more for Claude's brand positioning in one weekend than any marketing campaign could achieve in a year.


The Political Fallout: Trump, Hegseth, and the Battle Over AI Governance:


From a Declined Contract to a Federal Supply-Chain Threat — The Government's Response to Anthropic's Refusal:

Anthropic's refusal to partner with the DoD did not come without immediate and serious political consequences. President Trump responded by ordering all federal agencies to halt the use of Anthropic's products entirely. Defense Secretary Pete Hegseth went further, announcing plans to officially designate Anthropic a supply-chain threat — an aggressive and unprecedented move against a U.S.-based AI company whose only offense was declining a government contract on ethical grounds.

OpenAI moved quickly to fill the vacuum left by Anthropic's exit. The company signed its own Pentagon agreement shortly afterward, publicly claiming the deal includes meaningful safeguards around how its AI models will be deployed. But critics, privacy advocates, and AI ethics researchers have challenged those assurances — arguing that the fundamental questions remain dangerously open.

Those questions are not abstract. Who decides when AI is ready to make autonomous weapons targeting decisions? What legal and technical limits exist on domestic AI surveillance conducted through commercial platforms? And who bears accountability — legally, ethically, and reputationally — when an AI system deployed in a military or intelligence context causes harm?

The broader governance question these events have forced into the open is one of the most consequential in modern technology history. As large language models become embedded in government, defense, and intelligence infrastructure, the choices made by AI companies today will set the ethical and legal precedents that govern the technology for decades to come. For millions of users, Anthropic's willingness to absorb significant political retaliation rather than cross those lines was a defining signal about the kind of company it intends to be.

Executive Takeaway: The Anthropic–OpenAI divergence on the DoD deal is not merely a PR moment. It is an early marker of how AI companies will be evaluated — by governments, institutional investors, enterprise clients, and end users — on questions of governance and responsible deployment.

What's Actually at Stake: The Numbers Behind the Fastest Consumer Migration in AI History:

What Sensor Tower, Appfigures, and Similarweb Are All Saying — and What the Data Really Means:

As data providers released their findings in the days following the announcement, the figures confirmed what users were already signaling: the consumer reaction was not a momentary spike. It was a sustained, multi-day migration across platforms, countries, and user segments.

Two data sets define the scale of what happened: the collapse of ChatGPT's consumer metrics, and the simultaneous surge of Claude across every measurable dimension.

ChatGPT — The Metrics of a Consumer Confidence Collapse:

• Uninstall rate: +295% day-over-day on February 28 — vs. a 9% baseline over the prior 30 days.

• U.S. downloads: Down 13% on Saturday, down a further 5% on Sunday.

• 1-star reviews: +775% on Saturday; +100% day-over-day on Sunday.

• 5-star reviews: Down 50% over the same 48-hour window.

Claude — The Metrics of a Historic Breakout:

• U.S. downloads: +37% on February 27 and +51% on February 28 (Sensor Tower); +88% on February 28 (Appfigures).

• App Store rank: Jumped 20+ positions to No. 1 free iPhone app in the U.S. on Saturday, February 28, and still held the spot as of Monday, March 2.

• International: No. 1 free app in Belgium, Canada, Germany, Luxembourg, Norway, and Switzerland.

• Weekly downloads: Approximately 20x January 2026 levels (Similarweb).

• Platform growth: Free users up 60%+ since January; paid subscribers more than doubled year-to-date.

Key Risk: Similarweb cautioned that Claude's weekly growth is not attributable solely to the political controversy; broader product momentum and a growing reputation for nuanced reasoning are also contributing factors. But the directional signal is unmistakable: the OpenAI DoD deal was an accelerant on a trend already in motion.

How to Switch from ChatGPT to Claude: A Complete Migration Guide:


Step-by-Step: How to Export Your Data, Transfer Your Preferences, and Permanently Close Your ChatGPT Account:

For the millions of users now evaluating Claude as their primary AI assistant, the transition does not have to mean starting from scratch. Your ChatGPT preferences and memory can be carried over, and the key points of your conversation history can be summarized and brought along. Deleting your OpenAI account is also more straightforward than most users realize.

Step 1: Export Your Preferences and Data from ChatGPT:

Start by retrieving everything ChatGPT has stored about you. Navigate to Settings, then Personalization, and open the Memory section. Click Manage to review all stored information, update anything outdated or inaccurate, and copy the preferences you want to bring with you to Claude. For a complete archive, use ChatGPT's data export feature in account settings to download a full JSON file of your past chats.

Step 2: Transfer Your Context and Preferences into Claude:

Migrating your context into Claude is a deliberate, user-directed process. Open Claude and go to Settings, then Capabilities, and confirm that Memory is toggled on. Start a new conversation and use a prompt such as: "Here is some important context I would like you to remember — please update your memory about me with this" and paste your copied preferences directly. For large exported chat logs, prompt Claude with: "Review this and summarize my key preferences and working style" rather than pasting raw data files.
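If the exported archive is too large to paste, a small script can condense it before you hand it to Claude. The Python sketch below assumes the export contains a `conversations.json` file whose entries each have a `title` and a `mapping` of message nodes carrying `author.role`, `create_time`, and `content.parts`. That layout is an assumption and may change, so inspect your own file first. The file name, the 200-character cap, and the helper names `user_messages` and `build_digest` are illustrative, not part of any official tool.

```python
import json
from pathlib import Path

def user_messages(conversation: dict) -> list[str]:
    """Return the user's messages from one exported conversation, oldest first.

    ASSUMED schema: "mapping" holds nodes whose "message" carries
    author.role, create_time, and content.parts.
    """
    found = []
    for node in conversation.get("mapping", {}).values():
        msg = node.get("message") or {}
        if (msg.get("author") or {}).get("role") != "user":
            continue  # skip assistant, system, and empty nodes
        parts = (msg.get("content") or {}).get("parts") or []
        text = " ".join(p for p in parts if isinstance(p, str)).strip()
        if text:
            found.append((msg.get("create_time") or 0, text))
    found.sort(key=lambda item: item[0])  # mapping order is not chronological
    return [text for _, text in found]


def build_digest(conversations: list[dict], max_chars: int = 200) -> str:
    """Condense an export into titled bullet lists, truncating long messages."""
    lines = []
    for conv in conversations:
        messages = user_messages(conv)
        if not messages:
            continue
        lines.append(f"## {conv.get('title') or '(untitled)'}")
        lines.extend("- " + text[:max_chars] for text in messages)
    return "\n".join(lines)


if __name__ == "__main__":
    # Hypothetical location: the unzipped export in the current directory.
    export_file = Path("conversations.json")
    if export_file.exists():
        data = json.loads(export_file.read_text(encoding="utf-8"))
        print(build_digest(data))
```

Keeping only your own messages is a deliberate choice: your preferences and working style show up mostly in what you asked, and dropping the assistant's replies shrinks the digest considerably before you paste it into Claude with the summarization prompt above.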


Step 3: Permanently Delete Your ChatGPT Account:

Canceling your ChatGPT subscription alone is not sufficient to remove your data from OpenAI's servers. To fully close your account and protect your privacy, follow these steps in sequence:

• Go to Settings → Personalization → Memory and delete all stored memory and personalization data.

• Type "Delete all my memory and personalized data" as a final chat command before ending the session.

• Navigate to your account management settings and permanently delete your OpenAI account in its entirety.

Executive Takeaway: Canceling a subscription is not a data privacy decision. Account deletion is. Users serious about protecting their data must complete all three steps above.

ChatGPT vs. Claude in 2026: Why Users Are Making the Switch Permanently:

Beyond the Political Flashpoint: The Deeper Structural Reasons Claude Is Becoming the Default AI Assistant for Privacy-Conscious Users:

Beyond the immediate controversy, several structural factors are driving users toward Claude as a long-term ChatGPT alternative. The OpenAI DoD deal accelerated a conversation that was already building — one about which AI companies can genuinely be trusted with users' data, values, and daily workflows.

• AI safety as a founding mission: Anthropic was founded by former OpenAI researchers with constitutional AI and long-term safety research at its core, making Claude's design philosophy fundamentally different from OpenAI's commercial trajectory.

• A privacy-first posture: Anthropic's public refusal to partner with the DoD for surveillance or autonomous weapons applications signals a structural commitment to responsible AI deployment that resonates with privacy-conscious users and enterprise buyers alike.

• Superior long-form reasoning: Claude is consistently rated highly for nuanced, thoughtful, well-structured responses, particularly for complex writing, deep analysis, and reasoning tasks where context and accuracy matter most.

• A rapidly maturing platform: With paid subscribers more than doubling year-to-date and free users up 60%+ since January 2026, Claude's ecosystem is scaling fast, and record daily sign-ups signal that momentum is accelerating rather than plateauing.

• The trust premium: In an industry where trust has become the scarcest commodity, Anthropic's willingness to absorb serious political retaliation rather than compromise on its principles has created a brand premium that no advertising budget can replicate.

The Fundamental Question: Can OpenAI rebuild consumer trust fast enough to stem the migration — before Claude's momentum becomes self-sustaining?

The Bigger Picture: Who Controls the Most Powerful AI Systems Ever Built?

Strip Away the Download Numbers — and This Is a Governance Question of Historic Proportions:

Strip away the download charts, the App Store rankings, and the one-star review surges — and what the events of February 28, 2026 represent is a fundamental question about control. Who gets to decide how AI is deployed, what it is used for, and where the limits lie? The companies that build it, the governments that fund and regulate it, or the users who ultimately choose to adopt or reject it?

Traditional technology adoption has never posed this question so sharply. An AI model is not just a productivity tool — it is a concentration of decision-making power that will influence everything from military capability to economic competitiveness to civil liberties. The choices being made today about how AI is governed and deployed will define what artificial intelligence can and cannot do for the next two decades.

The question of whether governments, markets, or corporations ultimately control the most powerful AI systems ever built will define the next decade of technology policy — and the standoff already underway between Anthropic, OpenAI, and the U.S. government is forcing that question into the open in a way that no policy paper or congressional hearing ever could.

And for the millions of users who chose to uninstall one app and download another in a single weekend, the answer they are giving is already clear.

What Happens Next: An Industry, a Platform, and a Public Trust Crisis at a Crossroads:

Strategic Implications for Users, Enterprise Leaders, and Policymakers: What Everyone Should Be Doing Now:

The situation continues to evolve at a pace that makes certainty impossible, but the strategic imperatives are becoming clearer with each passing day. The decisions being made right now by users, enterprise procurement teams, and policymakers will define the competitive and ethical landscape of AI for the next decade.

For enterprise leaders evaluating their own AI platform strategies, the lesson from this moment is that AI vendor selection is no longer a back-office technology decision. It is a strategic, financial, and reputational choice — one that carries real consequences for data privacy exposure, regulatory risk, vendor concentration, and long-term trust with customers and employees alike.

And for citizens — whose data, communications, and economic futures will be shaped by the AI systems they choose to use — the outcome of this consumer revolt is anything but abstract. This is a defining moment for how AI will be governed, built, and deployed in the world's most powerful economy. The rest of the world is watching closely.

Key Takeaways: Everything You Need to Know:

• ChatGPT uninstalls surged 295% day over day on February 28, nearly 33 times their typical 9% daily swing, while 1-star reviews exploded 775% and 5-star reviews dropped 50% over the same 48-hour window.

• Anthropic's principled refusal of the DoD deal drove Claude to the No. 1 free iPhone app spot in the U.S. — a jump of 20+ ranks — with downloads up 51% day-over-day and record sign-ups confirmed by Anthropic directly.

• Claude surpassed ChatGPT in total daily U.S. downloads for the first time ever on Saturday, February 28, according to Appfigures — and simultaneously hit No. 1 in six additional countries including Canada and Germany.

• The political consequences for Anthropic were severe: President Trump ordered federal agencies to stop using Anthropic's products, and Defense Secretary Hegseth announced plans to designate the company a supply-chain threat.

• OpenAI signed its own Pentagon agreement shortly after, claiming safeguards are included — but critics and privacy advocates argue the fundamental ethical questions around AI surveillance and autonomous weapons remain unresolved.

• The core issue transcends any single app or download chart: who controls how the world's most powerful AI systems are deployed — and whether users, governments, or corporations will have the final say — is the defining governance question of this decade.

© 2026 AI Consumer Intelligence Brief. For strategic and informational purposes only. Not financial or legal advice.