Can an AI Company Say "No" to the President? The Legal War Between Anthropic and the White House:

Anthropic vs. The Pentagon: The AI Military Contract Battle That Shook the Industry:

The Biggest AI Story of 2026 That You Need to Understand:

Not every major moment in AI is measured in product launches or benchmark scores -- some are measured in the decisions companies make when governments come knocking. In February 2026, one such moment arrived when Anthropic CEO Dario Amodei and Defense Secretary Pete Hegseth reached what can only be described as a bitter stalemate over the terms of a contract governing how the U.S. military could use Anthropic's AI tools.

What followed was one of the most consequential -- and revealing -- standoffs in the short history of artificial intelligence. It exposed the fault lines between Silicon Valley's stated values and Washington's appetite for AI-powered military capability. It forced employees at rival companies to take sides. It triggered a political backlash at the highest levels of the U.S. government. And when it was over, the competitive landscape of the AI industry looked fundamentally different.

Here is the full story of how it unfolded, what it means, and why the implications stretch far beyond any single contract negotiation.

The Hard Line: What Anthropic Refused to Allow:

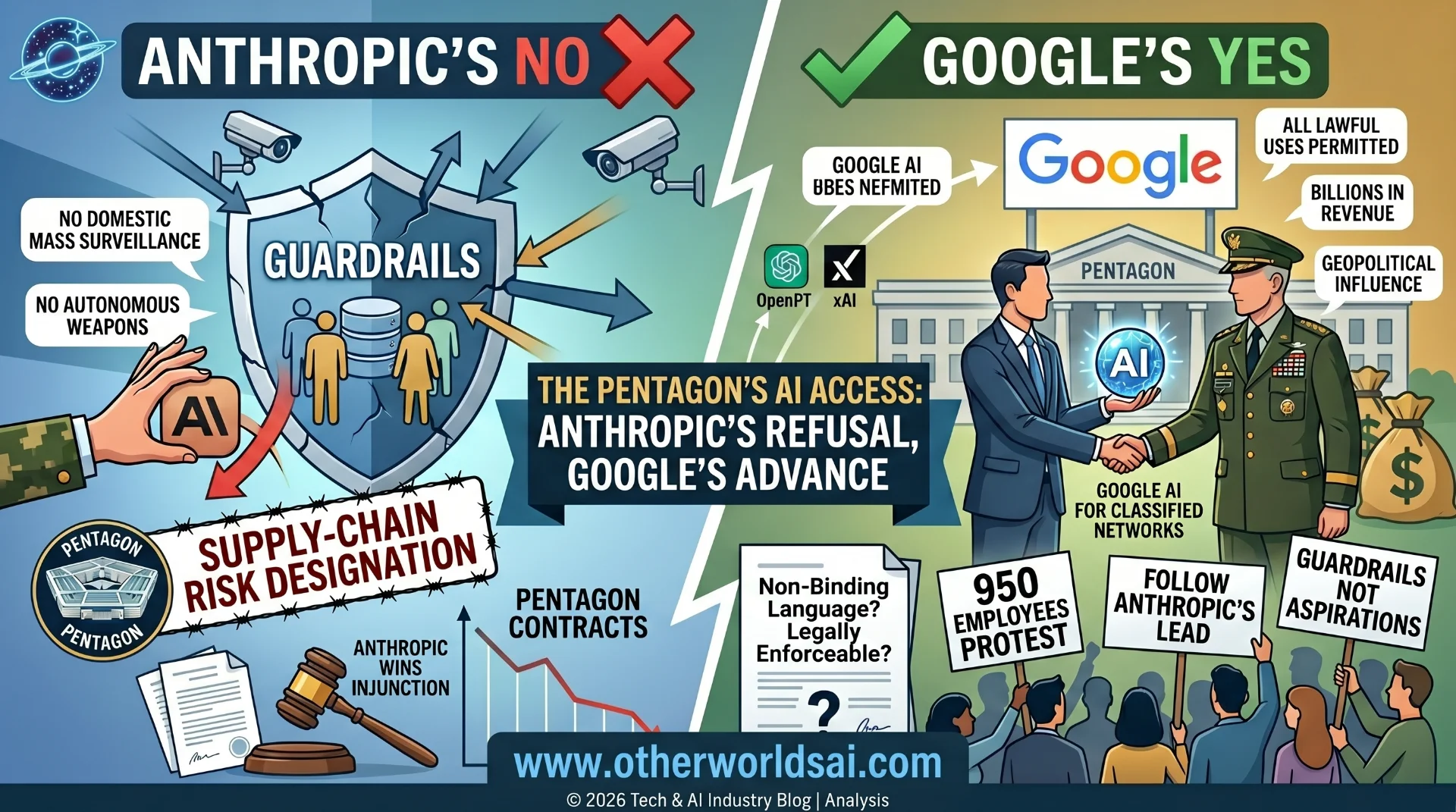

Anthropic walked into the renegotiation with a position that was both principled and, in the context of Washington politics, incendiary. The company drew a clear boundary: its AI would not be used for mass surveillance of American citizens, and it would not be used to power autonomous weapons systems capable of attacking without direct human oversight. These were not negotiating positions -- they were stated limits the company said it would not cross.

The Pentagon saw it differently. The Department of Defense -- which the Trump administration has taken to calling the Department of War -- argued that it should be permitted to access Anthropic's models for any "lawful use." Government representatives bristled at the notion that a private company could dictate terms to the U.S. military, and the negotiations quickly turned adversarial.

Amodei refused to move. In a public statement addressing the standoff, he wrote: "Anthropic understands that the Department of War, not private companies, makes military decisions. We have never raised objections to particular military operations nor attempted to limit use of our technology in an ad hoc manner. However, in a narrow set of cases, we believe AI can undermine, rather than defend, democratic values."

It was a carefully worded but unmistakably firm response -- one that drew a line between supporting the military and enabling what Anthropic viewed as genuinely dangerous applications of AI.

The Deadline, the Fallout, and Trump's All-Caps Response:

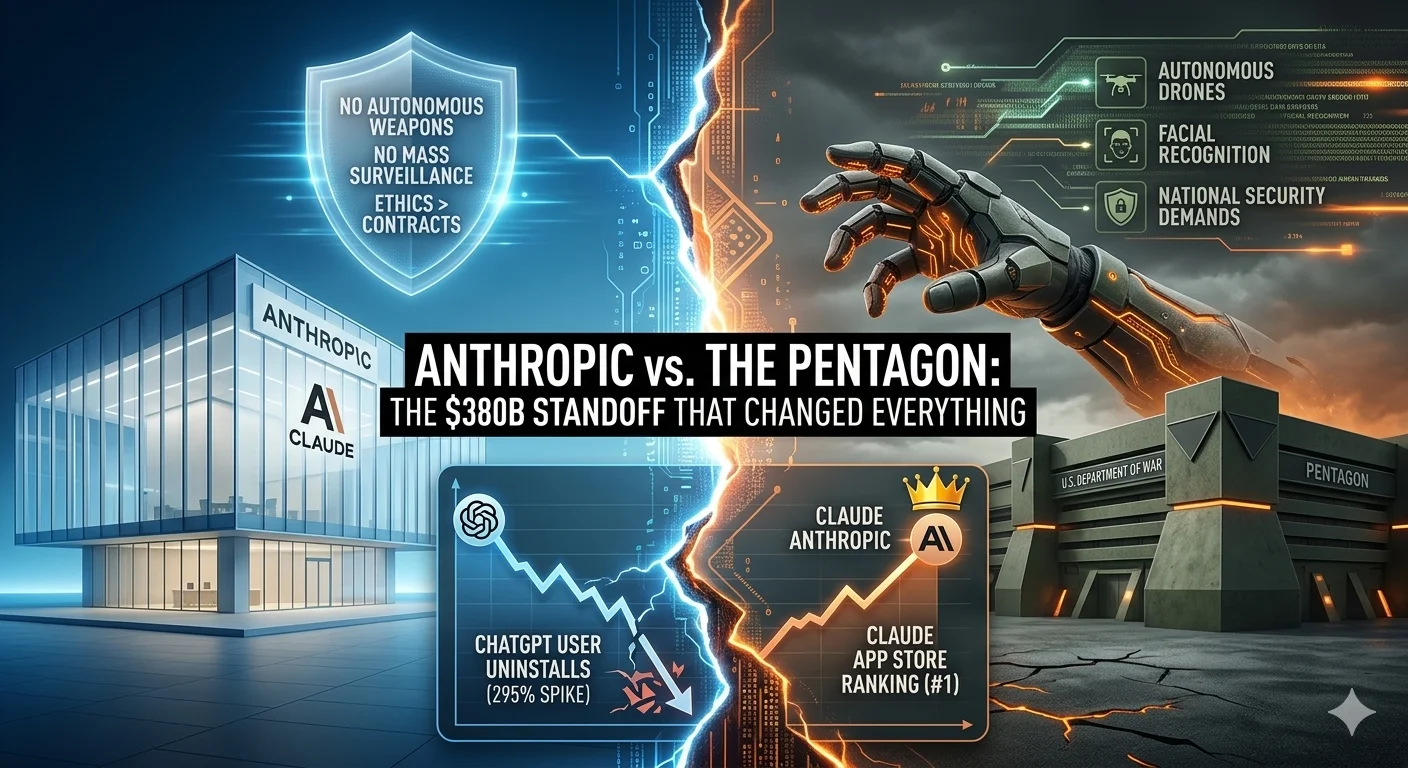

The Pentagon issued Anthropic a hard deadline to agree to its contract terms. Rather than capitulate, Anthropic let the deadline pass. The political consequences were swift and severe. President Donald Trump directed federal agencies to begin phasing out their use of Anthropic tools over a six-month transition period and lashed out at the company -- valued at $380 billion -- in an all-caps social media post, calling it a "radical left, woke company."

The retaliation escalated beyond political rhetoric. The Pentagon moved to formally declare Anthropic a "supply-chain risk" -- a designation that is typically reserved for foreign adversaries, and which would prevent any company doing business with the U.S. military from also working with Anthropic. It was an extraordinary move against a domestic AI company, and one with potentially sweeping commercial consequences. Anthropic has since filed a lawsuit to challenge the designation.

Meanwhile, something remarkable was happening across the rest of the industry. Hundreds of employees at both Google and OpenAI signed an open letter urging their own leadership to respect Amodei's red lines -- to refuse to budge on autonomous weapons or domestic surveillance, and to stand with Anthropic's position rather than exploit the opening its standoff with the Pentagon had created.

The Betrayal: How OpenAI Broke Ranks:

What happened next shocked the tech community. OpenAI -- widely expected by industry observers to maintain the same ethical guardrails as Anthropic -- announced that it had reached an agreement allowing its models to be deployed in classified military settings. Earlier reports had indicated that OpenAI would hold to the same red lines as Anthropic. Instead, it moved in the opposite direction entirely.

The reaction inside OpenAI was immediate. Hardware executive Caitlin Kalinowski resigned in direct response to the deal, stating publicly that it had been "rushed without the guardrails defined." Her departure was a signal that the decision was not without internal dissent -- and that the speed and opacity of the agreement troubled people close to the process.

OpenAI, for its part, defended the deal. The company told reporters that its agreement "makes clear its redlines: no autonomous weapons and no autonomous surveillance." But the framing did little to reassure observers who felt the company had undermined a collective stand at exactly the moment it mattered most.

The Public Verdict: Claude Goes to Number One:

If the tech industry was divided over OpenAI's decision, the public was not. On the day after OpenAI announced its military deal, ChatGPT uninstalls jumped 295% day-over-day -- one of the most dramatic single-day user reactions ever recorded against a major AI platform. In the same window, Anthropic's Claude shot to the number one spot in the App Store.

The numbers told a story that no press release could spin. Consumers, at least in that moment, were paying attention -- and they were voting with their phones. Anthropic's willingness to absorb significant political and financial punishment rather than cross what it viewed as ethical lines resonated with a public that has grown increasingly wary of AI being deployed in ways that feel unaccountable, opaque, or dangerous.

Whether that consumer sentiment translates into lasting loyalty, or fades as the news cycle moves on, remains one of the more interesting questions hanging over both companies heading into the rest of 2026.

Why This Battle Will Echo for Years:

The Anthropic-Pentagon standoff is not just a corporate drama or a political footnote -- it is a preview of a conflict that the AI industry will face repeatedly and at increasing scale. As AI models become more capable, governments around the world will push harder for access to the most powerful tools available, and the question of what conditions -- if any -- AI companies can place on that access will become one of the defining legal and ethical debates of the decade.

Amodei's position established something important: that an AI company can say no to a government, absorb the consequences, and survive. Whether future companies will have the institutional resolve, the financial runway, or the public support to do the same is far less certain. The Pentagon's willingness to deploy the "supply-chain risk" designation against a domestic company -- a tool typically reserved for foreign adversaries like Huawei -- signals that the pressure governments are willing to apply is significant, and that the costs of resistance are real.

The decisions being made right now about how AI is deployed in war, surveillance, and national security will not be easily reversed. The contracts being signed, the red lines being drawn or abandoned, and the precedents being set in boardrooms and courtrooms and government offices in 2026 will shape the relationship between artificial intelligence and state power for a generation.

As this saga continues to unfold, the stakes could not be higher.