The Defiance of Dario: Anthropic’s CEO Refuses Pentagon Demands as Deadline Looms:

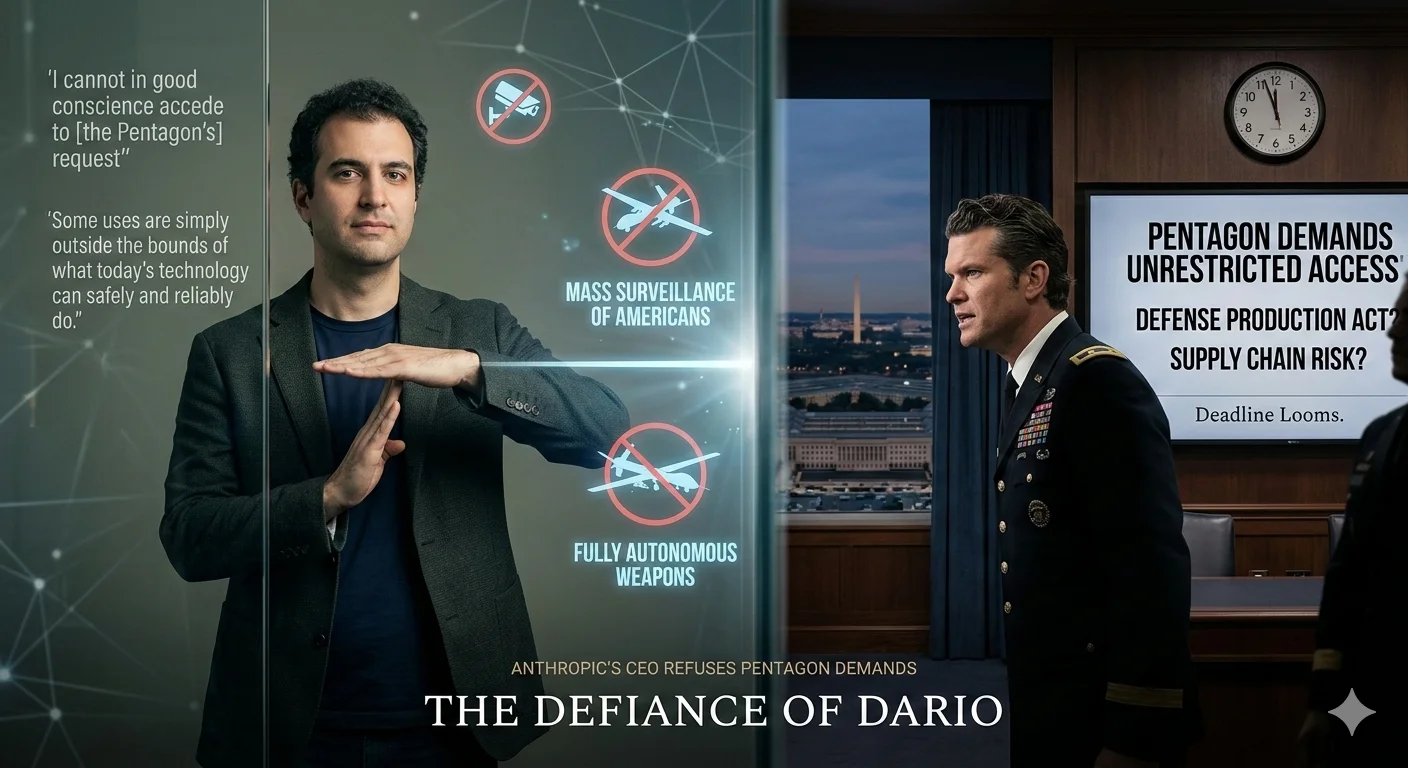

In a rare moment of corporate defiance against federal pressure, Anthropic CEO Dario Amodei declared Thursday that he "cannot in good conscience accede to [the Pentagon's] request" for unrestricted access to the company's frontier AI systems. As the only frontier AI lab with classified-ready systems deployed for active military use, Anthropic sits at the center of a confrontation that is reshaping the boundaries between private AI development and national defense.

Anthropic's position carries extraordinary weight in 2026, at a moment when every frontier AI lab is under mounting pressure to align with government priorities. Dario Amodei's refusal is not a fringe objection—it is a carefully reasoned stand from the CEO of one of the world's most consequential AI companies, signaling a shift in how tech giants interact with the state.

The Pentagon's Demands: Unrestricted Access to a Frontier AI System:

The Department of Defense's position is straightforward, if deeply controversial. It believes it should have the authority to use Anthropic's Claude model for all lawful purposes—without a private company drawing ethical boundaries around those use cases. Defense Secretary Pete Hegseth has framed this as a matter of national sovereignty, arguing that a corporation simply should not have the power to dictate how the military leverages its contracted AI tools.

Amodei responded with surgical precision, identifying exactly two scenarios where Anthropic refuses to yield regardless of political or financial pressure. The first is mass surveillance of American citizens—using Claude to monitor the domestic population at scale. The second is fully autonomous weapons systems operating with no human in the decision loop—a capability he argues today's technology cannot safely or reliably deliver. As he wrote plainly: "Some uses are simply outside the bounds of what today's technology can safely and reliably do."

The Contradiction: A Security Risk and a National Asset at Once:

The Pentagon's pressure campaign has taken a two-pronged approach that Amodei himself was quick to expose as a glaring internal contradiction. On one hand, the Department has threatened to label Anthropic a supply chain risk—a designation historically reserved for foreign adversaries and hostile state actors, not American AI companies building tools actively used by U.S. warfighters.

On the other hand, the Department has simultaneously threatened to invoke the Defense Production Act, which would give the President authority to conscript Anthropic's technology into national defense service—treating Claude not as a liability, but as an irreplaceable national asset. Amodei captured the absurdity of both threats in one devastating line: "One labels us a security risk; the other labels Claude as essential to national security."

The Offer: A Graceful Exit Over a Forced March:

Rather than escalating into open confrontation, Amodei struck a measured, statesmanlike tone throughout his statement. He acknowledged that it is entirely the Department's right to partner with contractors more aligned with its operational vision, while making Anthropic's own preference unmistakably clear: "Our strong preference is to continue to serve the Department and our warfighters—with our two requested safeguards in place."

Should the Pentagon choose to walk away, Amodei pledged full cooperation on the transition, stating: "We will work to enable a smooth transition to another provider, avoiding any disruption to ongoing military planning, operations, or other critical missions." The message beneath the diplomacy was simple, calm, and firm: the two sides can part ways without acrimony.

The Governance Vacuum: Why AI Regulation is Still a Trailing Indicator:

Despite the extraordinary capability of frontier AI systems in 2026, the question of who governs their use, and how, remains deeply unresolved. Governance frameworks are a trailing indicator of capability: the technology moves faster than the rules designed to contain it. That leaves private companies like Anthropic to fill the vacuum with their own ethical frameworks, whether governments like it or not.

The primary bottleneck is not the models themselves, but the absence of clear federal frameworks governing military AI use. Without standardized policies covering autonomous weapons authorization, civilian surveillance boundaries, and accountability when AI systems cause harm, private companies will continue to set the rules by default. Anthropic's two red lines are, in effect, a governance framework the U.S. government has not yet built for itself.

The Bigger Picture: What This Moment Means for the AI Industry:

One of the most significant aspects of this standoff is what it signals to every other frontier AI lab watching from the sidelines. The Pentagon reportedly has xAI waiting in the wings as a potential replacement—suggesting that Anthropic's exit would not leave the military without frontier AI access for long. But that raises an uncomfortable question about whether the next provider will apply the same level of ethical scrutiny, or whether the race to secure lucrative defense contracts will quietly erode the safeguards that responsible AI development depends on.

Amodei's stand is more than a business negotiation. In a moment when autonomous weapons, mass surveillance, and the unchecked deployment of AI are no longer hypothetical concerns, one CEO just reminded the world—and the U.S. government—that not everything about artificial intelligence is up for sale.

Strategic Takeaways: The Realities of AI Power in 2026:

For enterprise leaders, policymakers, and AI developers watching this confrontation unfold, the Anthropic-Pentagon standoff carries implications far beyond a single contract dispute. It signals the arrival of a new era—one where the ethical boundaries of AI deployment are no longer theoretical talking points, but live, contested battlegrounds with real financial and legal consequences.

The outcome of this standoff will not just determine Anthropic's future with the Pentagon.

It may well determine who gets to define the soul of AI governance for the decade ahead—and whether the companies building the most powerful AI systems in history will be allowed to draw any lines at all.