Google's AI Creative Suite Expands: Opal Gets Automated Workflows While ProducerAI Joins Google Labs:

From Wyclef Jean to You: Google’s Lyria 3 is Bringing Grammy-Level AI Music to Your Phone.

Google Just Made App Development as Easy as Sending a Text Message. You Don't Need a Developer Anymore: How Google Opal is Changing the Creator Economy.

Google is doubling down on AI-powered creativity tools with two major announcements that could reshape how everyday users build apps and create music — and the timing couldn't be more strategic as the tech giant races to make generative AI accessible to non-technical creators.

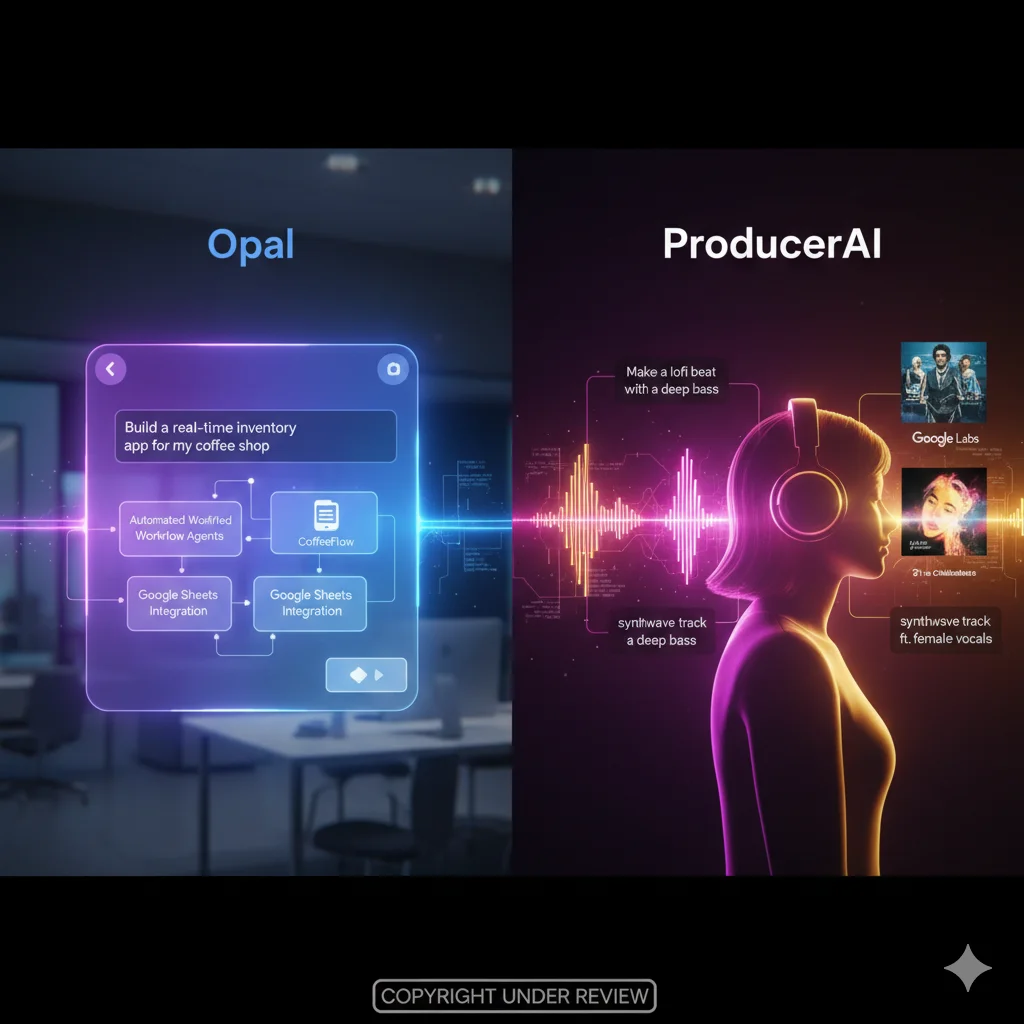

On Tuesday, Google announced significant updates to its vibe-coding app Opal, introducing automated workflow agents that let users build complex mini-apps using nothing but text prompts. Simultaneously, the company revealed that ProducerAI — a generative AI music platform backed by The Chainsmokers — is joining Google Labs, bringing advanced music generation capabilities powered by Google DeepMind's Lyria 3 model directly to everyday creators.

These announcements signal Google's aggressive push into the no-code and low-code application development space, as well as the increasingly controversial world of AI-generated music. With competitors like Lovable, Replit, and Suno gaining traction, and ongoing legal battles over AI training data threatening the entire generative AI industry, Google's moves represent both immense opportunity and significant risk.

Google Opal's New Automated Workflows: AI Agents That Build Apps for You:

Google Opal, the company's vibe-coding platform that lets anyone create mini web apps without writing code, just got significantly more powerful with the introduction of automated workflow agents. The new feature uses the Gemini 3 Flash model — Google's fast, efficient large language model optimized for real-time tasks — to automatically plan, execute, and manage complex multi-step workflows based on simple text prompts.

Here's how it works in practice: instead of manually configuring every step of an application workflow, users can now simply describe what they want in natural language, and Opal's AI agent will automatically select the appropriate tools, create the necessary data structures, and execute tasks autonomously. For example, if you're building a simple e-commerce app, the AI agent can automatically use Google Sheets to maintain a persistent shopping list across user sessions, handle inventory tracking, or manage order processing — all without the user writing a single line of code.

The agents are natively interactive, meaning they can dynamically request additional information from users when needed or present choices to help determine next steps. This conversational approach to app development dramatically lowers the barrier to entry for non-technical users who have ideas for applications but lack the programming knowledge to build them.
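To make the idea concrete, here is a minimal sketch of an agent that picks tools based on a text prompt and asks the user for any missing input. It is not Opal's implementation or API, which Google has not published in this announcement. The `Tool` and `Agent` classes, the keyword-matching "planner", and the in-memory `sheet` list (standing in for a spreadsheet) are all hypothetical and exist only for illustration.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Tool:
    """A hypothetical tool the agent can choose to run."""
    name: str
    keywords: tuple[str, ...]   # words in the prompt that trigger this tool
    required: tuple[str, ...]   # inputs the tool needs before it can run
    run: Callable[[dict], str]


@dataclass
class Agent:
    """Toy agent: plans by keyword match, asks the user for missing inputs."""
    tools: list[Tool]
    ask_user: Callable[[str], str]  # callback used to request more information

    def plan(self, prompt: str) -> list[Tool]:
        text = prompt.lower()
        return [t for t in self.tools if any(k in text for k in t.keywords)]

    def execute(self, prompt: str, context: dict) -> list[str]:
        results = []
        for tool in self.plan(prompt):
            for key in tool.required:
                if key not in context:
                    # Interactive step: pause and ask instead of failing.
                    context[key] = self.ask_user(f"Please provide: {key}")
            results.append(tool.run(context))
        return results


# In-memory stand-in for a persistent spreadsheet (an assumption for the demo).
sheet: list[str] = []


def add_to_list(ctx: dict) -> str:
    sheet.append(ctx["item"])
    return f"added {ctx['item']}"


def show_list(ctx: dict) -> str:
    return ", ".join(sheet)


tools = [
    Tool("add_item", ("add", "buy"), ("item",), add_to_list),
    Tool("show_list", ("show", "list"), (), show_list),
]

# The lambda simulates a user answering the agent's follow-up question.
agent = Agent(tools, ask_user=lambda question: "milk")
results = agent.execute("Add something and show my shopping list", {})
print(results)
```

A real agent would use a language model rather than keyword matching to plan, but the shape is the same: choose tools, fill in missing inputs by asking the user, then run each step.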

"With this addition, users without technical knowledge could build complex workflows within their apps," Google claims in its announcement. If the promise holds true, Opal could democratize app development in ways that previous generations of low-code tools never quite achieved — by making the AI agent itself the developer, with the user acting as product manager and creative director.

The Rapid Global Expansion of Google Opal:

Google Opal's journey from launch to global platform has been remarkably fast, reflecting both the company's confidence in the product and the intense competitive pressure in the no-code AI app builder space.

Opal was first introduced to U.S. users in July 2025, positioning the tool as a way for anyone to create mini web apps or remix existing applications using natural language descriptions and visual editing. The initial reception was strong enough that Google quickly accelerated its international rollout.

In October 2025, just three months after launch, the company expanded Opal to users in 15 additional countries, including major tech markets like Canada, India, Japan, South Korea, Vietnam, Indonesia, Brazil, and Singapore. A month later, in November 2025, Google made Opal available in over 160 countries worldwide, an aggressive expansion that signals the company's ambition to make Opal the dominant platform for AI-powered app creation before competitors can gain a foothold.

In December 2025, Google integrated Opal directly into the Gemini web app, allowing users to create custom applications through a visual editor without leaving the Gemini interface. This integration makes Opal accessible to the millions of users already interacting with Gemini for other AI-powered tasks, creating a seamless experience from ideation to app development to deployment.

The No-Code AI App Builder Wars: Google Opal vs. the Competition:

Google is far from alone in recognizing the massive market opportunity for AI-powered no-code and low-code application development platforms. A growing cohort of well-funded startups is racing to capture market share in what could become a multibillion-dollar category.

Lovable and Replit are among the most prominent competitors, both offering platforms that let users build functional web applications using natural language prompts and conversational AI interfaces. Lovable has gained particular traction for its ability to generate production-ready code that developers can easily customize and deploy, while Replit's strength lies in its integrated development environment and collaborative coding features.

But the competitive landscape extends well beyond these two players. Wabi, founded by Replika creator Eugenia Kuyda, is building AI-powered tools for creative app development with a focus on personalization and emotional intelligence. Emergent, backed by SoftBank and Lightspeed Venture Partners, is developing enterprise-focused no-code AI platforms. Rocket.new, backed by Accel, is pursuing a developer-first approach to AI-assisted application building.

What sets Google Opal apart is its deep integration with the broader Google ecosystem — seamless access to Google Sheets, Google Cloud services, and now the Gemini AI model family — combined with Google's unmatched scale and distribution. If Opal can deliver on its promise of truly accessible app development for non-technical users, Google's existing user base of billions gives it a decisive advantage over venture-backed startups.

ProducerAI Joins Google Labs: AI Music Generation Goes Mainstream:

In a separate but equally significant announcement, Google revealed that ProducerAI — a generative AI music platform backed by electronic music duo The Chainsmokers — is joining Google Labs and will leverage Google DeepMind's advanced Lyria 3 music generation model.

ProducerAI allows users to generate original music using natural language requests as simple as "make a lofi beat" or "create an upbeat synthwave track for a workout." The platform uses Lyria 3, Google DeepMind's latest music generation model, which can convert text descriptions and even image inputs into high-quality audio outputs complete with melody, harmony, rhythm, and production.

What makes ProducerAI different from other AI music tools, according to Google Labs' senior director of Product Management Elias Roman, is that it's designed to feel like a "collaboration partner" rather than just a generation tool. Users can iteratively refine outputs, experiment with genre blends, and maintain creative control throughout the music creation process.

"ProducerAI has allowed me to create in new ways," Roman wrote in the announcement blog post. "I've experimented with new genre blends, expressed how I feel with personalized birthday songs for my loved ones, and made custom workout soundtracks for myself and friends."

Google's Lyria 3 Model: The Technology Behind AI Music Generation:

Google announced last week that Lyria 3 capabilities would be integrated directly into the flagship Gemini app, but ProducerAI offers a more specialized interface optimized for music creation workflows. The Lyria 3 model is the product of years of research by Google DeepMind into audio generation, music theory, and the creative processes that human musicians use when composing.

The model can handle remarkably nuanced requests, understanding genre conventions, emotional tones, instrumentation preferences, tempo specifications, and even stylistic references to existing artists or musical eras. It can generate complete arrangements with multiple instruments, vocal melodies, backing vocals, and production effects — essentially functioning as a full virtual music production studio.

Three-time Grammy-winning rapper Wyclef Jean has already used the Lyria 3 model and Google's Music AI Sandbox on his recent song "Back From Abu Dhabi," providing a high-profile validation of the technology's creative potential.

"This is not just a machine where you're clicking a button a hundred times, and then you're done," said Jeff Chang, director of Product Management at Google DeepMind, in a promotional video. "It's a careful kind of curation where you're going through and saying, 'Oh, I think that's something we can use.'"

Jean describes using the tool to experiment with adding different instruments to tracks he had already recorded. "What I want everybody to understand […] is you're in the era where the human has to be the most creative," Jean said. "There's one thing that you have over the AI: a soul. And there's one thing that AI has over you: the infinite information."

The AI Music Controversy: Copyright, Consent, and Creative Integrity:

The introduction of ProducerAI and the expansion of Google's AI music capabilities arrive amid intense controversy and legal battles over the use of copyrighted music in training AI models. The music industry has been one of the most vocal opponents of generative AI, with hundreds of prominent musicians signing open letters and pursuing legal action against AI companies.

In 2024, hundreds of musicians including Billie Eilish, Katy Perry, and Jon Bon Jovi signed an open letter calling on tech companies not to undermine human creativity with AI music generation tools. The letter argued that these tools were trained on copyrighted music without artist consent, effectively allowing AI companies to profit from stolen creative work.

The legal landscape is becoming increasingly hostile to AI companies. A cohort of music publishers recently sued the AI company Anthropic for $3 billion, claiming the company illegally downloaded more than 20,000 copyrighted songs, including sheet music, song lyrics, and musical compositions. Anthropic has already agreed to a $1.5 billion settlement with authors whose books were pirated for AI training.

The fundamental legal question remains unresolved: does training AI models on copyrighted works without permission qualify as fair use, or is it copyright infringement? One federal judge, William Alsup, ruled last year that training on copyrighted data is legal but pirating it is not, a distinction that may prove difficult to maintain in practice.

Two Sides of the AI Music Debate: Opposition vs. Embrace:

Not all musicians oppose AI music tools. A growing number of artists are embracing the technology, particularly for technical improvements rather than creative generation.

Paul McCartney famously used AI-powered noise reduction systems — the same technology that allows Zoom and FaceTime to filter out background noise on video calls — to clean up a decades-old, low-quality John Lennon demo recording. The resulting "new" Beatles track, "Now and Then," won a Grammy in 2025, demonstrating that AI can serve as a powerful restoration and enhancement tool rather than just a replacement for human creativity.

Meanwhile, AI-generated music is already topping charts. Telisha Jones, a 31-year-old from Mississippi, used the AI music platform Suno to turn her poetry into the viral R&B song "How Was I Supposed to Know," which gained massive traction on streaming platforms. Jones subsequently signed a record deal with Hallwood Media reportedly worth $3 million — proof that AI-generated music can achieve commercial success even amid the controversy.

These success stories complicate the narrative around AI music generation. If AI tools can help unknown artists break into an industry notoriously difficult to penetrate, or allow legendary musicians to complete unfinished work, are they fundamentally harmful? Or are they simply tools that can be used responsibly or irresponsibly, depending on implementation and ethical guidelines?

The Broader Implications: Google's AI-Powered Creativity Strategy:

The simultaneous announcements around Opal and ProducerAI reveal a coherent strategic vision from Google: dominate the emerging market for AI-powered creativity tools by making advanced generative AI accessible to non-technical users across multiple creative domains.

By lowering the barriers to app development and music creation, Google is potentially democratizing creative expression in unprecedented ways. Someone with an idea for an app but no coding skills can now build it using Opal. Someone with musical ideas but no production knowledge can create professional-sounding tracks using ProducerAI. In theory, this empowers millions of people who were previously excluded from these creative fields.

But there are legitimate concerns about what gets lost in this democratization. If anyone can build an app or generate a song with AI assistance, does that devalue the expertise and craft that professional developers and musicians have spent years developing? Does it flood markets with low-quality, derivative content that drowns out original creative work?

Google's answer appears to be that AI tools should amplify human creativity rather than replace it — that the best outcomes come from collaboration between human creative vision and AI technical capabilities. Whether that vision can be realized in practice, particularly given the copyright and ethical controversies surrounding AI training data, remains to be seen.

The Bottom Line: Google Bets Big on AI Creativity Tools:

Google's expansion of Opal with automated workflow agents and its integration of ProducerAI into Google Labs represent a major strategic bet on the future of AI-assisted creativity. The company is clearly positioning itself to be the platform of choice for non-technical creators who want to build applications or generate music without traditional development or production skills.

The timing is both opportune and risky. The market for no-code AI tools is exploding, but competition is fierce and well-funded. The demand for AI music generation tools is real, but legal and ethical controversies threaten the entire category. Google's scale and ecosystem integration give it advantages, but also make it a bigger target for regulators and critics.

For everyday users, the promise is compelling: create the app you've always imagined or the soundtrack you've always heard in your head, all through simple text prompts and conversational AI. For artists and developers, the threat is real: competition from AI-powered tools that can produce "good enough" alternatives at a fraction of the cost and effort.

The next chapter in this story will be written not by press releases and product launches, but by how these tools are actually used, what they enable people to create, and how the legal and ethical frameworks around AI creativity evolve.

Google is betting heavily that the future of creativity is AI-assisted. We're about to find out if it's right.