CompactifAI & HyperNova: How Multiverse Computing Is Making Compressed AI Models the New Enterprise Standard:

Introduction: Why Cloud-Dependent AI Is No Longer Enough for Business:

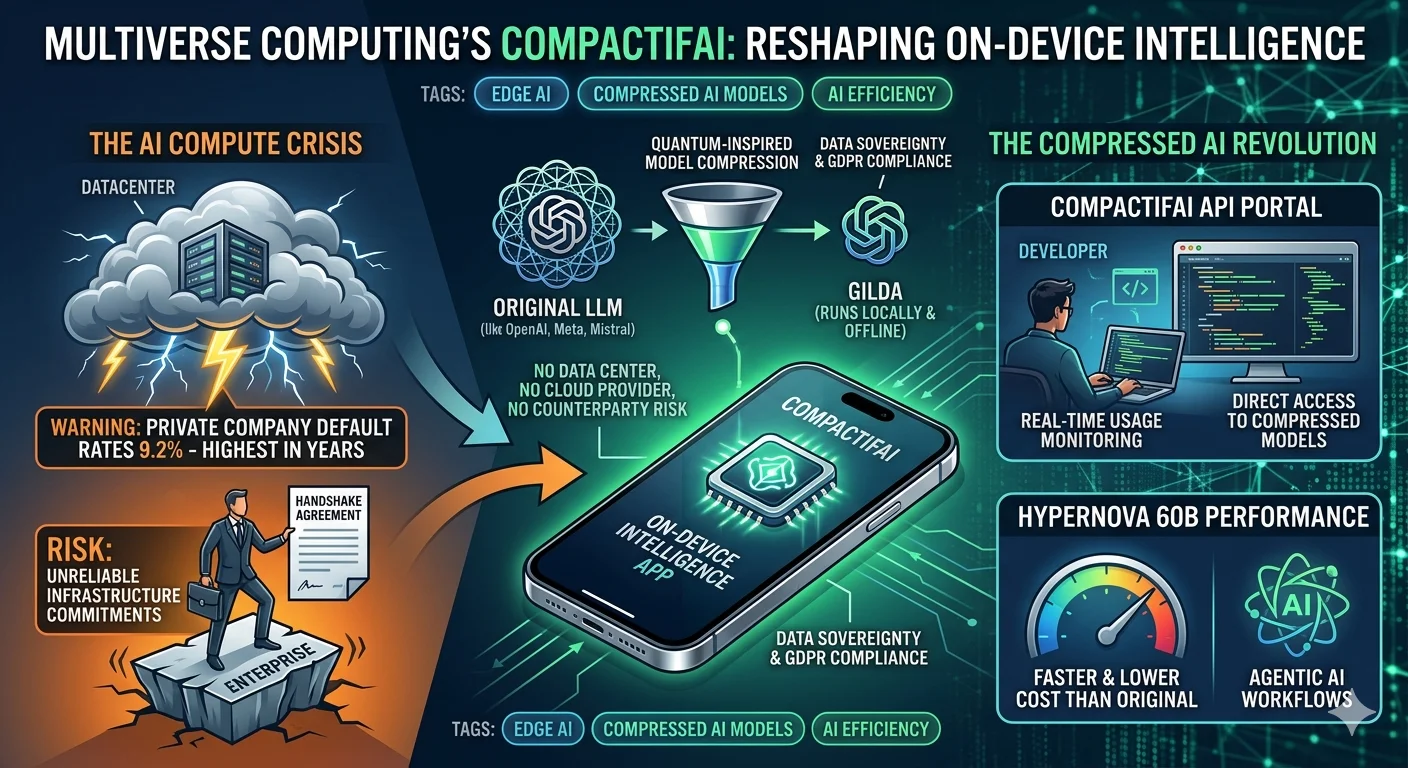

Most enterprise AI strategies are built on a foundation that is quietly cracking. The bottleneck is not intelligence — it is infrastructure. While industry giants like OpenAI and Anthropic compete for enterprise contracts through managed cloud APIs, Spanish AI innovator Multiverse Computing is advancing a fundamentally different thesis: the most powerful AI for business is AI you own, control, and run on your own terms. With the dual launch of the CompactifAI app and a self-serve API portal, Multiverse is moving compressed, on-device AI from a research curiosity to a deployable enterprise asset.

What is CompactifAI? The "Compress-and-Deploy" Breakthrough:

Announced alongside the launch of its developer API portal, CompactifAI is Multiverse Computing's flagship platform for quantum-inspired AI model compression. Unlike Retrieval-Augmented Generation (RAG), which "queries" external data at runtime, CompactifAI permanently compresses large foundation models — from OpenAI, Meta, DeepSeek, and Mistral AI — into smaller, faster versions that retain the reasoning quality enterprises require. These models can be deployed on-device, at the edge, or via API, without dependence on third-party cloud infrastructure.
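The article does not describe the compression algorithm itself. As a rough illustration of why factorizing weights shrinks a model, the sketch below counts parameters when one dense layer is replaced by two thin factors. Low-rank factorization is a simplified stand-in chosen for this example, not Multiverse's published method, and the layer shape and rank are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LowRankPlan:
    """Parameter accounting for one dense layer replaced by two thin factors.

    Illustrative stand-in only: the layer shape and rank are assumptions,
    not figures taken from CompactifAI.
    """
    rows: int
    cols: int
    rank: int

    @property
    def dense_params(self) -> int:
        # A dense weight matrix W stores rows * cols values.
        return self.rows * self.cols

    @property
    def factored_params(self) -> int:
        # W is approximated by A (rows x rank) @ B (rank x cols).
        return self.rank * (self.rows + self.cols)

    @property
    def size_ratio(self) -> float:
        # Fraction of the original parameter count that remains.
        return self.factored_params / self.dense_params


plan = LowRankPlan(rows=4096, cols=4096, rank=512)
print(f"dense:    {plan.dense_params:,} parameters")
print(f"factored: {plan.factored_params:,} parameters")
print(f"remaining fraction: {plan.size_ratio:.2f}")
```

The smaller the rank, the smaller the model, but also the coarser the approximation of the original weights. That trade-off is why retained reasoning quality is the figure to check for any compressed model.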

"The CompactifAI API portal gives developers direct access to compressed models with the transparency and control needed to run them in production." — Enrique Lizaso, CEO, Multiverse Computing

Why CompactifAI Is Redefining Enterprise AI Strategy:

The "one-size-fits-all" cloud AI model carries risks that are becoming impossible to ignore. Private company default rates have hit 9.2% — the highest in years — prompting VC firm Lux Capital to warn enterprises to get compute capacity agreements confirmed in writing.

Financial instability in the AI supply chain is real. For companies betting their workflows on rented cloud inference, this is a structural vulnerability. CompactifAI offers a different path: compressed models that run locally, offline, and independently.

Multiverse Computing's platform bridges this gap by offering:

- Total Compute Independence: Models run on your hardware, governed by your rules, with no cloud provider risk.
- On-Device Privacy: Data never leaves the device, making compliance with GDPR and sector-specific regulation straightforward.
- Zero Counterparty Risk: No dependency on external API availability, pricing changes, or vendor deprecation cycles.
- Edge-First Architecture: Deployable in drones, satellites, and industrial environments where connectivity cannot be guaranteed.

Key Features of the CompactifAI Ecosystem:

| Feature | Description |
| --- | --- |
| Quantum-Inspired Compression | Reduces LLMs from labs including OpenAI, Meta, DeepSeek, and Mistral to a fraction of their original size without quality degradation. |
| Gilda (On-Device Model) | A compressed model small enough to run locally and offline on supported mobile devices, with no internet connection required. |
| Ash Nazg Routing | Intelligent system that automatically routes queries between local inference and cloud API depending on device capability. |
| HyperNova 60B 2602 | Latest flagship compressed model built on gpt-oss-120b; delivers faster responses at lower cost than the original foundation model. |
| Self-Serve API Portal | Direct developer access to compressed models with real-time usage monitoring, with no AWS Marketplace required. |
| Agentic Coding Support | Optimized for multi-step autonomous programming workflows where speed and cost efficiency are critical. |
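The routing behavior described for Ash Nazg can be pictured with a small decision function. This is a minimal sketch under stated assumptions, not the product's actual logic: `DeviceProfile`, `choose_route`, and the 2 GB memory threshold are all hypothetical names and values introduced for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    LOCAL = "local"   # run the compressed model on the device
    CLOUD = "cloud"   # fall back to a hosted API


@dataclass(frozen=True)
class DeviceProfile:
    """Hypothetical snapshot of what the device can do right now."""
    free_memory_gb: float
    is_online: bool


def choose_route(device: DeviceProfile, model_memory_gb: float = 2.0) -> Route:
    """Prefer local inference when the device can hold the model.

    The 2.0 GB default is an assumed footprint for an on-device model,
    not a published figure.
    """
    if device.free_memory_gb >= model_memory_gb:
        return Route.LOCAL
    if device.is_online:
        return Route.CLOUD
    raise RuntimeError("device cannot run the model locally and is offline")


print(choose_route(DeviceProfile(free_memory_gb=8.0, is_online=False)))
print(choose_route(DeviceProfile(free_memory_gb=1.0, is_online=True)))
```

Checking local capability first keeps data on the device whenever possible, and the cloud path is used only as a fallback. A real router would likely weigh more signals, such as battery level or query complexity.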

Strategic Use Cases: Who is CompactifAI Built For?:

- Government & Defense: Sovereign AI deployments with strict data residency requirements and offline operational needs.
- Financial Services: Compliance-first environments where no proprietary data can transit external infrastructure.
- Advanced Manufacturing & Energy: Embedded AI for industrial sensors, smart grids, and field systems, including clients such as Bosch and Iberdrola.
- Aerospace & Autonomous Systems: AI inference on drones, satellites, and autonomous vehicles where connectivity is intermittent or absent.
- Enterprise Software Development: Agentic coding workflows powered by HyperNova 60B at lower compute cost than standard LLM APIs.

The Enterprise AI Race: Multiverse Computing vs. The Cloud Incumbents:

Multiverse Computing's focus on compressed, open-weight models is paying off where it matters most: regulated industries.

While OpenAI and Anthropic build market share through managed APIs, Multiverse is winning the trust of organizations that cannot afford to rent their intelligence. Its customer roster already exceeds 100 global enterprises, including the Bank of Canada, Bosch, and Iberdrola — a validation set that spans banking, industrial manufacturing, and energy.

- Annual Recurring Revenue Growth: Multiverse's momentum mirrors the broader market shift toward AI efficiency and sovereignty.

- Series B Funding: $215 million raised in 2025, with a rumored €500 million raise now underway at a valuation above €1.5 billion.
- The Ownership Advantage: In an era of AI agents and autonomous workflows, Multiverse is betting that the winners will own their intelligence rather than rent it.

The Next Frontier: Agentic AI on the Edge:

CompactifAI is not just about making chatbots smaller; it is the foundation for agentic AI in the physical world.

HyperNova 60B is purpose-optimized for agentic coding workflows: environments where AI autonomously completes complex, multi-step programming tasks without human intervention at each step. By delivering these capabilities at lower cost and with faster response times than the original gpt-oss-120b model it was derived from, Multiverse is making autonomous AI agents economically viable at enterprise scale.

The implications extend well beyond software development. Compressed agentic models embedded in drones, satellites, and autonomous vehicles can execute decision-making workflows without a live data connection — an architectural requirement that cloud-dependent LLMs fundamentally cannot meet.

CompactifAI vs. Cloud LLM APIs: Which is Right for Your Organization?

While both approaches offer world-class AI intelligence, they solve for fundamentally different business needs.

Cloud LLM APIs are the "Premium Managed Utility" — ready to deploy, highly polished, and easy to scale for general productivity use cases. CompactifAI is the "Sovereign Edge Platform" — it gives enterprises the tools to run powerful AI on their own infrastructure, in their own environments, under their own governance.

Head-to-Head Comparison (2026):

| Feature | CompactifAI (Multiverse) | Cloud LLM APIs (OpenAI / Anthropic) |
| --- | --- | --- |
| Core Philosophy | Sovereign AI: Compressed, owned, on-device. | Managed AI: Access powerful ready-made models. |
| Data Privacy | Total Sovereignty: Data never leaves your device. | Managed Privacy: Data transits provider cloud. |
| Connectivity Required | No: Full offline inference on supported devices. | Yes: All inference requires live API connection. |
| Model Access | Compressed Open Weights: Full model ownership. | Closed API: Interaction via endpoints only. |
| Deployment | Edge-First: Drones, satellites, mobile, on-prem. | Cloud-First: Optimized for API-based scale. |
| Cost Model | Lower inference cost; no per-token cloud charges. | Per-token pricing; scales with usage volume. |
| Primary Use Case | Regulated industries, edge environments, agentic coding. | General productivity, SaaS, rapid prototyping. |
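The cost-model row comes down to simple arithmetic: per-token pricing grows with volume, while self-hosted inference is closer to a fixed monthly cost. The sketch below estimates the break-even volume. Both dollar figures are hypothetical placeholders, not quotes from Multiverse or any cloud provider, and the function names are invented for this example.

```python
import math


def monthly_api_cost(tokens: int, usd_per_million_tokens: float) -> float:
    """Cost of a month of usage under per-token pricing."""
    return tokens / 1_000_000 * usd_per_million_tokens


def break_even_tokens(fixed_monthly_usd: float, usd_per_million_tokens: float) -> int:
    """Monthly token volume at which per-token spend equals a fixed local cost."""
    return math.ceil(fixed_monthly_usd / usd_per_million_tokens * 1_000_000)


# Hypothetical inputs: replace with your own hardware and API pricing.
FIXED_LOCAL_USD = 3000.0        # assumed monthly cost of owned hardware
API_USD_PER_MILLION = 2.0       # assumed blended per-token price

threshold = break_even_tokens(FIXED_LOCAL_USD, API_USD_PER_MILLION)
print(f"break-even volume: {threshold:,} tokens per month")
print(f"API cost at that volume: ${monthly_api_cost(threshold, API_USD_PER_MILLION):,.2f}")
```

Below the break-even volume the per-token API is cheaper; above it, the fixed-cost deployment wins. Agentic workflows tend to push token volume up quickly, which is why the comparison matters for them in particular.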

Strategic Breakdown: The Choice Is Yours:

The Case for CompactifAI:

Choose CompactifAI if your operations cannot afford cloud dependency.

If you are a defense contractor, a central bank, an energy grid operator, or an industrial manufacturer, your AI cannot go offline when a cloud provider has an outage. It cannot leak operational data across an external API. And it cannot incur unpredictable per-token costs as agentic workflows scale. CompactifAI delivers AI that functions in the conditions your business actually operates in — not idealized cloud-connected conditions.

The Case for Cloud LLM APIs:

Choose cloud APIs if your goal is general productivity at speed.

If your team needs high-level reasoning for document analysis, customer-facing assistants, marketing workflows, and multi-modal tasks across a large workforce — and your data is not sensitive enough to require on-device processing — cloud LLM APIs remain the most frictionless path. They are optimized for productivity today, rather than sovereign infrastructure tomorrow.

The "Agentic" Factor:

The most consequential difference in 2026 is how these platforms handle autonomous AI agents. Cloud LLM providers offer powerful assistant ecosystems that excel at following instructions across general tasks. CompactifAI focuses on compressed inference for agentic workflows — autonomous agents trained to operate within specific technical domains, execute multi-step tasks, and function in environments where a cloud connection is a liability rather than an asset. For organizations deploying AI in the field — literally and figuratively — this is not a minor distinction.

Conclusion: Owning the Future of Intelligence:

The era of experimental edge AI is over; the era of production-grade compressed AI has begun.

Multiverse Computing's CompactifAI platform and API portal give enterprises the tools to run powerful AI on their own terms — offline, on-device, and at lower cost than the foundation models they are derived from.

With HyperNova 60B delivering faster, lower-cost responses than its parent model, more than 100 enterprise customers already live, and a €500 million funding round reportedly underway, Multiverse is not asking whether compressed AI belongs in your infrastructure stack. It is making the case that it already does.