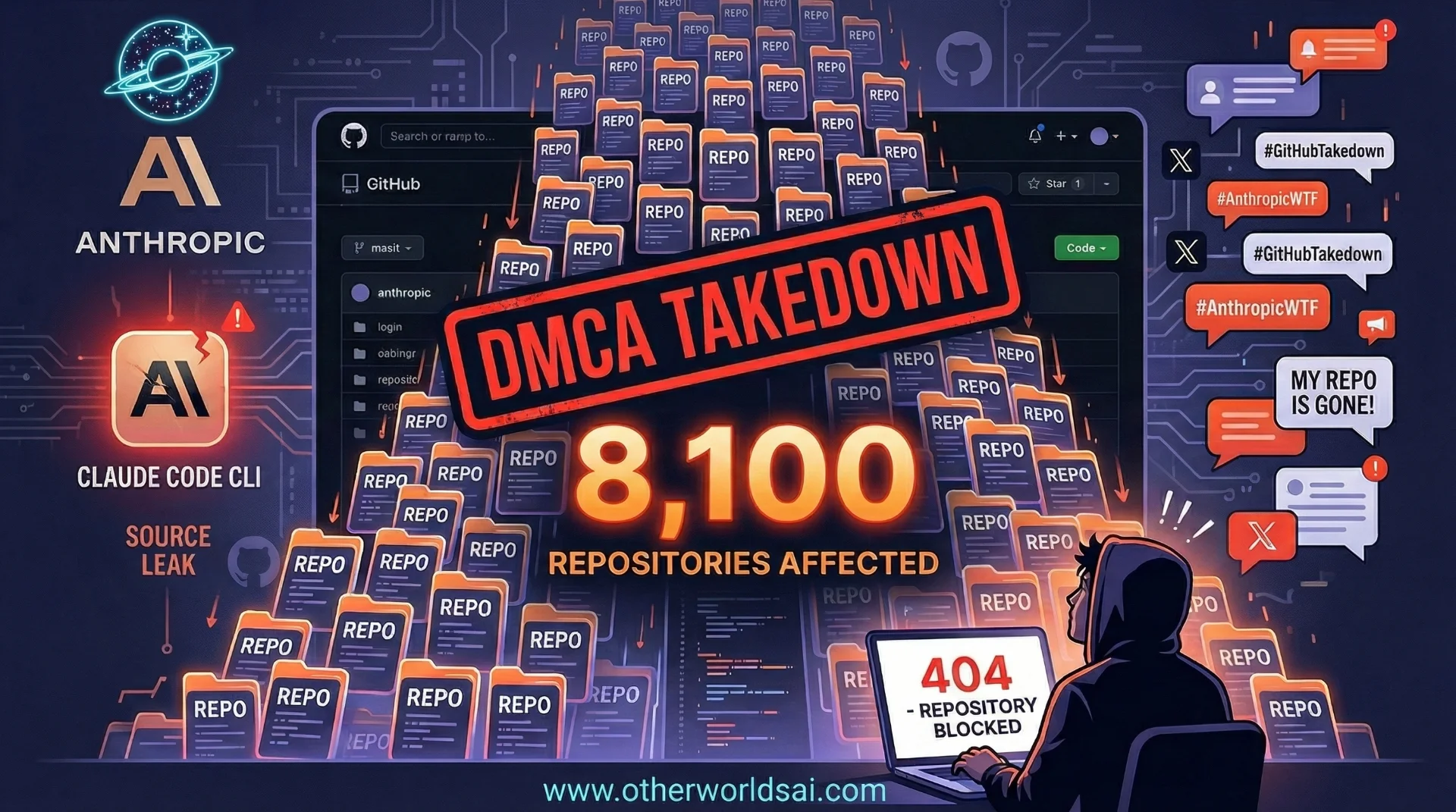

Anthropic's GitHub Takedown Disaster: How a Copyright Notice Took Down 8,100 Repositories:

The Incident Begins: A Routine Release Goes Terribly Wrong:

What started as an ordinary software update quickly spiraled into one of the most embarrassing slip-ups in Anthropic's history. On Tuesday, a software engineer made a startling discovery: Anthropic had accidentally exposed the source code for Claude Code, its category-leading command-line application, in a recent public release. The code, the internal engine behind one of the most powerful AI coding assistants on the market, was suddenly out in the open for anyone to read.

The developer community moved fast. AI enthusiasts and engineers began poring over the leaked code almost immediately, hunting for clues about how Anthropic harnesses the large language model (LLM) that powers Claude Code. Repositories sharing and discussing the code spread rapidly across GitHub, with forks multiplying by the hour.

The Takedown Notice: DMCA Enforcement Spins Out of Control:

Anthropic's legal team acted quickly — but the cure turned out to be nearly as damaging as the disease. The company issued a takedown notice under the U.S. Digital Millennium Copyright Act (DMCA), formally requesting that GitHub remove any repositories hosting the offending source code. On paper, it was the right move. In practice, it became a catastrophic overshoot.

According to GitHub's records, the notice was executed against some 8,100 repositories — a number that stunned the developer community.

Among those swept up in the mass GitHub takedown were repositories that had done nothing wrong. Legitimate forks of Anthropic's own publicly released Claude Code repository, maintained by developers who had simply forked the official public project, were suddenly blocked without warning. These were not bad actors hoarding leaked code. They were everyday developers whose work got caught in the crossfire of a poorly calibrated enforcement action.

Irate Social Media: Developers Push Back Loudly:

The backlash was swift, sharp, and very public. Within hours, angry posts flooded developer forums and tech Twitter as engineers discovered their legitimate repositories had been blocked. For many, this was not a minor inconvenience: it disrupted active projects, broke CI/CD pipelines, and stalled workflows that depended on continuous access to those repositories.

The anger was understandable, and the optics were terrible. Here was Anthropic — a company that presents itself as a thoughtful, safety-first AI lab — hammering thousands of innocent developers with a blunt legal instrument. The incident raised uncomfortable questions about whether the company's internal processes were as sophisticated as the AI systems it builds.

Boris Cherny Steps In: Damage Control and Retraction:

Anthropic moved to contain the damage once the scale of the mistake became clear. Boris Cherny, Anthropic's head of Claude Code, stepped forward publicly and said the mass takedown was accidental. He announced that Anthropic would retract most of the takedown, narrowing it to the one repository named in the notice and its 96 forks, which had actually hosted the accidentally released source code.

"The repo named in the notice was part of a fork network connected to our own public Claude Code repo, so the takedown reached more repositories than intended." — Anthropic Spokesperson

The spokesperson added in a statement: "We retracted the notice for everything except the one repo we named, and GitHub has restored access to the affected forks." The explanation made technical sense. On GitHub, a repository and all of its forks belong to a single fork network, so a takedown applied to that network can ripple outward to thousands of connected repositories. But understanding why it happened did not erase the disruption it caused.
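To make the scoping problem concrete, here is a minimal Python sketch that models a fork network as a tree and compares two takedown scopes: the named repository plus its own forks, versus every repository in the network it belongs to. The repository names and the tree are invented for illustration, and this is not a model of GitHub's actual takedown tooling; it only shows how the same starting repository yields very different blast radii depending on scope.

```python
from collections import deque

# Hypothetical fork network: each key is a repository, each value lists the
# repositories forked directly from it. All names are invented for illustration.
FORKS: dict[str, list[str]] = {
    "upstream/claude-code": ["alice/claude-code", "bob/claude-code", "leaker/leaked-src"],
    "alice/claude-code": ["carol/claude-code"],
    "bob/claude-code": [],
    "carol/claude-code": [],
    "leaker/leaked-src": ["dave/leaked-src", "erin/leaked-src"],
    "dave/leaked-src": [],
    "erin/leaked-src": [],
}


def descendants(repo: str, forks: dict[str, list[str]]) -> set[str]:
    """Return repo plus every repository forked from it, directly or transitively (BFS)."""
    seen = {repo}
    queue = deque([repo])
    while queue:
        current = queue.popleft()
        for child in forks.get(current, []):
            if child not in seen:
                seen.add(child)
                queue.append(child)
    return seen


def network_root(repo: str, forks: dict[str, list[str]]) -> str:
    """Follow parent links upward to the repository at the top of the fork network."""
    parent_of = {child: parent for parent, children in forks.items() for child in children}
    while repo in parent_of:
        repo = parent_of[repo]
    return repo


named = "leaker/leaked-src"
# Narrow scope: only the named repo and the forks made from it.
targeted = descendants(named, FORKS)
# Broad scope: everything in the fork network the named repo belongs to.
network_wide = descendants(network_root(named, FORKS), FORKS)

print(len(targeted), len(network_wide))  # 3 7
print(sorted(network_wide - targeted))   # repositories hit only by the broad scope
```

In this toy network the narrow scope touches 3 repositories while the network-wide scope touches all 7, including legitimate forks of the upstream project that never contained the leaked code.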

The IPO Factor: Why This Blunder Hits Differently:

For any tech company, accidentally knocking thousands of developer repositories offline would be embarrassing. For Anthropic, which is reportedly planning an IPO, it is far more consequential. Going public demands meticulous attention to execution, compliance, and governance, and investors, underwriters, and regulators scrutinize everything. Leaking proprietary source code, then triggering a mass copyright enforcement action that sweeps up innocent developers, is exactly the kind of operational failure that raises red flags. Leak your source code as a public company, and you had better believe a shareholder lawsuit is coming.

The incident raises serious questions that Anthropic will need to answer convincingly before any public offering. How did proprietary source code end up in a public release in the first place? What internal review processes failed to catch the error? Why did the DMCA enforcement reach 8,100 repositories instead of a targeted handful? And what liability does the company face from developers whose legitimate work was disrupted? These are not small questions. They are the kind that end up in the risk-factors section of an IPO prospectus.

Conclusion: A Human Failure at a Very Inopportune Moment:

Anthropic builds some of the world's most sophisticated AI systems — and this week, it was undone by a very human kind of mistake. Accidentally shipping source code, then overcorrecting with a blunt copyright takedown that swept up thousands of innocent developers, is a story of compounding errors. The quick response from Boris Cherny and the company's spokesperson suggests Anthropic is aware of the reputational stakes and took corrective action as fast as it could.

But with an IPO reportedly on the horizon, the window for stumbles like this is narrow. Investors and regulators will be watching closely to see whether this incident is an isolated slip — or a sign of deeper operational cracks in a company racing to grow at breakneck speed.

For now, the developers whose repositories were wrongly blocked have their code back. Whether Anthropic gets its credibility back quite as easily remains to be seen.