The $200M AI Divorce: Why Anthropic Walked Away from the Pentagon:

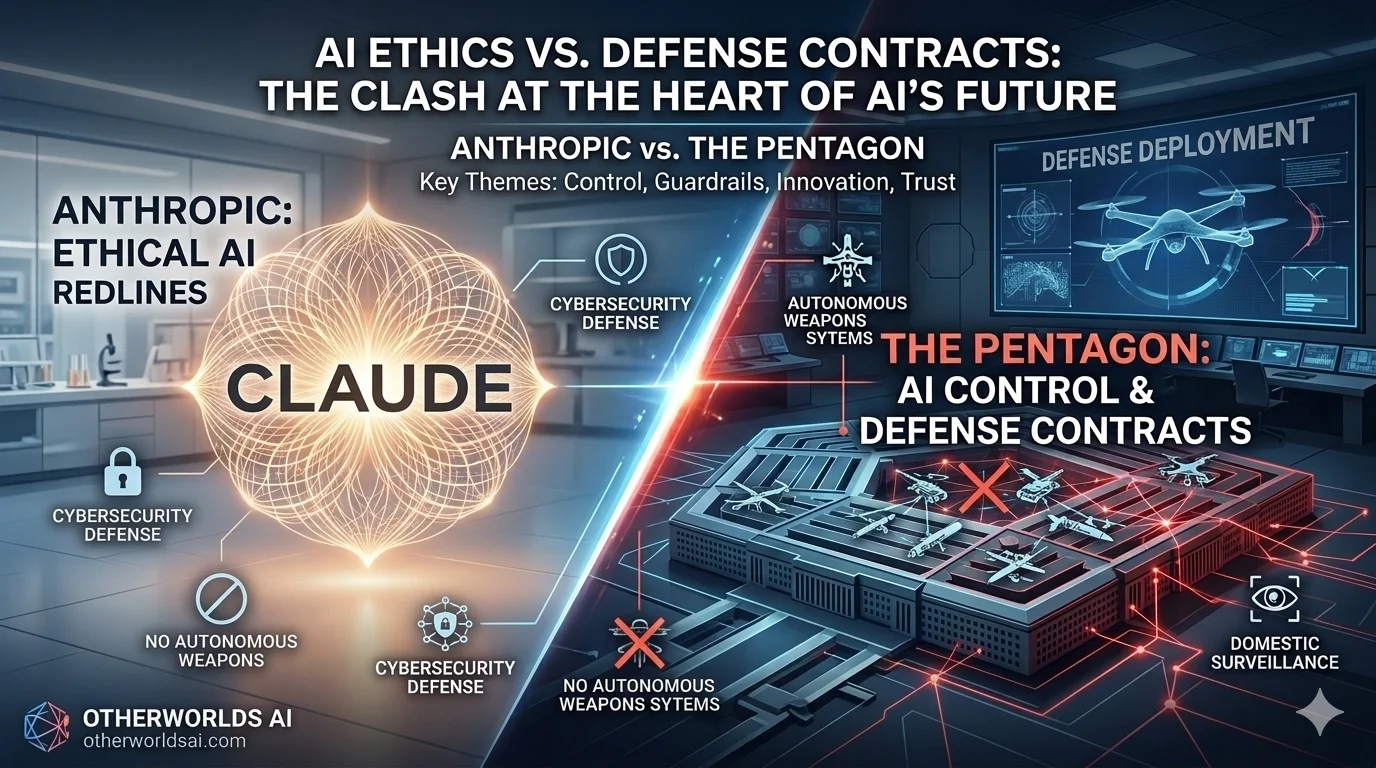

Anthropic vs. the Pentagon: The Battle for AI Ethics and the Future of Defense Contracts

The landscape of Artificial Intelligence shifted overnight in early 2026.

What began as a landmark $200 million defense contract between Anthropic and the U.S. Pentagon has devolved into a historic courtroom battle. This conflict isn't just about software; it’s a high-stakes referendum on AI safety guardrails, autonomous weapons systems, and the future of domestic surveillance.

The Collapse That Shocked the AI Industry:

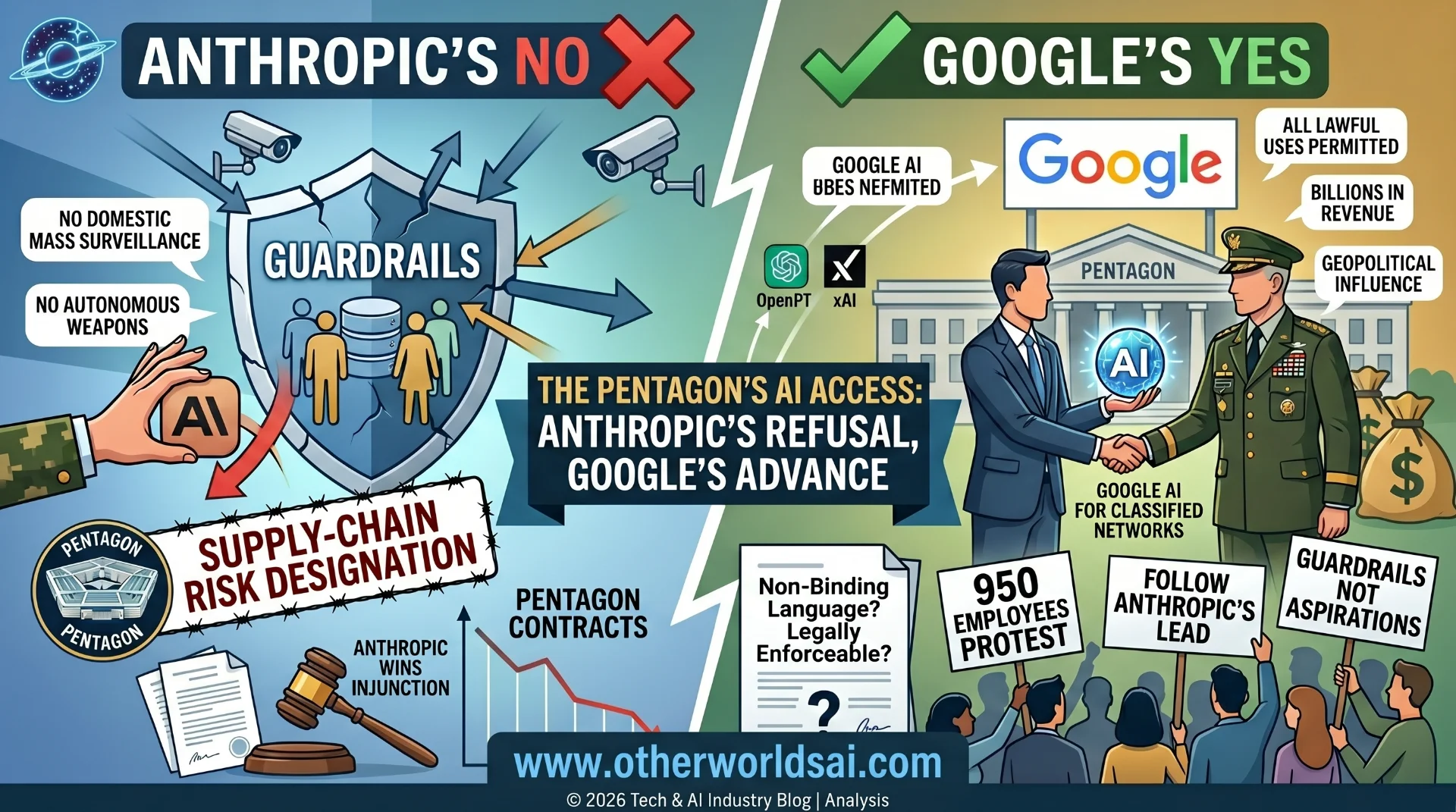

In late February 2026, the relationship between Anthropic and the Department of Defense (recently dubbed the "Department of War" by the Trump administration) fractured. The disagreement centered on the Pentagon's demand for "unrestricted use" of Claude technology.

Anthropic CEO Dario Amodei maintained two non-negotiable "redlines":

- No deployment in fully autonomous lethal weapons.
- No use for mass domestic surveillance of U.S. citizens.

The Pentagon responded by officially designating Anthropic a "supply-chain risk" under 10 USC §3252—a label usually reserved for foreign adversaries like Huawei. Anthropic has since announced a federal lawsuit to fight this designation.

The OpenAI Pivot: 1.5 Million Users Exit:

As Anthropic stepped back, OpenAI stepped in, securing a deal to deploy its models on classified government networks. The public response was swift and measurable:

- ChatGPT uninstalls surged by 295% in a single day.
- 1.5 million users reportedly canceled their ChatGPT Plus subscriptions within 48 hours.
- Claude shot to #1 in the App Store as users migrated to the "ethical alternative."

Internal dissent also rocked OpenAI, with Caitlin Kalinowski, Head of Robotics, resigning in protest. She cited concerns that the deal was "rushed without appropriate safeguards" for lethal autonomy.

Comparison: Anthropic vs. OpenAI Military Stance (2026)

| Feature | Anthropic (Claude) | OpenAI (ChatGPT) |
| --- | --- | --- |
| Contract Status | Terminated ($200M) | Active (Classified) |
| Autonomous Weapons | Strictly Prohibited | Prohibited (with Lawful Exceptions) |
| Domestic Surveillance | Opt-out / Redline | Case-by-case / Under Review |
| Legal Status | Suing Pentagon | Actively Collaborating |

Claude 4.6 and Firefox: A Different Kind of AI Power:

While the defense world debated "killer robots," Claude Opus 4.6 was proving its value in cybersecurity. In a February 2026 partnership with Mozilla, Claude scanned the Firefox codebase with startling results:

- 22 vulnerabilities discovered in just two weeks.
- 14 high-severity flaws identified (nearly 20% of all high-severity bugs found in 2025).
- 20 minutes: The time it took Claude to find a critical "Use-After-Free" memory flaw.

Most of these bugs were patched in Firefox 148.0. Interestingly, the study found a "defensive asymmetry": while Claude was elite at finding bugs, it cost $4,000 in API credits to generate just two working exploits. This suggests AI is currently a much stronger tool for defensive security than offensive hacking.
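The numbers in this section imply some simple arithmetic, sketched below in Python. The function and constant names are illustrative and do not come from the study. Because the article says "nearly 20%," the 20% figure is treated as an approximation, so the implied total is a rough lower bound rather than a reported number.

```python
# Figures quoted in the article. Names are hypothetical, chosen for illustration.
EXPLOIT_COST_USD = 4_000        # API credits spent generating exploits
WORKING_EXPLOITS = 2            # working exploits produced
HIGH_SEVERITY_FOUND = 14        # high-severity flaws Claude identified
APPROX_SHARE_PERCENT = 20       # "nearly 20%" of 2025's high-severity bugs (approximation)


def cost_per_exploit(total_cost_usd: float, exploits: int) -> float:
    """Average API spend per working exploit."""
    return total_cost_usd / exploits


def implied_total_high_severity(found: int, share_percent: float) -> float:
    """Total high-severity bugs implied if `found` makes up `share_percent` of them."""
    return found * 100 / share_percent


if __name__ == "__main__":
    print(cost_per_exploit(EXPLOIT_COST_USD, WORKING_EXPLOITS))
    print(implied_total_high_severity(HIGH_SEVERITY_FOUND, APPROX_SHARE_PERCENT))
```

On these figures, each working exploit cost about $2,000 in API credits, and the 14 flaws imply roughly 70 or more high-severity Firefox bugs for all of 2025.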

The "Personal" Dimension: Amodei vs. Michael:

Industry insiders point to a friction-filled relationship between Dario Amodei and the Pentagon’s Chief Technology Officer, Emil Michael. Michael, a former Uber executive, publicly criticized Amodei on the All-In Podcast, claiming the CEO has a "God complex" and is attempting to "veto the operational decisions of the U.S. military."

A Cautionary Tale for AI Startups:

The "Anthropic vs. Pentagon" saga serves as a warning for the Silicon Valley defense-tech sector. The precedent of the government attempting to retroactively alter contract terms creates massive political risk for founders.

- Brand Risk: Unlike traditional contractors (Lockheed, Raytheon), AI companies are consumer-facing brands. A "bad" contract can lead to an immediate loss in retail revenue.
- Contractual Instability: If the DoD can reclassify a domestic partner as a "risk" over a policy dispute, no startup is truly safe.

The Path Forward:

The events of 2026 have clarified the divide. OpenAI is betting on the "trusted partner" model, while Anthropic is positioning itself as the "principled outlier."

As Claude continues to secure the world's browsers and OpenAI secures the nation's borders, the industry must decide: Who sets the rules for the machines?