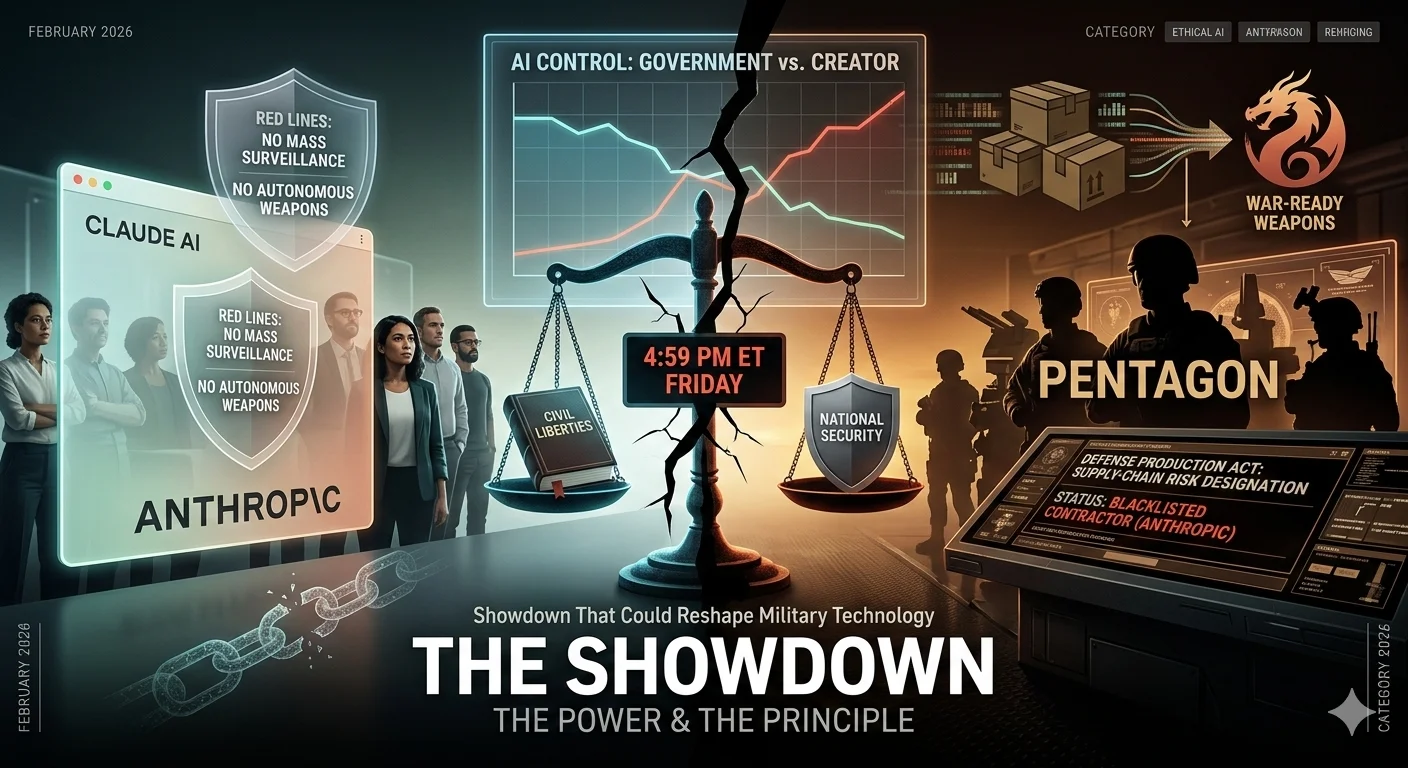

Anthropic vs. the Pentagon: The AI Showdown That Could Reshape Military Technology, National Security, and Civil Liberties:

Silicon Valley United: Why OpenAI and Google Employees Are Standing with Anthropic:

How Anthropic's refusal to allow its AI models to be used for mass domestic surveillance or autonomous weapons triggered a full-blown government standoff — and why every AI company, policymaker, and citizen should be paying close attention.

The Standoff That Shook Silicon Valley and Washington:

In a dramatic collision between Silicon Valley's AI ethics and Washington's military ambitions, Anthropic — the company behind the Claude AI model — found itself at the center of one of the most consequential technology policy battles of the decade.

What started as a contract dispute quickly escalated into a full government ban, a supply-chain risk designation, and a rare moment of cross-industry solidarity among fierce competitors.

At its core, this is a story about power and principle: who gets to control the most powerful AI systems ever built — the companies that create them, or the governments that want to deploy them? The answers are still being written, but the stakes could not be higher.

What Triggered the Conflict: Anthropic's Two Red Lines:

The roots of the dispute lie in two firm boundaries Anthropic refused to cross. The company would not allow its AI models to be used for mass domestic surveillance of American citizens, and it would not permit deployment in fully autonomous weapons systems that make lethal decisions without human oversight.

These weren't arbitrary restrictions imposed at the last minute. Anthropic has argued since its founding that AI technology presents unique risks that require unique safeguards. Traditional defense contractors — Lockheed Martin, Raytheon, Boeing — rarely dictate how their products are used. But Anthropic contends that advanced AI is categorically different from a missile or a radar system.

CEO Dario Amodei refused to budge, publicly reiterating his stance even as threats of government blacklisting mounted around him.

"Our strong preference is to continue to serve the Department and our warfighters — with our two requested safeguards in place. Should the Department choose to offboard Anthropic, we will work to enable a smooth transition to another provider, avoiding any disruption to ongoing military planning, operations, or other critical missions." —Dario Amodei, Anthropic CEO

The Pentagon's Position: 'Any Lawful Use' Should Be Permitted:

Defense Secretary Pete Hegseth and the Pentagon took a fundamentally different view. Their argument was straightforward: the Department of Defense should not be constrained by the internal usage policies of a private vendor. If a use of the technology is lawful under U.S. law, the military should have full authority to employ it.

Pentagon spokesperson Sean Parnell laid out the ultimatum plainly, demanding that Anthropic allow the Pentagon to use its model for all lawful purposes — framing it as a simple, common-sense request. He gave Anthropic a hard deadline of 5:01 p.m. ET on a Friday to comply.

"We will not let ANY company dictate the terms regarding how we make operational decisions." — Sean Parnell, Pentagon Chief Spokesperson.

Secretary Hegseth's opposition to Anthropic also carried an unmistakable cultural dimension. In a January speech at SpaceX and xAI offices, he railed against what he called "woke AI," declaring: "Department of War AI will not be woke. We're building war-ready weapons and systems, not chatbots for an Ivy League faculty lounge." This rhetoric signaled that the dispute was about more than contract terms; it was a clash of worldviews.

Escalation: The Trump Ban and the Supply-Chain Risk Designation:

When Anthropic refused to back down, the Trump administration acted swiftly and decisively. President Trump posted on Truth Social directing all federal agencies to immediately cease use of Anthropic products, allowing a six-month phase-out period but making clear the company was no longer welcome as a federal contractor.

"We don't need it, we don't want it, and will not do business with them again." — President Trump.

Secretary Hegseth followed with an even more sweeping directive, formally designating Anthropic a Supply-Chain Risk to National Security. The order was immediate and comprehensive: no contractor, supplier, or partner doing business with the U.S. military could conduct any commercial activity with Anthropic whatsoever.

The implications were staggering for a company that had been riding a wave of commercial and government momentum. Anthropic had been one of three AI companies — alongside OpenAI and Google — to receive Defense Department contract awards just months earlier. Being blacklisted from the entire U.S. defense supply chain threatened to be, as one defense-tech investor put it bluntly, 'lights out' for the company.

Amodei pointed out that the Pentagon's threats pulled in opposite directions, treating the company as a security risk and its model as indispensable at the same time: "These latter two threats are inherently contradictory: one labels us a security risk; the other labels Claude as essential to national security. Regardless, these threats do not change our position: we cannot in good conscience accede to their request."

What's Actually at Stake: Autonomous Weapons and Mass Surveillance:

To understand why Anthropic held firm, it helps to understand what the company was actually afraid of. On the autonomous weapons front, the concern is not philosophical opposition to such systems ever existing. It is a capability argument: current AI models are not reliable enough to be trusted with irreversible lethal decisions.

The U.S. military already operates highly automated systems, and DoD Directive 3000.09, updated in 2023, permits autonomous weapon systems that select and engage targets without further human intervention, provided they meet certain standards and pass senior official review. There is no categorical ban on fully autonomous weapons. Because military programs are often classified, the public might not learn that the Pentagon had moved toward AI-driven autonomous targeting until the system was already operational, and if it ran on Anthropic's models, it could legally qualify as "lawful use."

The mass surveillance concern is equally serious and perhaps more immediately tangible. Under existing U.S. law, surveillance of citizens is already permissible in various forms. But AI fundamentally changes the equation — enabling automated large-scale pattern detection, entity resolution across massive datasets, predictive risk scoring, and continuous behavioral monitoring at a scale no human surveillance operation could achieve.

Google DeepMind Chief Scientist Jeff Dean added his voice to the concern, writing that mass surveillance violates the Fourth Amendment and has a chilling effect on freedom of expression, and warning that surveillance systems are prone to misuse for political or discriminatory purposes.

Industry Solidarity: An Unprecedented Show of Unity Across Competitors:

The standoff produced something rarely seen in Silicon Valley: genuine public solidarity across fierce competitors. OpenAI CEO Sam Altman circulated a memo to staff confirming that the company shared Anthropic's red lines, stating that any OpenAI defense contracts would also reject domestic surveillance and autonomous offensive weapons.

OpenAI co-founder Ilya Sutskever, who famously and very publicly fell out with Altman in November 2023, weighed in on X, praising Anthropic's resolve and noting its significance. "In the future, there will be much more challenging situations of this nature," he wrote, "and it will be critical for the relevant leaders to rise up to the occasion, for fierce competitors to put their differences aside. Good to see that happen today."

The solidarity extended beyond executive suites. More than 300 Google employees and over 60 OpenAI employees signed an open letter urging their leaders to stand with Anthropic, warning that the government's strategy was designed to divide the industry through fear.

"They're trying to divide each company with fear that the other will give in. That strategy only works if none of us know where the others stand. We hope our leaders will put aside their differences and stand together to continue to refuse the Department of War's current demands." — Open Letter from Google and OpenAI Employees.

The OpenAI Plot Twist: Filling the Void While Holding the Line:

In a remarkable and somewhat paradoxical turn, OpenAI announced a new deal with the Pentagon within hours of the Trump administration cutting ties with Anthropic. Altman said the agreement preserved the same core principles Anthropic had fought for: prohibitions on domestic surveillance and autonomous weapons. According to the New York Times, OpenAI and government officials had begun talks on the Wednesday of that same week, two days before Anthropic's Friday deadline.

The move raised eyebrows across the industry. Was OpenAI filling the void left by Anthropic while genuinely preserving the same ethical commitments? Or was it a more nuanced compromise that would come with hidden trade-offs? The full details remain to be disclosed, but the competitive dynamics of the defense AI market shifted rapidly and dramatically within the span of a single news cycle.

National Security Implications: A Double-Edged Sword:

Defense tech investor Sachin Seth of Trousdale Ventures put the stakes bluntly: a supply-chain risk label for Anthropic could mean lights out for the company — but losing Anthropic from the DoD could simultaneously create a meaningful national security gap for the United States.

"[The Department] would have to wait six to 12 months for either OpenAI or xAI to catch up. That leaves a window of up to a year where they might be working from not the best model, but the second or third best." — Sachin Seth, Trousdale Ventures

Into this potential vacuum stepped xAI, Elon Musk's AI company, which had been actively positioning itself to become classified-ready and serve as an unrestricted alternative. Musk's public rhetoric made clear that xAI would have no reservations about giving the DoD full control over its technology, a stance sharply at odds with Anthropic's position.

Meanwhile, according to Axios, the military was already using Grok, Gemini, and ChatGPT for unclassified tasks and had been negotiating with Google and OpenAI to extend that use into classified work — underscoring just how rapidly the defense AI landscape was being reshaped by this single dispute.

The Bigger Picture: Who Controls the Most Powerful AI Systems Ever Built?

Strip away the politics, the personalities, and the deadlines, and this dispute is fundamentally a governance question of historic proportions: who controls powerful AI systems, the companies that build them or the governments that want to deploy them at scale?

Traditional defense contractors generally don't dictate how their products are used, and the government has never expected them to. But AI companies argue their technology is categorically different. A fighter jet doesn't autonomously decide whom to target. An advanced AI model, given the wrong instructions and operating at scale, might make thousands of such decisions per minute, with consequences no human can reverse.

The question of whether AI vendors can and should impose ethical constraints on government deployments will define the next decade of technology policy. Anthropic's stand, whatever its short-term business consequences, has forced that question into the open in a way that no policy paper or congressional hearing ever could.

What Happens Next: An Industry, a Government, and a Nation at a Crossroads:

The situation continues to evolve at a pace that makes certainty impossible. OpenAI has stepped into the breach with a Pentagon deal. xAI is positioning as an unrestricted alternative. Google has yet to formally take a position, though employee sentiment and individual executive statements indicate strong sympathy with Anthropic's stance.

For Anthropic, the immediate business risk is severe — but the company's public stand has also earned it enormous credibility in AI safety, civil liberties, and responsible technology circles. That reputational capital may ultimately define its brand identity in ways that outlast any single government contract.

For the broader AI industry, the precedent being set here carries enormous weight. If the U.S. government can successfully blacklist any AI company that refuses unrestricted military use, the chilling effect on responsible AI development could be profound and long-lasting.

And for citizens, whose data, communications, and movements could one day be processed by autonomous AI systems operating at military scale, the outcome of this fight is anything but abstract. This is a defining moment for how AI will be governed, deployed, and constrained in the world's most powerful nation. The rest of the world is watching closely.

Key Takeaways: Everything You Need to Know:

- Anthropic was designated a Supply-Chain Risk to National Security by the Pentagon after refusing to allow its AI to be used for mass domestic surveillance or fully autonomous weapons.
- President Trump ordered all federal agencies to cease use of Anthropic products, with a six-month phase-out period, effectively blacklisting the company from the U.S. government.
- Over 360 tech workers across OpenAI and Google signed an open letter of solidarity, calling on their leaders to stand with Anthropic and refuse the Pentagon's demands.
- OpenAI moved quickly to fill the Pentagon contract void, claiming to preserve the same red lines Anthropic had fought for.
- Elon Musk's xAI is positioning itself as an unrestricted alternative, potentially reshaping the defense AI landscape entirely.
- The core issue transcends this single contract: who controls the most powerful AI systems, the companies that build them or the governments that deploy them?