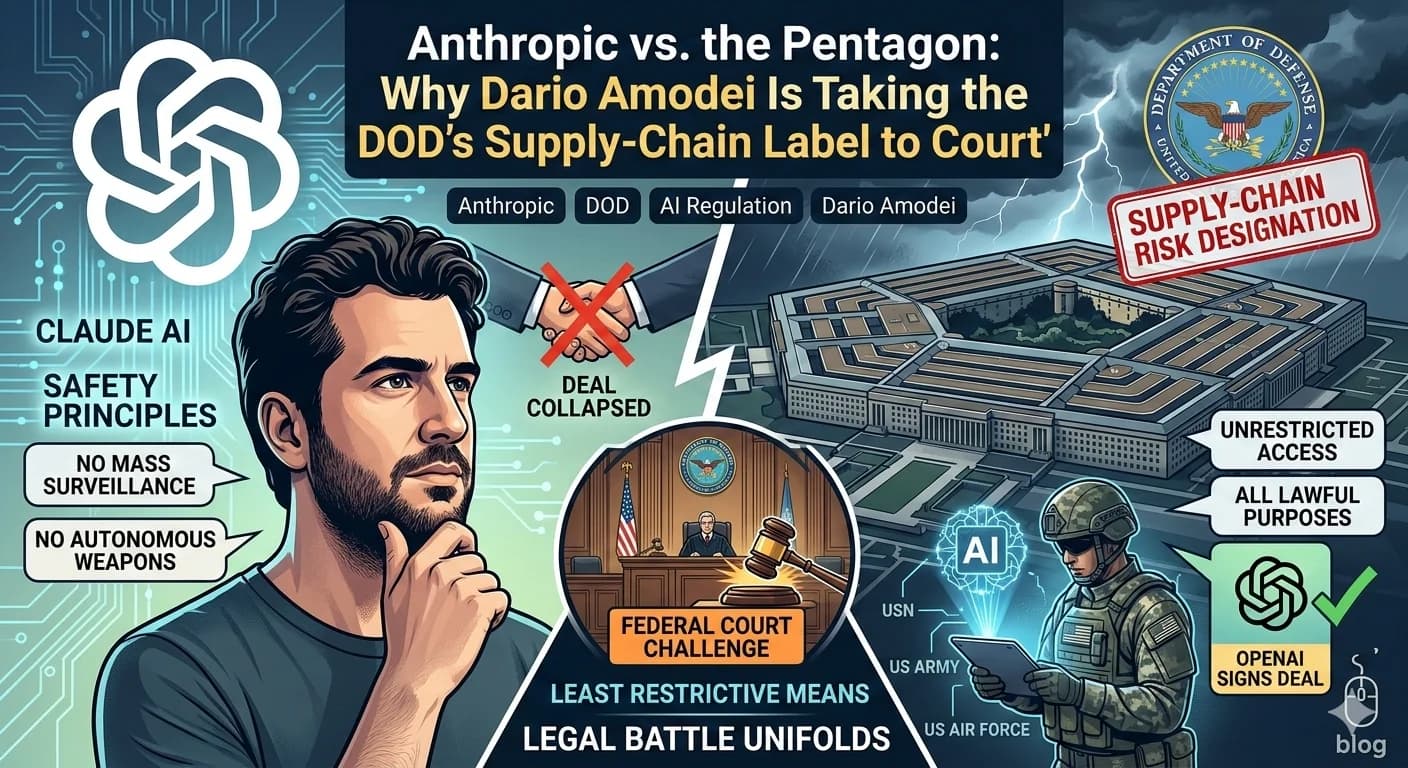

Anthropic vs. the Pentagon: Why Dario Amodei Is Taking the DOD's Supply-Chain Label to Court.

The Breaking Point:

The standoff between Anthropic and the U.S. Department of Defense reached a tipping point on Thursday. Just hours after the Pentagon officially designated the AI company a supply-chain risk — a label that can bar a firm from working with the military and its contractors — CEO Dario Amodei stepped forward with a clear and defiant message: Anthropic plans to challenge the designation in court.

The roots of the conflict run deeper than a single label. At the heart of the dispute is a fundamental disagreement over how much control the U.S. military should have over AI systems. Amodei drew a firm line — Claude will not be used for mass surveillance of American citizens, nor for fully autonomous weapons systems. The Pentagon, for its part, demanded unrestricted access to Claude for what it described as "all lawful purposes." Neither side was willing to yield.

What a Supply-Chain Risk Designation Actually Means:

For most companies, a supply-chain risk designation effectively ends their relationship with the government. Once applied by the Department of Defense, it can cut a vendor off from Pentagon contracts and, critically, restrict other DOD contractors from working with that vendor as part of their government-facing operations. It is one of the most powerful tools the military has to distance itself from a private technology partner.

Amodei, however, moved quickly to reframe what the designation actually covers. In his Thursday statement, he argued that the ruling is far narrower in scope than initial reporting suggested: "It plainly applies only to the use of Claude by customers as a direct part of contracts with the Department of War, not all use of Claude by customers who have such contracts." In other words, the vast majority of Anthropic's commercial customers — even those who hold DOD contracts — remain unaffected as long as their use of Claude is unrelated to those specific government contracts.

The Legal Argument Anthropic Will Make:

Anthropic's court challenge will likely hinge on a principle baked into the very law the DOD used against it. Amodei made the case plainly: the law requires the Secretary of Defense to use the least restrictive means necessary to protect the supply chain. The designation, he argued, exists to protect the government — not to punish a supplier. And under that standard, Anthropic believes the Pentagon overreached.

The legal terrain is difficult, but not impassable. Dean Ball, a former Trump-era White House adviser on AI who has publicly criticized the DOD's handling of Anthropic, put it in clear terms: "Courts are pretty reluctant to second-guess the government on what is and is not a national security issue… There's a very high bar that one needs to clear in order to do that. But it's not impossible." Any federal case — likely filed in Washington — will test whether courts are willing to scrutinize the Pentagon's broad national security discretion when a private company's livelihood and principles are on the line.

A Leaked Memo, an Apology, and a Derailed Negotiation:

The path to court was not inevitable — and Amodei knows it. By his own account, Anthropic had been having productive conversations with the DOD over the days leading up to the designation. Those talks appeared to collapse after an internal memo he had written to staff was leaked publicly. In it, Amodei described rival OpenAI's deal with the Department of Defense as "safety theater" — a characterization that quickly ignited a media firestorm and, many believe, accelerated the Pentagon's decision.

Amodei was candid about the memo and its fallout. He said it was written within hours of a rapid-fire sequence of announcements — a presidential Truth Social post declaring Anthropic would be removed from federal systems, Defense Secretary Pete Hegseth's formal supply-chain designation, and the Pentagon's announcement of a new AI deal with OpenAI, all landing in close succession.

"It is not in our interest to escalate the situation," Amodei said, apologizing for the memo's tone and saying it was written on "a difficult day for the company." He noted that the memo was written six days before his public statement and described it as an "out-of-date assessment," signaling that both the situation and Anthropic's posture have evolved.

OpenAI Steps In — and Faces Its Own Backlash:

While Anthropic prepares for a legal fight, OpenAI has moved into the space Anthropic left behind. The company has signed a deal to work with the Department of Defense in Anthropic's place. That deal has sparked significant backlash among OpenAI staff, raising uncomfortable questions about the ethical lines AI companies are willing to draw, or not draw, when government contracts are on the table.

The contrast between the two companies could not be starker. Anthropic walked away from a Pentagon partnership rather than compromise its stated limits on AI use. OpenAI stepped in. How the broader AI industry, the public, and eventually the courts respond to that divergence will shape the norms of AI governance for years to come.

Anthropic's Commitment While the Legal Battle Unfolds:

Even as it prepares to challenge the government, Anthropic has made clear it will not abandon the soldiers and national security professionals currently relying on its tools. The company is actively supporting ongoing U.S. operations in Iran and has committed to continuing to provide its models to the DOD at nominal cost for as long as necessary to ensure a smooth transition. It is a striking posture — adversarial in court, cooperative in the field — and one that speaks to Amodei's effort to separate the legal dispute from the human stakes on the ground.

The situation remains fluid, and the outcome is far from certain. But Anthropic has drawn its line: AI will not be a tool for unchecked government surveillance or fully autonomous warfare, and the company is willing to fight for that principle all the way to federal court.

What This Means for the AI Industry:

The Anthropic-DOD dispute is not just a corporate legal story — it's a referendum on the future of AI in government. For AI companies with federal ambitions, it raises an urgent question: how much ethical autonomy can a private AI firm hold onto when the U.S. military is the client? For policymakers, it exposes the blunt power of supply-chain risk designations as a tool of coercion — and the legal ambiguity around how far that power extends.

And for anyone watching the intersection of artificial intelligence, national security, and corporate ethics, this case — whenever it lands in federal court — is one of the most consequential to follow in the coming months. The rules of engagement between AI companies and the U.S. government are being written right now.
