Inside the San Francisco Lab Where Robots Are Starting to Surprise Their Own Creators

A Robot Brain That Surprises Its Own Creators:

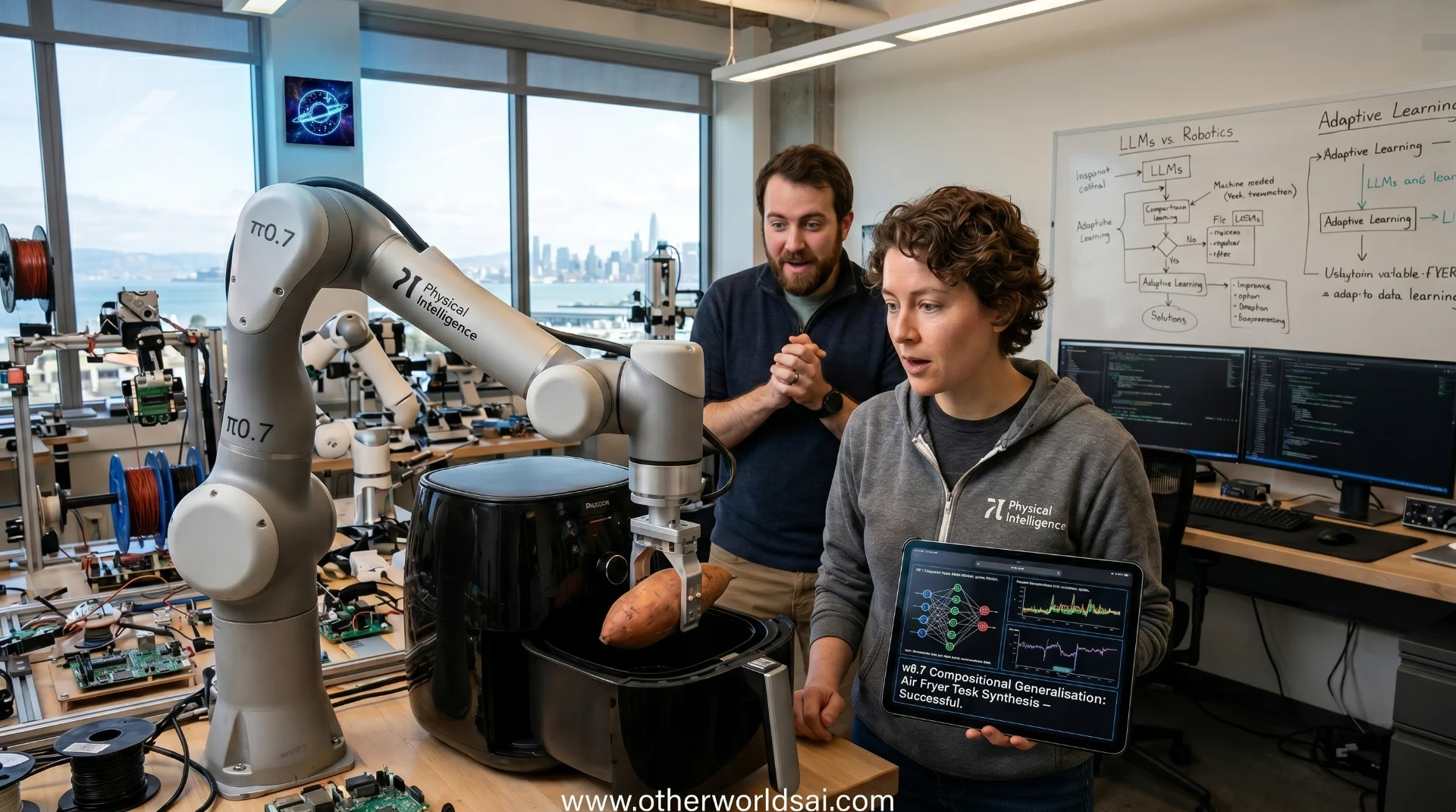

Something unusual is happening inside Physical Intelligence's San Francisco lab — and even the researchers there weren't fully prepared for it. The two-year-old robotics startup, already one of the most closely watched AI companies in the Bay Area, has published new research revealing that its latest model, π0.7, can direct robots to perform tasks they were never explicitly trained on.

The implications for general-purpose robotics, AI-powered automation, and the future of intelligent machines are profound.

This is not incremental progress — it represents a potential inflection point for the entire field. Physical Intelligence's findings suggest that robotic AI may be approaching the same kind of compound capability growth that the world witnessed with large language models — where abilities begin to scale in ways that dramatically outpace what the underlying training data would seem to make possible. If the research holds up to outside scrutiny, the robot intelligence landscape may never look the same again.

What Is Compositional Generalization — And Why Does It Matter:

At the heart of the π0.7 breakthrough is a concept called compositional generalization — and understanding it is key to understanding why this research is significant. Traditional robot training methods have historically relied on what amounts to rote memorization: collect specific data for a specific task, train a specialist model on that data, then repeat the entire process for every new task the robot needs to learn. It's expensive, time-consuming, and doesn't scale. π0.7 breaks that pattern entirely.

Compositional generalization means the model can combine skills learned in entirely different contexts to solve problems it has never encountered before. Think of it the way a human learns: you don't need to be explicitly taught how to open every door you've ever seen — you develop an understanding of doors in general, and you apply that knowledge flexibly. Physical Intelligence's research claims that π0.7 is beginning to do something analogous — building a flexible, remixable understanding of the physical world rather than a rigid library of memorized routines.

"Once it crosses that threshold where it goes from only doing exactly the stuff that you collect the data for to actually remixing things in new ways, the capabilities are going up more than linearly with the amount of data." — Sergey Levine, Co-Founder, Physical Intelligence.
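One toy way to read "more than linearly": suppose a model holds n separate skills and can chain any two distinct ones in order. The number of one-step and two-step behaviors is then n + n(n-1) = n², so doubling the skills quadruples the behaviors. The sketch below counts this under that assumption. The function name and the chaining model are illustrative only and do not come from the Physical Intelligence paper.

```python
from math import perm


def count_behaviors(num_skills: int, max_chain: int = 2) -> int:
    """Count ordered chains of distinct skills with length 1..max_chain.

    Toy assumption (not from the paper): every skill can be chained
    with every other skill, and order matters.
    """
    return sum(perm(num_skills, k) for k in range(1, max_chain + 1))


# Doubling the number of skills quadruples the count when max_chain is 2.
for n in (2, 4, 8):
    print(n, count_behaviors(n))  # prints 2 4, then 4 16, then 8 64
```

Real skills do not all combine cleanly, so this is an upper bound on a toy model rather than a prediction of how π0.7 scales.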

The Air Fryer Test: A Striking Proof of Concept:

The most compelling demonstration in the research paper involves a household appliance, an air fryer, and a result that genuinely stunned the team. When researchers examined the training dataset to understand how π0.7 managed to use an air fryer it had essentially never seen, they found only two relevant episodes in the entire dataset: one where a different robot merely pushed the air fryer closed, and another from an open-source dataset where a robot placed a plastic bottle inside one on someone's instructions. That was it. And yet the model synthesized those fragments, combined with broader web-based pretraining data, into a functional understanding of how the appliance works.

The test results were remarkable — and instructive about the role of human communication in AI-robot collaboration. With zero coaching, the model made a passable attempt at using the air fryer to cook a sweet potato. But when a researcher walked the robot through the task step by step — essentially explaining it the way you might coach a new employee — the model performed successfully. The improvement from verbal instruction underscores an important capability of π0.7: it can be guided through unfamiliar tasks in plain language, in real time, without needing additional data collection or model retraining.
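The coaching pattern described above can be sketched as a loop that hands a robot policy one plain-language step at a time and stops when a step fails, so the human can rephrase it. Everything here is hypothetical: `CoachingSession`, `stub_policy`, and the example steps are stand-ins for illustration, not Physical Intelligence's interface or the instructions used in the experiment.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class CoachingSession:
    """Feeds plain-language steps to a policy one at a time (hypothetical)."""

    execute_step: Callable[[str], bool]  # returns True if the step succeeded
    log: List[str] = field(default_factory=list)

    def run(self, steps: List[str]) -> bool:
        for step in steps:
            ok = self.execute_step(step)
            self.log.append(f"{'ok' if ok else 'failed'}: {step}")
            if not ok:
                return False  # stop so the operator can rephrase this step
        return True


def stub_policy(instruction: str) -> bool:
    """Stand-in for a language-conditioned robot policy.

    It only checks that the instruction is non-empty; a real policy
    would drive the robot and report whether the step succeeded.
    """
    return bool(instruction.strip())


# Illustrative steps only; the article does not list the actual wording.
steps = [
    "open the air fryer basket",
    "place the sweet potato inside",
    "push the basket closed",
]

session = CoachingSession(stub_policy)
succeeded = session.run(steps)
print(succeeded, len(session.log))
```

The point of the design is that no retraining happens inside the loop: the only thing that changes between attempts is the wording of the steps.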

One of the most telling details from the experiment speaks to the importance of prompt engineering in robotics AI. Physical Intelligence researcher Lucy Shi — also a Stanford computer science Ph.D. student — described an early attempt that produced just a 5% success rate. After spending approximately 30 minutes refining how the task was explained to the model, that number jumped to 95%. As Shi put it:

"Sometimes the failure mode is not on the robot or on the model. It's on us. Not being good at prompt engineering."

How π0.7 Compares to Traditional Specialist Robot Models:

One of the most significant claims in the Physical Intelligence research is that a generalist model can now match the performance of purpose-built specialist systems. The company measured π0.7 against its own previous specialist robot models — systems trained individually for single tasks — and found that the new generalist AI model matched their performance across a range of complex, real-world activities including making coffee, folding laundry, and assembling boxes. This is a milestone, because specialist models have long held the advantage over generalist approaches in task-specific performance.

That said, the model has clearly defined limitations that the researchers are transparent about. π0.7 is not yet capable of executing complex, multi-step autonomous tasks from a single high-level command. Co-founder and UC Berkeley professor Sergey Levine was direct about this boundary:

"You can't tell it, 'Hey, go make me some toast.' But if you walk it through — 'for the toaster, open this part, push that button, do this' — then it actually tends to work pretty well."

The absence of standardized robotics benchmarks is another honest limitation the team acknowledges. Unlike vision or language models, there are no universally accepted evaluation frameworks for general-purpose robot intelligence — making external validation difficult. The company's comparisons are largely internal, which is a caveat worth noting when evaluating the strength of the claims.

The Moment of Genuine Surprise — And Why It Matters for Robotics AI:

What makes the π0.7 research especially compelling is not any single demo — it's the degree to which the results surprised researchers who are paid to know exactly what the model should be capable of. Research scientist Ashwin Balakrishna described his experience in terms that signal something has genuinely shifted in machine learning for robotics:

"My experience has always been that when I deeply know what's in the data, I can kind of just guess what the model will be able to do. I'm rarely surprised. But the last few months have been the first time where I'm genuinely surprised. I just bought a gear set randomly and asked the robot, 'Hey, can you rotate this gear?' And it just worked."

Levine drew a direct parallel to one of the most celebrated moments in the history of large language models. He recalled the first time researchers encountered GPT-2 generating an unexpected story about unicorns in the Andes — a combination of concepts so specific and novel that it signaled the model had developed genuine compositional understanding, not just pattern-matching. Seeing that same quality of surprise emerge in robotics, he says, is "really special." It suggests that robot learning may be entering a new phase — one where capabilities compound rather than merely accumulate.

Real-World Deployment: When Will AI Robots Be Ready:

The question everyone wants answered — when will robots based on this technology be ready for real-world deployment — is one that Levine declines to answer directly. The paper is careful to use hedging language throughout, describing π0.7 as showing "early signs" of generalization and "initial demonstrations" of new capabilities. These are research results, not a deployed commercial product. Still, Levine acknowledges the pace of progress has exceeded his own expectations:

"I think there's good reason to be optimistic, and certainly it's progressing faster than I expected a couple of years ago. But it's very hard for me to answer that question."

The coaching model that π0.7 enables does, however, point toward a plausible near-term deployment pathway. If robots can be placed in new environments and guided through unfamiliar tasks by human operators using plain language, without requiring expensive robot retraining or new data collection, the barrier to deploying AI-powered robots in industries like warehousing, healthcare, food service, and manufacturing drops significantly. That's a commercial proposition worth watching closely.

Addressing the Skeptics: Generalization vs. Showmanship:

Critics of AI robotics research have long argued that impressive demos don't translate to useful, general-purpose systems, and Levine knows exactly what pushback to expect. He anticipates the criticism that the tasks demonstrated are, frankly, boring: the robot isn't doing a backflip; it's using an air fryer. But Levine reframes this directly and compellingly.

The distinction between a choreographed robot stunt and a genuinely generalizing robotic AI system is precisely the point. Generalization will always look less dramatic than a carefully designed demo — but it is, he argues, considerably more useful.

There is also the fundamental data asymmetry that no amount of clever prompting fully resolves. Large language models had the entire internet to learn from. Robots learning from physical world data face an inherently harder challenge — the volume of available robot training data is vastly smaller than the text corpora that powered the LLM revolution.

Physical Intelligence is betting that web-based pretraining, combined with compositional generalization and real-time verbal coaching, can bridge enough of that gap to make generalist robots viable.

Physical Intelligence: The Billion-Dollar Bet on General-Purpose Robotics:

The investor community has already placed a substantial wager on Physical Intelligence's vision. The company has raised over $1 billion to date and carries a valuation of $5.6 billion — making it one of the most generously funded AI robotics startups in the world. A significant driver of that institutional confidence is co-founder Lachy Groom, a veteran Silicon Valley angel investor whose early bets on Figma, Notion, and Ramp established him as one of the sharpest talent-spotters in the Bay Area startup ecosystem.

What's striking is that investors have committed this capital without a clear commercialization timeline. Physical Intelligence has deliberately refused to offer one — a choice that speaks to the long-horizon nature of the general-purpose robot AI problem they're tackling. The message to the market seems clear: this is a foundational technology play, not a near-term product company. Whether π0.7 represents the beginning of the payoff on that bet — or simply a promising early data point on a much longer road — remains to be seen.

What π0.7 Tells Us About the Future of Intelligent Machines:

The story of π0.7 is ultimately a story about the nature of intelligence and how unexpectedly it can emerge. If Physical Intelligence's findings are validated by independent researchers, they suggest that robotic AI is beginning to exhibit something that looks less like sophisticated automation and more like adaptive, generalizable understanding. That's a meaningful distinction, and one with enormous implications for how industries think about deploying AI robots in the real world.

The question is no longer whether robots will eventually be able to figure things out on their own — the question is how fast that capability matures, and who builds the infrastructure to harness it.

Physical Intelligence, with its $5.6 billion valuation, world-class research team, and a model that is already surprising its own creators, is one of the companies most likely to find out first.