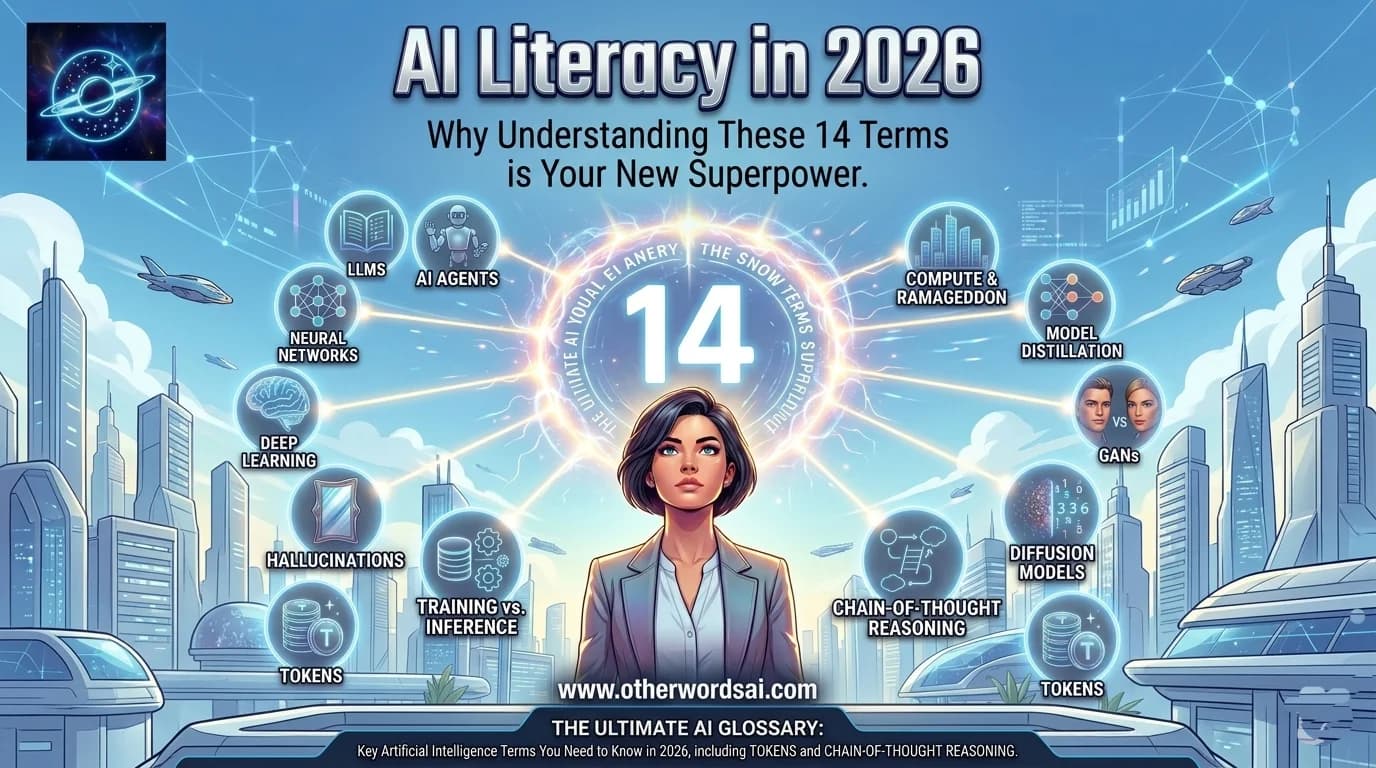

AI Literacy in 2026: Why Understanding These 14 Terms Is Your New Superpower:

The Ultimate AI Glossary: Key Artificial Intelligence Terms You Need to Know in 2026:

Understanding AI starts with the vocabulary. From large language models (LLMs) to hallucinations, neural networks to AI agents — this beginner-friendly AI glossary breaks down the most important artificial intelligence terms in plain English. Whether you're a developer, business leader, or curious learner, this guide is your essential reference for navigating the rapidly evolving world of AI technology.

AGI (Artificial General Intelligence): The Holy Grail of AI Research:

Artificial General Intelligence, or AGI, is one of the most debated concepts in modern AI. While no universal definition exists, AGI broadly refers to AI that matches or exceeds average human capability across a wide range of tasks — not just one narrow domain. OpenAI describes it as "highly autonomous systems that outperform humans at most economically valuable work," while Google DeepMind frames it as AI capable of handling most cognitive tasks at a human level. Researchers continue to debate exactly where the AGI threshold lies — proof that even the world's top AI experts are still figuring this one out.

1- AI Agents: Beyond Chatbots — Autonomous AI That Acts on Your Behalf:

An AI agent is far more powerful than a basic chatbot. While a chatbot answers questions, an AI agent performs multi-step tasks autonomously — booking travel, managing expenses, writing and maintaining code, or orchestrating complex workflows. Think of it as hiring a digital employee that draws on multiple AI systems to complete goals end-to-end. AI agents represent one of the most exciting and fast-growing frontiers in artificial intelligence today, with infrastructure still being built to unlock their full potential.

2- Chain-of-Thought Reasoning: How AI Models Solve Complex Problems Step by Step:

Chain-of-thought (CoT) reasoning is the AI equivalent of showing your work on a math test. Rather than jumping straight to an answer, large language models that use CoT break complex problems into smaller, logical intermediate steps, which tends to improve accuracy in areas like mathematics, coding, and multi-step reasoning. Reasoning models are trained specifically for this style of thinking using reinforcement learning; they take longer to respond but are generally more reliable on difficult tasks.

3- Large Language Models (LLMs): The Engine Behind ChatGPT, Claude, and Gemini:

Large language models are the core technology powering today's most popular AI assistants. ChatGPT, Claude, Google Gemini, Meta Llama, and Microsoft Copilot are all built on LLMs: deep neural networks with billions of numerical parameters trained on vast amounts of text data. When you send a prompt, the LLM builds its response one token at a time, repeatedly predicting a likely next token given everything that came before. Those predictions draw on a sophisticated "map" of language relationships learned from billions of books, articles, and transcripts.
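To make next-token prediction concrete, here is a minimal sketch of a single prediction step. The candidate tokens and their scores are invented for illustration; a real LLM scores tens of thousands of candidate tokens with a neural network and often samples from the distribution instead of always taking the top choice.

```python
import math

def softmax(logits):
    """Turn raw scores into probabilities that sum to 1."""
    peak = max(logits.values())  # subtract the max for numerical stability
    exps = {tok: math.exp(score - peak) for tok, score in logits.items()}
    total = sum(exps.values())
    return {tok: value / total for tok, value in exps.items()}

# Hypothetical scores a model might assign to candidate next tokens
# after the prompt "The capital of France is". The numbers are made up.
toy_logits = {" Paris": 6.0, " Lyon": 2.5, " pizza": -1.0}

probs = softmax(toy_logits)

# Greedy decoding: pick the single most probable token.
next_token = max(probs, key=probs.get)
print(repr(next_token), round(probs[next_token], 3))
```

Generating a full response repeats this step: the chosen token is appended to the prompt and the model scores the next position.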

4- AI Hallucinations: Why AI Sometimes Makes Things Up, and Why It Matters:

Hallucination is arguably the most critical challenge in generative AI today. It refers to AI models confidently producing information that is factually incorrect: inventing citations, misquoting statistics, or fabricating entire events. One major cause is gaps in training data, since no dataset is comprehensive enough to cover every question a user might ask. Another is that these models are built to produce plausible-sounding text, not to check facts. The consequences range from minor embarrassment to serious real-world harm, particularly in healthcare, legal, and financial contexts. This is why most AI platforms now include disclaimers urging users to verify AI-generated content.

5- Neural Networks: The Brain-Inspired Architecture Fueling the AI Revolution:

Neural networks are the foundational architecture behind virtually all modern AI. Inspired by the interconnected neurons of the human brain, these multi-layered algorithms learn to identify patterns in data — enabling breakthroughs in voice recognition, image processing, autonomous driving, and drug discovery. The real turning point came with the rise of GPUs (originally built for gaming), which proved extraordinarily well-suited to training deep, complex neural networks at scale.
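As a rough illustration of what "multi-layered" means, the sketch below pushes two input numbers through a tiny network with one hidden layer. The weights are hand-picked placeholders, not learned values; in a real network they would be set by training, and there would be far more of them.

```python
import math

def sigmoid(x):
    """Squash any number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))

def layer(inputs, weights, biases):
    """One dense layer: a weighted sum per neuron, followed by a nonlinearity."""
    return [
        sigmoid(sum(w * x for w, x in zip(neuron_weights, inputs)) + b)
        for neuron_weights, b in zip(weights, biases)
    ]

# Illustrative, hand-picked parameters (assumptions, not trained values).
hidden_w = [[0.5, -0.4], [0.3, 0.8]]  # 2 hidden neurons, 2 inputs each
hidden_b = [0.0, -0.1]
output_w = [[1.2, -0.7]]              # 1 output neuron, 2 hidden inputs
output_b = [0.05]

def forward(inputs):
    """Pass the inputs through the hidden layer, then the output layer."""
    hidden = layer(inputs, hidden_w, hidden_b)
    return layer(hidden, output_w, output_b)[0]

score = forward([1.0, 2.0])
print(round(score, 4))
```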

6- Deep Learning: How AI Models Learn to Think in Layers:

Deep learning is a specialized subset of machine learning that uses multi-layered neural networks to make sophisticated inferences from raw data. Unlike simpler ML approaches, deep learning models can automatically identify the most important features in a dataset without human engineers defining them manually. The tradeoff: deep learning typically requires very large datasets, often millions of data points, along with significant compute resources, which makes training expensive. Even so, the performance gains have made it the dominant paradigm in modern AI.

7- Training vs. Inference: The Two Pillars of How AI Models Work:

Training and inference are the two fundamental phases of any AI model's lifecycle. Training is the process of feeding data into a model so it learns patterns — starting from random parameters and iteratively adjusting until outputs match the desired goal. Inference is what happens when you actually use the model — prompting it to generate a response based on everything it learned during training. Training is typically expensive and time-consuming; inference can run on everything from smartphones to cloud servers with specialized AI chips.
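The two phases can be sketched with a deliberately tiny model: a straight line with two parameters, fitted by gradient descent. The dataset, learning rate, and step count are assumptions chosen so the example converges quickly; real models have billions of parameters, but the loop has the same shape.

```python
import random

random.seed(0)

# Toy dataset: the "desired goal" is the relationship y = 2x + 1.
data = [(x, 2.0 * x + 1.0) for x in range(-5, 6)]

# --- Training: start from random parameters and adjust them iteratively ---
w = random.uniform(-1.0, 1.0)
b = random.uniform(-1.0, 1.0)
learning_rate = 0.01

for _ in range(2000):
    grad_w = grad_b = 0.0
    for x, y in data:
        error = (w * x + b) - y           # how far off the prediction is
        grad_w += 2 * error * x / len(data)
        grad_b += 2 * error / len(data)
    w -= learning_rate * grad_w           # nudge parameters to reduce the error
    b -= learning_rate * grad_b

# --- Inference: use the learned parameters on an input never seen in training ---
def predict(x):
    return w * x + b

print(round(w, 3), round(b, 3), round(predict(10), 3))
```

Training touches every example thousands of times, while inference is a single cheap calculation, which mirrors the cost gap described above.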

8- Fine-Tuning & Transfer Learning: Making General AI Models Domain-Specific:

Fine-tuning and transfer learning are powerful techniques that allow developers to adapt pre-trained AI models for specialized tasks. Fine-tuning involves further training an existing LLM on domain-specific data — for instance, training a general model on medical records to create a healthcare AI assistant. Transfer learning reuses knowledge from previous training cycles to shortcut development when data is limited. Together, these approaches are how AI startups rapidly build competitive, specialized products on top of foundation models from OpenAI, Anthropic, Google, and others.

9- Tokens: The Currency of Human-AI Communication (and AI Pricing):

Tokens are the fundamental unit of how AI models process and generate language. Through a process called tokenization, raw text is broken into discrete chunks — words, word-parts, or characters — that an LLM can interpret and respond to. There are input tokens (your prompts), output tokens (the AI's responses), and reasoning tokens (generated during complex problem-solving). For enterprise users, token usage directly determines cost — most AI providers including OpenAI and Anthropic charge per token consumed, making token efficiency a key business consideration.
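The sketch below shows the idea of tokenization and per-token billing. Both parts are simplifications: `naive_tokenize` is a hypothetical helper that splits on words and punctuation, whereas production tokenizers use learned subword vocabularies, and the prices are placeholder numbers, not any provider's actual rates.

```python
import re

def naive_tokenize(text):
    """Split text into words and punctuation marks.

    Illustration only: real LLM tokenizers use learned subword vocabularies,
    so their token counts will differ from this.
    """
    return re.findall(r"\w+|[^\w\s]", text)

# Hypothetical prices in dollars per one million tokens (assumed values).
PRICE_PER_MILLION = {"input": 3.00, "output": 15.00}

def estimate_cost(input_tokens, output_tokens):
    """Estimate the dollar cost of one request from its token counts."""
    total = (input_tokens * PRICE_PER_MILLION["input"]
             + output_tokens * PRICE_PER_MILLION["output"])
    return total / 1_000_000

tokens = naive_tokenize("Hello, world!")
print(tokens, len(tokens))
print(estimate_cost(input_tokens=2_000, output_tokens=500))
```

Under these assumed prices, output tokens cost five times as much as input tokens, which is why trimming verbose responses can matter more than trimming prompts.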

10- Diffusion Models: The Technology Behind AI-Generated Images, Music, and Art:

Diffusion models are the secret engine behind today's most impressive AI-generated media. Inspired by physics, these systems progressively add random noise to training data until the original structure is completely destroyed — then learn to reverse this process to reconstruct images, audio, or video from pure noise. This "reverse diffusion" capability is what allows models like Stable Diffusion and DALL-E to generate photorealistic images from simple text prompts.
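The noise-adding half of this process is simple enough to sketch directly. The example assumes a DDPM-style formulation with a linear noise schedule, which is one common choice and not necessarily what any particular product uses; the schedule values and the four-number "image" are placeholders. The learned reverse process, which needs a trained neural network, is not shown.

```python
import math
import random

def noise_schedule(steps, beta_start=1e-4, beta_end=0.02):
    """Return alpha_bar[t]: the share of the original signal's variance
    that survives after t + 1 noising steps (linear beta schedule)."""
    alpha_bars, running = [], 1.0
    for t in range(steps):
        beta = beta_start + (beta_end - beta_start) * t / (steps - 1)
        running *= 1.0 - beta
        alpha_bars.append(running)
    return alpha_bars

def add_noise(x0, alpha_bar, rng):
    """Blend clean data with Gaussian noise at a chosen noise level."""
    signal, noise = math.sqrt(alpha_bar), math.sqrt(1.0 - alpha_bar)
    return [signal * v + noise * rng.gauss(0.0, 1.0) for v in x0]

rng = random.Random(42)
alpha_bars = noise_schedule(1000)
clean = [1.0, -1.0, 0.5, 0.0]                         # stand-in for pixel values

slightly_noisy = add_noise(clean, alpha_bars[0], rng)  # almost unchanged
pure_noise = add_noise(clean, alpha_bars[-1], rng)     # structure destroyed
print(alpha_bars[0], alpha_bars[-1])
```

A diffusion model is trained to predict the noise that was added at each level, so that at generation time it can start from random noise and remove it step by step.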

11- Model Distillation: How AI Labs Build Smaller, Faster Models From Larger Ones:

Distillation is a model compression technique that transfers knowledge from a large, powerful "teacher" model to a smaller, faster "student" model. Developers run queries through the teacher, collect outputs, and use them to train the student to approximate the teacher's performance at a fraction of the computational cost. This technique is widely believed to underpin faster model variants such as GPT-4 Turbo. Distilling from your own model is routine, but using a competitor's model as the teacher typically violates that provider's terms of service.
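One common way to train the student, assumed here for illustration, is to compare its output probabilities with the teacher's "softened" probabilities and penalize the difference. The logits and the temperature below are made-up values; a real training loop would compute this loss over many examples and use it to update the student's parameters.

```python
import math

def softmax(logits, temperature=1.0):
    """Convert scores to probabilities; a higher temperature flattens them."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)
    exps = [math.exp(z - peak) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def distillation_loss(teacher_logits, student_logits, temperature=2.0):
    """KL divergence from the teacher's softened distribution to the student's.

    Zero when the student matches the teacher exactly, larger as they diverge.
    """
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q))

teacher = [4.0, 1.0, 0.2]          # hypothetical teacher scores for 3 classes
close_student = [3.8, 1.1, 0.3]    # nearly agrees with the teacher
far_student = [0.2, 1.0, 4.0]      # ranks the classes the other way round

print(distillation_loss(teacher, close_student))
print(distillation_loss(teacher, far_student))
```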

12- Compute & RAMageddon: Why AI Is Causing a Global Hardware Crisis:

Compute — the raw processing power that trains and runs AI models — has become the scarcest and most valuable resource in the tech industry. GPUs, TPUs, and custom AI accelerators form the backbone of every major AI lab's infrastructure. But the AI boom has triggered what the industry now calls "RAMageddon" — a severe shortage of random access memory (RAM) chips as tech giants race to build out AI data centers. The ripple effects are hitting consumer electronics, gaming, and enterprise computing alike, with prices rising and supply remaining constrained with no clear end in sight.

13- GANs (Generative Adversarial Networks): The Architecture Behind Deepfakes:

Generative Adversarial Networks are a clever machine learning framework built on competition. A "generator" network creates synthetic data and passes it to a "discriminator" network that tries to detect whether it's real or fake. The two models continuously try to outdo each other — this adversarial loop pushes both toward increasingly realistic outputs without requiring additional human feedback. GANs are particularly effective for generating realistic images and videos, and they form the core technology behind many deepfake tools.
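The competition can be expressed as two opposing loss functions. This sketch assumes the standard binary cross-entropy setup with the widely used "non-saturating" generator loss, and it takes the discriminator's outputs as given numbers instead of computing them with real networks.

```python
import math

def discriminator_loss(d_real, d_fake):
    """Low when the discriminator scores real data near 1 and fakes near 0.

    d_real and d_fake are the discriminator's estimated probabilities
    (strictly between 0 and 1) that a sample is real.
    """
    return -(math.log(d_real) + math.log(1.0 - d_fake))

def generator_loss(d_fake):
    """Low when the discriminator is fooled into scoring fakes near 1."""
    return -math.log(d_fake)

# Early in training (assumed numbers): fakes are easy to spot.
print(discriminator_loss(d_real=0.9, d_fake=0.1), generator_loss(d_fake=0.1))

# Later in training: the discriminator can no longer tell them apart.
print(discriminator_loss(d_real=0.5, d_fake=0.5), generator_loss(d_fake=0.5))
```

Any change that lowers the generator's loss (a higher `d_fake`) raises the discriminator's, which is what drives the adversarial loop.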

Stay Ahead of the AI Curve: Why This Glossary Matters for 2026 and Beyond:

Artificial intelligence is no longer a niche topic for researchers — it is reshaping every industry, profession, and daily routine. Understanding these core AI concepts — from LLMs and neural networks to hallucinations and AI agents — is now essential literacy for anyone navigating the modern world.

Bookmark this guide and check back regularly as we continue to add new AI terms, emerging safety concepts, and the latest developments from the frontier of machine learning research.