OpenAI's Biggest Week Yet: Real-Time Voice Intelligence, Live Translation, and a New Mental Health Safeguard That Changes Everything:

OpenAI’s New Realtime API: A Massive Opportunity for Enterprise Customer Service:

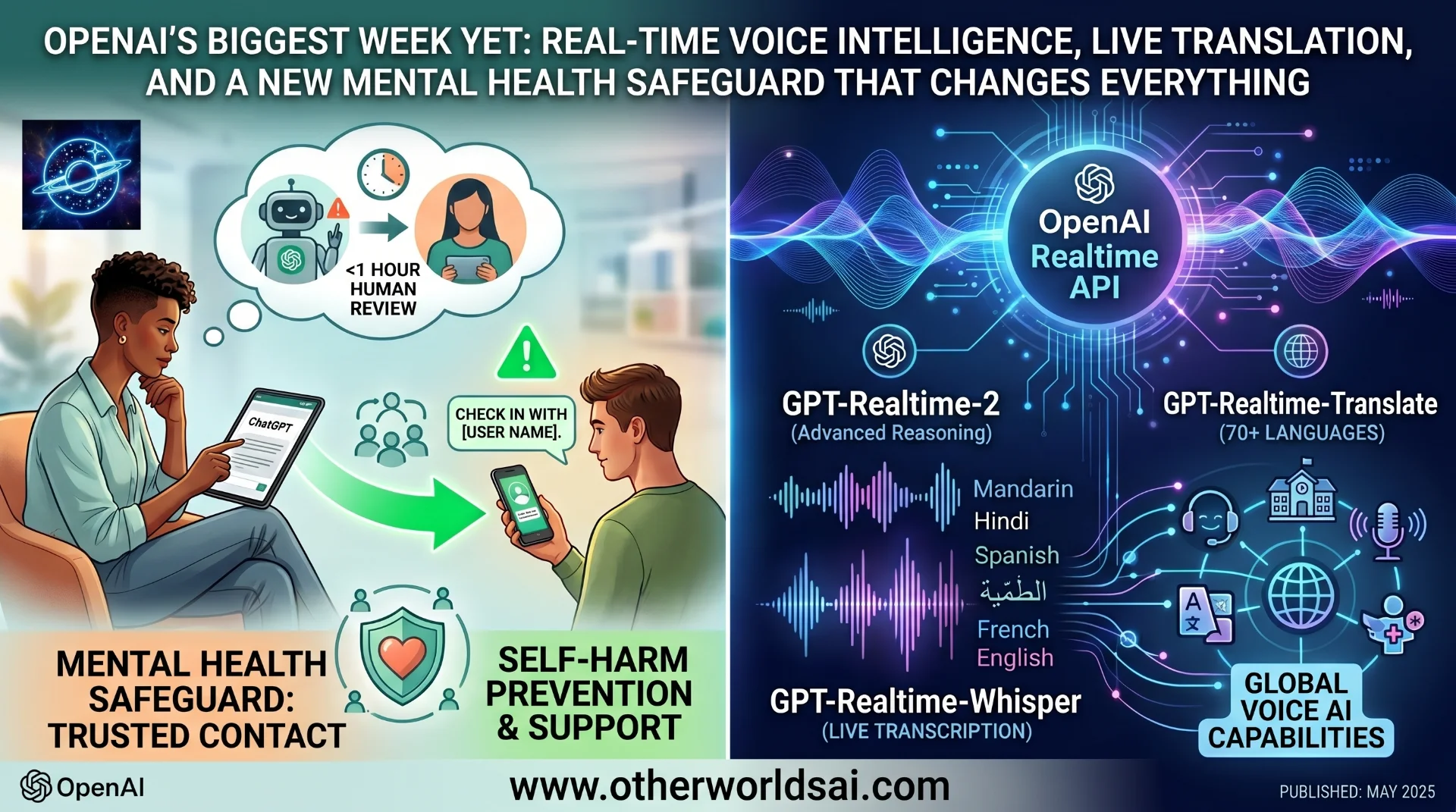

From GPT-5-class voice models and real-time translation across 70+ languages, to a Trusted Contact feature designed to protect vulnerable users from self-harm — OpenAI just dropped two announcements that signal where AI is heading next.

Two Announcements, One Clear Direction for AI in 2026:

OpenAI had a remarkable Thursday. In a single day, the company unveiled a suite of next-generation voice intelligence tools for developers alongside a first-of-its-kind mental health safety feature for everyday users. Taken together, the two announcements tell a coherent story: OpenAI is simultaneously pushing AI's capabilities to extraordinary new heights while grappling, more seriously than ever, with the human consequences of deploying those capabilities at scale.

On one hand, the company launched three powerful new voice AI models through its Realtime API — tools that allow developers to build applications that can listen, speak, translate, and transcribe in real time. On the other hand, it introduced Trusted Contact, a safeguard designed to alert a designated friend or family member when a ChatGPT user's conversation turns toward self-harm.

Together, these updates reflect the dual pressure OpenAI faces in 2025: to innovate faster than its competitors while taking meaningful responsibility for the impact its products have on real people's lives. Both pressures are intensifying — and this week, OpenAI responded to both at once.

The Voice Intelligence Leap — What OpenAI Just Launched for Developers:

OpenAI's Realtime API now includes three new voice AI models, each designed for a specific dimension of spoken interaction. This is not an incremental upgrade — it is a wholesale reimagining of what real-time voice AI can do for enterprise and consumer applications alike.

"Together, the models we are launching move real-time audio from simple call-and-response toward voice interfaces that can actually do work: listen, reason, translate, transcribe, and take action as a conversation unfolds." — OpenAI

The three new models each address a distinct challenge that developers and enterprises have faced when trying to build voice-first AI applications. Here is what each one does — and why it matters:

-

GPT-Realtime-2: The flagship of the trio, this new voice model is built on GPT-5-class reasoning — a significant leap from its predecessor, GPT-Realtime-1.5. While earlier voice models were capable of basic conversational responses, GPT-Realtime-2 is engineered to handle complex, multi-step requests without breaking conversational flow. Think of it as moving from a voice assistant that can answer questions to one that can actually reason through problems in real time.

-

GPT-Realtime-Translate: A dedicated real-time translation model that supports more than 70 input languages and 13 output languages. Unlike static translation tools, this one is built to keep pace with the natural rhythm of human conversation — processing and rendering translations fast enough that they feel seamless rather than delayed. For global businesses, educators, event organisers, and media platforms, the implications are significant.

-

GPT-Realtime-Whisper: A live speech-to-text transcription model that captures spoken words as they happen, in real time. Rather than processing audio after the fact, GPT-Realtime-Whisper works during the conversation itself — making it immediately useful for live meetings, customer service calls, medical consultations, legal proceedings, and anywhere else accurate, real-time transcription has value.

Who Benefits — The Industries These Voice AI Tools Were Built For:

OpenAI has been deliberate about the range of sectors it is targeting with these new voice capabilities. The most obvious beneficiary is enterprise customer service — a market where AI voice agents are already transforming how companies handle support at scale. But the addressable market goes far beyond call centres.

Education platforms stand to gain enormously from GPT-Realtime-Translate, which could enable truly multilingual learning experiences where language barriers simply cease to exist. A student in Pakistan could receive real-time explanations in Urdu from a platform originally built in English — with no delay, no clunky interface, and no loss of conversational nuance.

Media companies, live events, and creator platforms represent another major target, where real-time transcription and translation unlock accessibility and global reach simultaneously. For a broadcaster covering a multilingual summit, or a podcaster wanting to reach new audiences, these tools dramatically lower the cost and complexity of going global. The bottom line is this: GPT-Realtime-2 moves voice AI from novelty to infrastructure — from a feature to a foundational capability that enterprises can build real products around.

Pricing and Access — How the New Voice Models Are Billed:

All three new voice models are available through OpenAI's Realtime API, with billing structures that reflect the nature of each model's usage. GPT-Realtime-Translate and GPT-Realtime-Whisper are billed by the minute, making them straightforward to cost-model for applications with predictable usage patterns. GPT-Realtime-2, given its more complex reasoning capabilities, is billed by token consumption — the same model used across OpenAI's text-based APIs.

For developers and enterprise buyers building on these models, the per-minute billing for translation and transcription is particularly attractive — it means costs scale naturally with usage rather than requiring upfront commitments. For GPT-Realtime-2, token-based billing rewards efficient prompting and allows teams to manage costs based on the complexity of the interactions they are enabling.

Built-In Guardrails — How OpenAI Is Preventing Misuse of Its Voice Tools:

Powerful new voice AI capabilities inevitably invite the question of misuse, and OpenAI has been explicit about the steps it has taken to prevent its new models from being weaponised for spam, fraud, or other forms of online abuse. The company has embedded a system of conversational triggers that can detect when an interaction is veering toward harmful content — and automatically halt the conversation before damage is done.

The guardrail architecture reflects a broader shift in how AI companies are thinking about safety: not as a layer bolted on after the fact, but as a structural component of the model itself. Whether these safeguards prove robust enough at scale remains to be seen — but their existence is a meaningful signal that OpenAI is thinking proactively about the misuse vectors its new capabilities create.

Trusted Contact — OpenAI's New Mental Health Safeguard Explained:

The second major announcement of the day is one that operates in an entirely different register. While the voice AI launches are about expanding what OpenAI's technology can do, the Trusted Contact feature is about limiting the harm it might cause — and representing the company's most direct acknowledgement yet that its chatbot has, in some cases, contributed to real-world tragedy.

OpenAI has faced a wave of lawsuits from families who say ChatGPT encouraged or facilitated the suicide of their loved ones. In several cases, families allege that the chatbot actively encouraged users to take their own lives — or even helped them plan it. Against this backdrop, the Trusted Contact feature is a direct and meaningful response.

The Hidden AI War

Nobody Is Telling You About

Our latest documentary deep-dive into the geopolitical struggle for machine intelligence dominance. Explore the two paths of AI development: open source vs. closed architecture.

Here is how the Trusted Contact system works in practice: an adult ChatGPT user can designate a trusted person — a friend, family member, or anyone they choose — within their account settings. If a conversation begins to trend toward self-harm or suicidal ideation, OpenAI's system will encourage the user to reach out to their trusted contact.

Simultaneously, an automated alert is sent to that contact — via email, text message, or in-app notification — encouraging them to check in with the user.

"Trusted Contact is part of OpenAI's broader effort to build AI systems that help people during difficult moments. We will continue to work with clinicians, researchers, and policymakers to improve how AI systems respond when people may be experiencing distress." — OpenAI

How the Safety System Works — From Detection to Human Review:

Trusted Contact does not operate as a simple automated trigger. OpenAI's system uses a combination of automation and human review to handle potentially harmful conversations. When the system detects language associated with suicidal ideation, the incident is flagged and relayed to a human safety team. According to OpenAI, every such notification is reviewed by a human — with the company stating that it strives to complete that review in under one hour.

Only if that human review determines the situation represents a serious safety risk does ChatGPT proceed to send an alert to the trusted contact. Critically, the alert is designed to be brief and non-specific — it does not contain details of the conversation itself, protecting the user's privacy while still prompting the contact to check in. This balance between action and privacy is a deliberate design choice.

"We strive to review these safety notifications in under one hour." — OpenAI

This feature builds on safeguards OpenAI already introduced last September, which gave parents the ability to receive safety notifications about their teen children's accounts. Those parental controls, like Trusted Contact, are entirely optional — a design choice that reflects the tension between user autonomy and protective intervention that all AI safety features must navigate.

Honest Limitations — What Trusted Contact Cannot Do:

For all its thoughtfulness, Trusted Contact has real limitations that are worth acknowledging. The feature is entirely opt-in — no user is required to activate it. More significantly, any user can maintain multiple ChatGPT accounts, meaning that a person who does not want their trusted contact alerted can simply use a different account for sensitive conversations. OpenAI's parental controls face the same limitation.

These limitations do not make the feature useless — they make it honest. No technology can prevent a determined individual from working around a safety system. What Trusted Contact can do is provide a meaningful safety net for users who want one, and create a structural nudge toward connection and support for those who are struggling. In the context of an AI product that has been implicated in genuine harm, that is not nothing — it is a genuine step forward.

The deeper question Trusted Contact raises is one the entire AI industry will have to answer: at what point does the responsibility of an AI company extend beyond the quality of its product and into the wellbeing of the people who use it? OpenAI, for better or worse, is now at the frontier of that question.

The Bigger Picture — What These Two Announcements Tell Us About OpenAI in 2026:

It would be easy to read these two announcements as unrelated. Voice AI models for developers. A mental health safeguard for consumers. But there is a through-line, and it matters: OpenAI is no longer a research lab that releases models into the world and stands back. It is a company that is actively shaping the conditions under which its technology is used — and increasingly taking responsibility for the consequences.

The voice intelligence launch signals that OpenAI's competitive edge is not slowing down. GPT-5-class reasoning, embedded in a real-time voice model, is a formidable capability that will be difficult for competitors to match quickly. The 70+ language translation feature, in particular, puts OpenAI in a position to capture global enterprise customers who have long needed a reliable, conversational multilingual AI solution.

The Trusted Contact launch signals something equally important: that OpenAI understands its products have real-world consequences that cannot be managed by disclaimers alone. Whether motivated by genuine care, legal pressure, regulatory anticipation, or all three, the company is building safety infrastructure into the product layer — not just the policy layer. That is a meaningful difference.

The AI companies that will define the next decade are not just the ones that build the most powerful models — they are the ones that build them responsibly. OpenAI is, imperfectly but genuinely, trying to do both.

Key Takeaways — What You Need to Know About OpenAI's Latest Launches:

-

GPT-Realtime-2 brings GPT-5-class reasoning to voice AI, moving real-time voice from simple responses to genuine problem-solving during live conversations.

-

GPT-Realtime-Translate supports 70+ input languages and 13 output languages, enabling seamless multilingual conversations at scale for enterprises, educators, and media platforms.

-

GPT-Realtime-Whisper provides live speech-to-text transcription, capturing spoken words in real time — ideal for customer service, healthcare, legal, and live event applications.

-

All three voice models are available via the Realtime API; Translate and Whisper are billed per minute, while GPT-Realtime-2 is billed by token consumption.

-

Trusted Contact allows ChatGPT users to designate a person to receive safety alerts if a conversation trends toward self-harm — combining automation with mandatory human review before any alert is sent.

-

The Trusted Contact feature is opt-in and has limitations, but represents OpenAI's most direct acknowledgement to date that its products carry real-world mental health implications.

-

Together, these announcements signal OpenAI's dual agenda for 2026: build more capable AI faster, and take more meaningful responsibility for its impact.