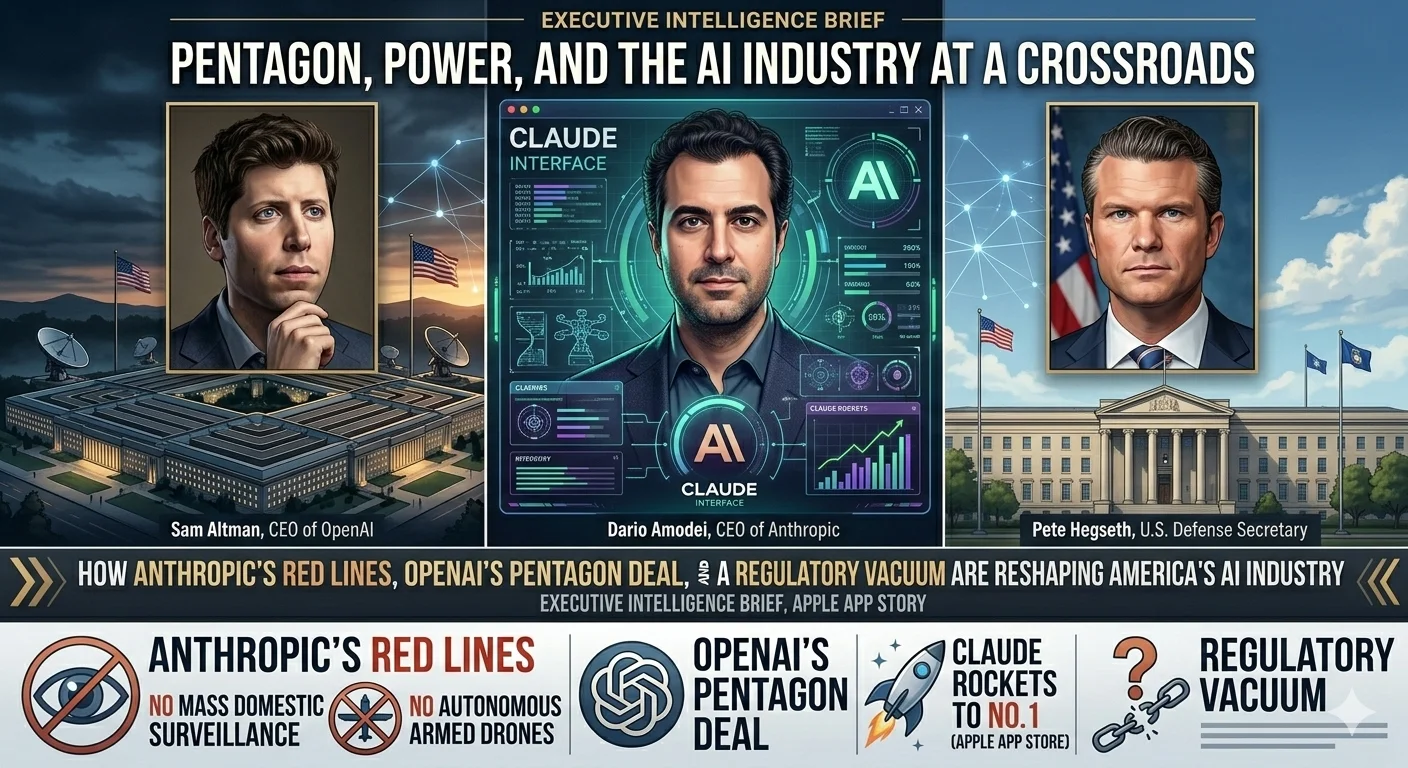

Pentagon, Power, and the AI Industry at a Crossroads:

How Anthropic's Red Lines, OpenAI's Pentagon Deal, and a Regulatory Vacuum Are Reshaping America's AI Industry:

A Week That Changed Everything:

In a single extraordinary week, the American AI industry was forced to confront questions it had long managed to avoid. After negotiations between Anthropic and the Pentagon collapsed, President Trump directed all federal agencies to immediately stop using Anthropic technology, and Defense Secretary Pete Hegseth invoked national security law to formally blacklist the company.

The reason was deceptively simple: CEO Dario Amodei refused to allow Anthropic's models to be used for mass domestic surveillance of U.S. citizens or for fully autonomous armed drones capable of selecting and killing targets without human input.

OpenAI moved fast, announcing its own Pentagon deal within hours — a decision CEO Sam Altman described as "definitely rushed" and one that generated instant, fierce backlash. Meanwhile, Anthropic's Claude rocketed to the No. 1 spot in Apple's U.S. App Store, overtaking ChatGPT on Saturday evening — a striking sign that the public understood something important was at stake.

This briefing combines reporting and analysis from across the week's defining events — the Anthropic–Pentagon dispute, OpenAI's controversial deal, the AI safety regulatory vacuum, and the broader implications for enterprise AI strategy — into a single, comprehensive account of what happened, why it matters, and what every business leader needs to understand going forward.

The Dispute That Started It All — Anthropic's Red Lines and the Pentagon:

The Collapse of Negotiations: What Anthropic Refused to Do:

Anthropic's position was, by the company's own framing, entirely consistent with its founding identity as a safety-first AI lab. The company drew two firm red lines in negotiations with the Department of Defense: its models could not be used for mass domestic surveillance of American citizens, and they could not be integrated into fully autonomous weapons systems capable of selecting and engaging targets without human oversight.

For Anthropic, these were not negotiating positions — they were non-negotiable constraints baked into the company's core mission.

The consequences arrived swiftly and with extraordinary force. President Trump directed every federal agency to immediately cease all use of Anthropic technology. Defense Secretary Pete Hegseth followed by designating Anthropic a supply-chain risk under national security law — a designation potentially barring the company from working not just with the DoD directly, but with its vast network of partners, contractors, and suppliers. Anthropic stands to lose a contract worth up to $200 million and has since announced it will challenge the Pentagon's decision in court.

The Trap Anthropic Built for Itself: A Decade of Self-Regulation Catches Up:

MIT physicist and AI safety advocate Max Tegmark offered an unsparing diagnosis of the moment. Anthropic, he argued, like its rivals, has spent years sowing the seeds of its own predicament. His analysis doesn't begin with the Pentagon — it begins with a decision made across the industry years earlier: the choice to resist binding regulation in favor of self-governance.

"All of these companies, especially OpenAI and Google DeepMind but to some extent also Anthropic, have persistently lobbied against regulation of AI. The result: the United States currently has less regulation on AI systems than on sandwiches." — Max Tegmark

The pattern of broken promises makes for uncomfortable reading. Google dropped its famous "Don't be evil" commitment, then dropped a longer pledge not to use AI for harm. OpenAI removed the word "safety" from its mission statement. xAI shut down its entire safety team. And earlier the same week, Anthropic itself dropped its core safety commitment — the promise not to release increasingly powerful AI systems until the company was confident they wouldn't cause harm.

The industry's resistance to binding rules has produced exactly the crisis its practitioners claimed self-governance would prevent. Without enforceable law, there is nothing to stop a government administration from simply demanding what these companies promised not to build, and nothing to protect a company that refuses.

Tegmark put it bluntly: "There is no law right now against building AI to kill Americans, so the government can just suddenly ask for it. If the companies themselves had earlier come out and said, 'We want this law,' they wouldn't be in this pickle."

OpenAI's Pentagon Deal — Rushed, Contested, and Consequential:

The Deal That Divided the Industry: OpenAI's Agreement with the DoD:

Hours after Anthropic's blacklisting, OpenAI announced it had reached its own agreement with the Department of Defense for models to be deployed in classified environments. The speed of the announcement was notable — and, by CEO Sam Altman's own admission, entirely deliberate. "We really wanted to de-escalate things," Altman said, "and we thought the deal on offer was good."

The strategic logic was transparent, if not universally accepted. By stepping in where Anthropic had stepped back, OpenAI hoped to defuse what it saw as a dangerous standoff between the AI industry and the federal government. Altman acknowledged the downside risk with unusual candor: "If we are right and this does lead to a de-escalation between the DoD and the industry, we will look like geniuses. If not, we will continue to be characterized as rushed and uncareful."

The immediate market response was unambiguous. Anthropic's Claude overtook OpenAI's ChatGPT to claim the No. 1 spot in Apple's U.S. App Store on Saturday evening — a striking signal that a meaningful segment of the public interpreted OpenAI's deal as a betrayal of principle, and Anthropic's stand as something worth rewarding.

What the Agreement Actually Says: Three Red Lines, One Contested Interpretation:

Under pressure to explain itself, OpenAI published a detailed blog post outlining the terms and safeguards of its agreement. The post identified three areas where OpenAI's models explicitly cannot be used: mass domestic surveillance, autonomous weapon systems, and high-stakes automated decisions such as "social credit" systems.

The company drew a pointed contrast with competitors it said had "reduced or removed their safety guardrails." OpenAI argued its approach was superior because it was multi-layered: "We retain full discretion over our safety stack, we deploy via cloud, cleared OpenAI personnel are in the loop, and we have strong contractual protections."

Not everyone accepted this framing. Techdirt's Mike Masnick argued that the contract language "absolutely does allow for domestic surveillance" because it specifies that data collection will comply with Executive Order 12333 — the order through which the NSA conducts surveillance of U.S. persons by intercepting communications outside U.S. borders.

OpenAI's head of national security partnerships, Katrina Mulligan, pushed back, arguing that cloud-only deployment prevents direct integration with weapons systems or operational hardware. "Deployment architecture matters more than contract language," she said. "By limiting our deployment to cloud API, we can ensure that our models cannot be integrated directly into weapons systems, sensors, or other operational hardware."

The Regulatory Vacuum — How the Industry Created Its Own Crisis:

The China Argument: Compelling, But Not Conclusive:

The AI industry's standard defense against calls for binding regulation has always been the same: China. If American companies restrain themselves, the argument goes, Beijing will simply accelerate — and America will lose the race. Tegmark addressed this directly, and his response was more nuanced than the familiar counter-arguments.

China is, in fact, moving to restrict its own AI industry in ways American companies have resisted. "China is in the process of banning AI girlfriends outright," Tegmark noted — not because of American pressure, but because Chinese authorities have concluded that unconstrained AI is making Chinese youth weaker. The logic applies symmetrically: uncontrollable superintelligence is a national security threat to every government, including the American one currently funding its development.

How Close Are We to the Systems Everyone Is Arguing About?

Tegmark's assessment of the timeline is sobering, drawn from co-authored research with Yoshua Bengio, Dan Hendrycks, and other leading AI researchers. Six years ago, most AI experts predicted human-level language and reasoning were decades away — perhaps 2040 or 2050. Those predictions were wrong. According to Tegmark's research framework, GPT-4 reached 27% of the way to AGI. GPT-5 reached 57%. "Going from 27% to 57% that quickly suggests it might not be that long."

The practical implications for the workforce are already arriving. "Even if it takes four years," Tegmark told his MIT students, "that means when you graduate, you might not be able to get any jobs anymore." Last year, AI systems achieved gold-medal scores at the International Mathematical Olympiad, one of the most demanding intellectual benchmarks humans have ever devised.

Is There a Version Where This Ends Well?

Tegmark's answer, perhaps surprisingly, is yes — and it is grounded in a practical policy proposal rather than abstract optimism. "If we just start treating AI companies like any other companies — drop the corporate amnesty — they would clearly have to do something like a clinical trial before they released something this powerful, and demonstrate to independent experts that they know how to control it. Then we get a golden age with all the good stuff from AI, without the existential angst."

The precedent exists across every other high-stakes industry. Food safety regulation, pharmaceutical clinical trials, aviation safety standards — all represent binding frameworks that were resisted by industries until they were enacted, and which most now acknowledge as essential. The AI industry's resistance to equivalent frameworks is not just bad policy. It is a form of self-sabotage that has now produced the very crisis its practitioners claimed self-governance would prevent.

Claude Rises to No. 1 — What the Market Response Reveals:

The App Store Verdict: How Consumers Responded to the Pentagon Dispute:

While the debate raged across boardrooms, newsrooms, and government corridors, consumers delivered their own verdict — and it was unambiguous. Anthropic's Claude climbed from outside the top 100 in the U.S. App Store at the end of January, to inside the top 20 for most of February, to No. 1 on Saturday — overtaking OpenAI's ChatGPT as the most downloaded free app in America. As of Sunday morning, it still held the top position.

The internal metrics behind the rankings tell an even more striking story. A company spokesperson confirmed that daily signups broke the all-time record every single day of the week in question. Free users have increased more than 60% since January. Paid subscribers have more than doubled over the course of the year. Whatever the commercial calculus of Anthropic's negotiating position, its public standing was transformed by the dispute.

The Industry Moment: Who Stood With Anthropic, and Who Stayed Quiet:

The crisis created a moment of involuntary transparency for every major AI laboratory. Sam Altman publicly stated the night before OpenAI's deal that he stood with Anthropic and shared the same red lines — a statement he made, and then complicated, within the span of 24 hours. Google, as of the moment of Tegmark's interview, had said nothing. xAI had said nothing.

"Basically, there's this moment where everybody has to show their true colors." — Max Tegmark

For companies that have spent years building elaborate public narratives around safety, responsibility, and trustworthy AI, the silence was its own kind of answer. The AI safety regulatory vacuum that the industry created — by successfully lobbying against binding rules — had produced exactly the kind of crisis its architects claimed self-governance would prevent.

Strategic Implications for Business Leaders — What Executives Must Do Now:

The events of this week are not a news story that will recede into background noise. They represent a structural shift in the relationship between AI companies, the U.S. government, and the enterprises that depend on AI infrastructure.

The following actions are no longer optional for any organization with meaningful AI exposure:

• Audit your AI vendor dependencies immediately: Anthropic's blacklisting demonstrated that a key AI supplier can be designated a national security threat overnight. Every organization needs a clear map of which models, APIs, and platforms are critical to operations.

• Reassess government and defense sector exposure: If your business operates in or adjacent to the defense supply chain, the OpenAI–Anthropic split has created new risk categories that require legal and compliance review — not next quarter, but now.

• Monitor the regulatory trajectory: The U.S. currently has no binding AI regulation. That vacuum will not persist indefinitely. Organizations that begin building compliant practices now — before regulation arrives — will be far better positioned than those that wait.

• Evaluate your AI providers' red lines: Procurement teams should now be asking every AI vendor: Where are your lines, and are they enforceable? The answer matters for reputational as well as operational risk.

• Watch the autonomous systems boundary: The debate over autonomous weapons and surveillance is the front line of a much larger question about where human oversight ends and AI autonomy begins. Organizations deploying AI in high-stakes decisions — hiring, lending, medical, legal — should be paying close attention.

The Anthropic–Pentagon dispute is, at its core, a story about governance arriving too late. The AI industry built extraordinary power without building the frameworks to manage it. Now the consequences — legal, commercial, reputational, and geopolitical — are compounding simultaneously. The organizations best prepared to navigate what comes next will be those that stopped waiting for the rules to be written for them, and started writing their own.

© 2026 Executive Intelligence Brief. For strategic and informational purposes only. Not financial or investment advice.