Google Cloud's Vertex AI: Dominating the Three Frontiers of Enterprise Model Capability

In the rapidly evolving landscape of 2026, the race for artificial intelligence dominance has shifted from a singular focus on "intelligence" to a complex balancing act across three distinct frontiers. As Google Cloud's Vice President of Product for Vertex AI and Agents, Michael Gerstenhaber—a former Anthropic executive—is steering the company's unified platform to address these challenges head-on.

Vertex AI has become a cornerstone of the global cloud AI market, which is valued at $133.42 billion in 2026 and projected to reach nearly $1.73 trillion by 2033. With over 4 million developers building on Gemini and platform usage surging 20x in the last year, Google is positioning its vertically integrated stack as the definitive answer for enterprise-scale AI.

The Three Frontiers of Model Capability:

Gerstenhaber identifies a new framework for evaluating AI: it is no longer just about who has the "smartest" model, but who can optimize across intelligence, latency, and cost.

1. The Intelligence Frontier: Raw Reasoning Power:

The first frontier is the one most familiar to the public: raw cognitive ability. Models like Gemini 3.1 Pro are designed for tasks where quality is the only metric that matters.

- Use Case: Software engineering and complex data analysis.
- The Logic: If a model is writing critical production code, developers are willing to wait. Whether it takes 30 seconds or 45 minutes, the priority is a "zero-defect" output that reduces long-term maintenance costs.

2. The Latency Frontier: The "Boredom" Threshold:

The second frontier is defined by the clock. In real-time applications, a perfect answer delivered too late is a failure.

- Use Case: Customer support, real-time translation, and retail agents.
- The Logic: If an agent takes 45 minutes to apply a refund policy while a customer is on the phone, the customer will hang up. Enterprises must find the "most intelligent" model that fits within a specific latency budget, often measured in milliseconds.
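The selection rule described above can be sketched as a filter-then-maximize step. This is a minimal sketch under stated assumptions, not a Vertex AI API: the `ModelProfile` type, the `pick_model` helper, the model names, the quality scores, and the latency figures are all hypothetical placeholders that you would replace with your own measurements.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class ModelProfile:
    name: str
    quality: float         # any benchmark score you trust; higher is better
    p95_latency_ms: float  # measured on your own traffic


def pick_model(models: Sequence[ModelProfile], budget_ms: float) -> Optional[ModelProfile]:
    """Return the highest-quality model whose p95 latency fits the budget."""
    eligible = [m for m in models if m.p95_latency_ms <= budget_ms]
    if not eligible:
        return None  # nothing fits; the caller decides how to degrade
    return max(eligible, key=lambda m: m.quality)


# Hypothetical numbers for illustration only.
CANDIDATES = [
    ModelProfile("large-model", quality=0.90, p95_latency_ms=9000.0),
    ModelProfile("medium-model", quality=0.75, p95_latency_ms=1200.0),
    ModelProfile("small-model", quality=0.60, p95_latency_ms=300.0),
]

choice = pick_model(CANDIDATES, budget_ms=1500.0)
print(choice.name if choice else "no model fits the budget")
```

With a 1,500 ms budget the sketch picks `medium-model`: the larger model scores higher but misses the budget, which is the trade-off the latency frontier describes.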

3. The Cost Frontier: Scalability at the Edge:

The final frontier is economic. For platforms like Reddit or Meta that need to moderate millions of posts daily, the unpredictability of human behavior creates a massive financial risk.

- Use Case: Content moderation and global brand safety.
- The Logic: These companies cannot risk an "enterprise-ending" bill due to a sudden spike in traffic. They require models that deliver the highest possible intelligence at a fixed, predictable cost per item, so that spend stays bounded even at near-unlimited volume.
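One way to make the "no surprise bill" requirement concrete is a hard daily spend cap in front of the model call. The sketch below is an assumption-laden illustration, not a Google Cloud feature: the `DailyBudget` class, the cap, and the per-post cost are hypothetical, and amounts are held as integer micro-dollars to avoid floating-point drift.

```python
class DailyBudget:
    """Tracks spend against a fixed daily cap, in integer micro-USD."""

    def __init__(self, cap_micro_usd: int) -> None:
        self.cap_micro_usd = cap_micro_usd
        self.spent_micro_usd = 0

    def try_spend(self, cost_micro_usd: int) -> bool:
        """Record the cost and return True if it fits under the cap, else False."""
        if self.spent_micro_usd + cost_micro_usd > self.cap_micro_usd:
            return False
        self.spent_micro_usd += cost_micro_usd
        return True


# Hypothetical figures: a $1.00 daily cap and $0.01 per moderated post.
budget = DailyBudget(cap_micro_usd=1_000_000)

# A traffic spike sends 150 posts; only those under the cap reach the model.
results = [budget.try_spend(10_000) for _ in range(150)]
print(sum(results), "posts sent to the model;", results.count(False), "deferred")
```

Posts that do not fit under the cap would go to a cheaper fallback or a review queue in a real system; the point is that a spike changes how many posts are deferred, not the size of the bill.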

Why Agentic AI Adoption is Still a "Trailing Indicator":

Despite the technical breakthroughs of 2025 and 2026, many wonder why autonomous "agentic" systems aren't yet ubiquitous in every industry. Gerstenhaber notes that production is always a trailing indicator of capability.

The primary bottleneck is not the models themselves, but the missing infrastructure. Enterprises currently lack standardized patterns for:

- Auditing: Verifying exactly what an agent did and why.
- Authorization: Safely delegating data access to an autonomous system.
- Governance: Ensuring agents comply with strict industry regulations like those in the BFSI (Banking, Financial Services, and Insurance) sector, which currently holds a 25.49% share of the cloud AI market.
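The auditing and authorization patterns above can be illustrated with a small wrapper around each tool call. This is a sketch under stated assumptions rather than a Vertex AI interface: `run_tool`, `AUDIT_LOG`, the scope strings, and the `lookup_order` tool are hypothetical names, and the in-memory list stands in for an append-only store.

```python
import json
from datetime import datetime, timezone
from typing import Any, Callable, Dict, List, Set

AUDIT_LOG: List[str] = []  # stand-in for an append-only audit store


def run_tool(agent_id: str, granted_scopes: Set[str], required_scope: str,
             tool: Callable[..., Any], **kwargs: Any) -> Any:
    """Check the agent's scope, log the decision, then run the tool if allowed."""
    allowed = required_scope in granted_scopes
    entry: Dict[str, Any] = {
        "time": datetime.now(timezone.utc).isoformat(),
        "agent": agent_id,
        "tool": tool.__name__,
        "scope": required_scope,
        "args": kwargs,
        "allowed": allowed,
    }
    # Denied attempts are logged too, so the trail shows what was tried.
    AUDIT_LOG.append(json.dumps(entry, default=str))
    if not allowed:
        raise PermissionError(f"{agent_id} lacks scope {required_scope!r}")
    return tool(**kwargs)


def lookup_order(order_id: str) -> str:
    """Hypothetical read-only tool."""
    return f"order {order_id}: shipped"


result = run_tool("support-agent", {"orders:read"}, "orders:read",
                  lookup_order, order_id="A1")
print(result)
print(len(AUDIT_LOG), "audit entry recorded")
```

Because every call passes through one function, the log answers "what did the agent do" and the scope check answers "was it permitted"; governance rules can then be expressed as constraints on which scopes an agent may hold.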

The Power of Vertical Integration:

One of the key reasons Gerstenhaber made the move from Anthropic to Google was the company's unique vertical integration. In an era where hardware is as important as software, Google's control over the entire stack is a decisive advantage:

- Infrastructure: Building data centers and even investing in power plants.
- Custom Silicon: Developing proprietary TPUs (Tensor Processing Units) to optimize inference.

- Vertex AI Platform: A unified engine that provides APIs for memory, interleaved code writing, and governance.

By 2026, an estimated 80% of companies are expected to use GenAI APIs in production. For these organizations, Google Cloud isn't just providing a model; it's providing the entire "Agentic Layer" required to move from experimental demos to mission-critical operations.

Strategic Takeaways for 2026:

- Match Tasks to Frontiers: Evaluate your AI projects based on whether they prioritize quality, speed, or cost-at-scale.
- Focus on Infrastructure: Widespread adoption requires auditable logs and explicit data permissions.
- Scale Gradually: Start with narrow, high-trust scopes and widen the aperture as governance patterns mature.

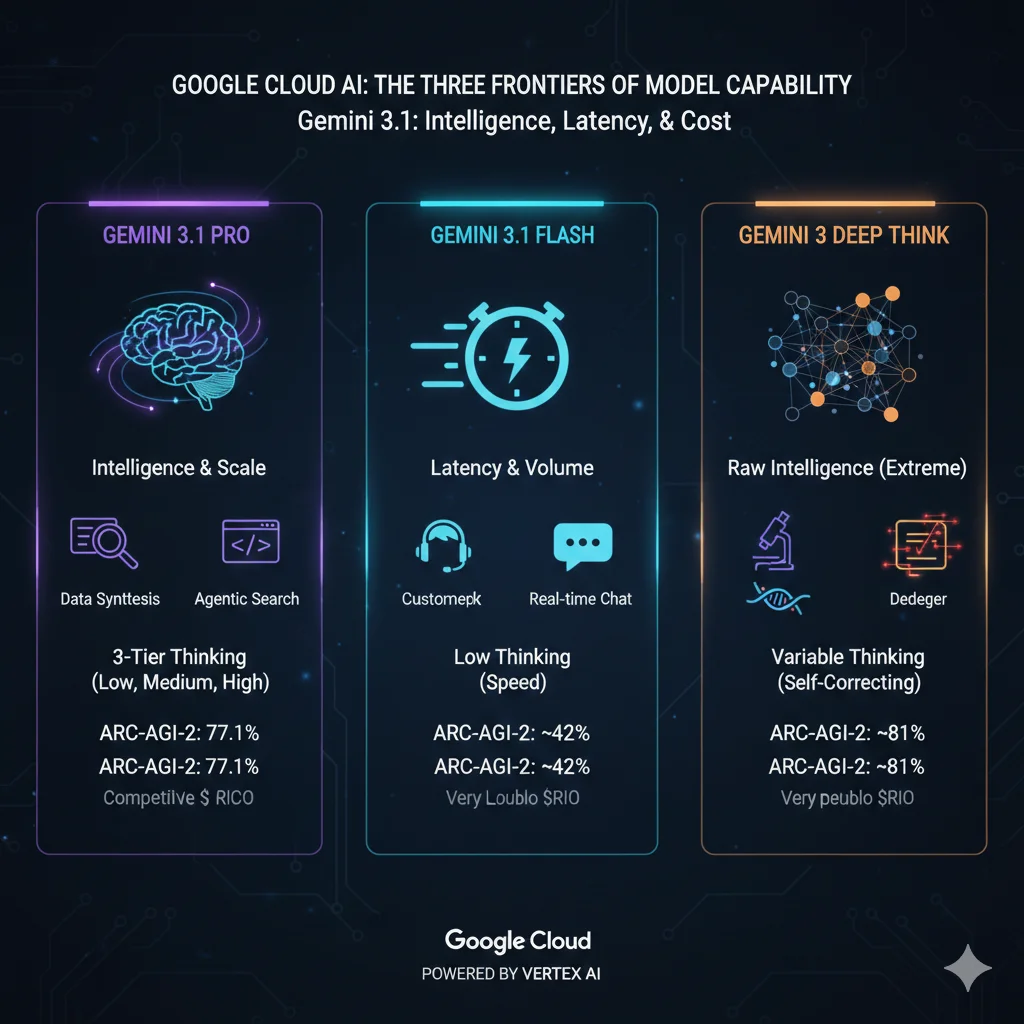

Building on Michael Gerstenhaber's Framework: The Gemini 3.1 Family:

The Gemini 3.1 family applies the three frontiers of intelligence, latency, and cost to offer a more granular way to deploy AI. With the release of Gemini 3.1 Pro in February 2026, Google has moved from a binary "fast vs. slow" system to a three-tier "gear" system.

The following table compares the primary models in the Gemini 3.1 family across these three frontiers.

Comparison of Gemini 3.1 Model Variants (2026):

| Feature | Gemini 3.1 Pro | Gemini 3 Flash | Gemini 3 Deep Think |
|---|---|---|---|
| Primary Frontier | Intelligence & Scale | Latency & Volume | Raw Intelligence (Extreme) |
| Best Use Case | Complex reasoning, agentic coding, data synthesis | Customer support, real-time chat, high-frequency tasks | Scientific research, complex multi-file debugging |
| "Thinking" Tier | 3-Tier (Low, Medium, High) | Low / Optimized for Speed | Variable (Self-correcting depth) |
| ARC-AGI-2 Score | 77.1% (Industry leading) | ~42% | ~81% |
| Context Window | 1 Million Tokens | 1 Million Tokens | 1 Million Tokens |
| Output Capacity | 64K Tokens | 32K Tokens | 64K Tokens |
| Input Price (per 1M tokens) | $2.00 | $0.50 | $5.00+ (Premium pricing) |
| Output Price (per 1M tokens) | $12.00 | $3.00 | $25.00+ |
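To turn the per-million prices in the table into a per-request figure, multiply each token count by its price and divide by one million. The sketch below uses the Pro and Flash prices exactly as listed above; the `request_cost_usd` helper and the request shape (10,000 input tokens, 1,000 output tokens) are assumptions for illustration. Deep Think is left out because the table gives only a lower bound for its price.

```python
from typing import Dict, Tuple

# (input USD, output USD) per 1M tokens, taken from the comparison table.
PRICES_PER_MILLION: Dict[str, Tuple[float, float]] = {
    "Gemini 3.1 Pro": (2.00, 12.00),
    "Gemini 3 Flash": (0.50, 3.00),
}


def request_cost_usd(model: str, input_tokens: int, output_tokens: int) -> float:
    """Cost of one request: tokens times the per-million price, per direction."""
    price_in, price_out = PRICES_PER_MILLION[model]
    return (input_tokens * price_in + output_tokens * price_out) / 1_000_000


# Assumed request shape: 10,000 input tokens and 1,000 output tokens.
for model in PRICES_PER_MILLION:
    print(f"{model}: ${request_cost_usd(model, 10_000, 1_000):.4f}")
```

For this request shape the listed prices work out to about $0.032 on Pro and $0.008 on Flash. The 4x gap holds for any token mix, because Pro is listed at four times the Flash price for both input and output.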

Key Innovations in the 3.1 Family:

1. The Three-Tier Thinking System:

Gemini 3.1 Pro introduces the ability for developers to choose their "reasoning budget." Instead of forcing a choice between a shallow fast answer or a deep slow one, you can now toggle between three levels:

- Low: Optimized for classification and simple lookups (Minimal latency).
- Medium: Balanced for code review and summarization (Standard latency).
- High: Deep reasoning for multi-step logic and abstract problem solving (Maximum latency).
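The three levels above lend themselves to a simple routing table in application code. The following is a minimal sketch, assuming you classify tasks yourself before calling the model: the `ThinkingLevel` enum, the task labels, and `pick_thinking_level` are hypothetical names, and how the chosen level is passed to the model is deliberately not shown, since that depends on the SDK you use.

```python
from enum import Enum
from typing import Dict


class ThinkingLevel(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


# Task labels follow the examples given for each level; extend as needed.
TASK_TO_LEVEL: Dict[str, ThinkingLevel] = {
    "classification": ThinkingLevel.LOW,
    "lookup": ThinkingLevel.LOW,
    "code_review": ThinkingLevel.MEDIUM,
    "summarization": ThinkingLevel.MEDIUM,
    "multi_step_logic": ThinkingLevel.HIGH,
    "abstract_problem_solving": ThinkingLevel.HIGH,
}


def pick_thinking_level(task_type: str,
                        default: ThinkingLevel = ThinkingLevel.MEDIUM) -> ThinkingLevel:
    """Map a task label to a reasoning level, falling back to a default."""
    return TASK_TO_LEVEL.get(task_type, default)


for task in ("classification", "code_review", "multi_step_logic", "unknown_task"):
    print(task, "->", pick_thinking_level(task).value)
```

Defaulting unknown tasks to the middle level is a design choice: it avoids paying maximum latency for unclassified work without dropping to the shallowest setting.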

2. Advanced Native Capabilities:

Beyond text and reasoning, Gemini 3.1 has been optimized for the "developer as a creator" era:

- Native SVG Generation: Unlike models that just write code, 3.1 Pro can generate and animate website-ready SVGs directly, ensuring they stay crisp at any scale.
- Vibe Coding: The model is tuned to understand "design intent," meaning it doesn't just write syntax; it interprets the "look and feel" of a project description.
- Agentic Search (BrowseComp): Scored at 85.9%, the model can autonomously navigate the web to synthesize data into a single, cohesive view rather than just providing links.

3. Economic Advantage (The Cost Frontier):

One of the most disruptive aspects of 3.1 Pro is that Google maintained the $2/$12 pricing of its predecessor despite the 2x increase in reasoning performance.

Independent analysis shows that 3.1 Pro achieves similar or higher intelligence scores than Claude Opus 4.6 while being roughly 7.5x cheaper to run.