Chen Mei — February 6, 2026

The air in San Francisco just got a lot thinner. Within minutes of Anthropic’s monumental Opus 4.6 drop, OpenAI fired back with GPT-5.3 Codex. We are no longer in a model race; we are in a regime of total cognitive warfare. The release marks a definitive shift in how the labs apply the "Scaling Laws": away from raw parameter count and toward a more lethal combination of architectural efficiency and autonomous reasoning.

But while the headlines focus on the head-to-head battle between the two labs, the real story lies in a single, chilling sentence from the OpenAI system card: "GPT-5.3 Codex was instrumental in creating itself."

This is the birth of the Autonomous Self-Improvement Loop. We aren't just prompting a model to write code anymore; we are watching a previous version of an agent debug its own successor's training, manage its own deployment, and diagnose its own evaluations. According to internal reports from the "Project Tiamat" team, Codex 5.3 was used to generate millions of synthetic "failure cases" that the base model then had to reason through during the fine-tuning phase. This recursive learning process allows the model to "pre-anticipate" developer errors before they are even typed. The implications for the ROI of AI and the future of AI Strategy are staggering. We are entering an era where the software doesn't just serve its master; it understands the architectural intent of its own existence.
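The process described here resembles a familiar technique, hard-example mining: generate many candidate tasks, keep only the ones the current model fails, and fine-tune on those. The toy sketch below illustrates that loop under our own assumptions. The lookup-table "model", `mine_failures`, and `self_improvement_rounds` are hypothetical stand-ins, not OpenAI's pipeline.

```python
from typing import Callable, Dict, List, Tuple

# A (prompt, expected_answer) pair: a stand-in for a real coding task plus its test.
Case = Tuple[str, str]

def mine_failures(cases: List[Case], model: Callable[[str], str]) -> List[Case]:
    """Keep only the synthetic cases the current model gets wrong."""
    return [(prompt, expected) for prompt, expected in cases if model(prompt) != expected]

def self_improvement_rounds(cases: List[Case], rounds: int) -> List[int]:
    """Toy loop: each round, mine failures, then 'fine-tune' on them.

    The model is a hypothetical lookup table, so 'fine-tuning' simply memorizes
    the failure cases. Returns how many failures were found in each round.
    """
    memory: Dict[str, str] = {}

    def model(prompt: str) -> str:
        return memory.get(prompt, "")

    history: List[int] = []
    for _ in range(rounds):
        failures = mine_failures(cases, model)
        history.append(len(failures))
        for prompt, expected in failures:
            memory[prompt] = expected  # stand-in for a gradient update
    return history

print(self_improvement_rounds([("a", "1"), ("b", "2"), ("c", "3")], rounds=3))
# [3, 0, 0]
```

A real model would generalize from the mined cases rather than memorize them, which is why each round needs freshly generated candidates to keep finding new weaknesses.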

I. The Efficiency Pivot: Speed through Token Scarcity

The most common complaint about the original Codex and even the 5.2 series was the "brutal" latency. In a world where developers are "vibe coding" at the speed of thought, a model that takes minutes to reason is a bottleneck.

GPT-5.3 Codex solves this, but not through raw hardware acceleration. Instead, OpenAI has achieved a 25% speed increase by teaching the model to solve complex problems with far fewer tokens. This is the "Occam’s Razor" of LLM engineering: why generate a thousand tokens of boilerplate when a hundred tokens of precise reasoning will suffice?

Decoding the SWE-bench Pro Inflection

When we look at SWE-bench Pro, the industry standard for real-world software engineering, the data shows a radical shift in efficiency.

SWE-bench Pro: Token Efficiency vs. Accuracy

- Codex 5.2: 91k tokens, 58% accuracy

- Codex 5.3: 43k tokens, 64% accuracy

Note the divergence: Codex 5.3 achieved a higher accuracy score while using less than half the tokens of its predecessor (43k vs. 91k). For the enterprise, this is an inflection point for AI ROI. If you are running an agentic swarm that makes 10,000 calls a day, the shift from 5.2 to 5.3 isn't a marginal gain; at unchanged per-token pricing, it cuts your token spend on intelligence roughly in half (about 53%). This allows companies to deploy agents in domains where the token cost was previously prohibitive, such as real-time log analysis or automated legal discovery across massive PDF corpora.
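The cost claim is simple arithmetic. The sketch below assumes each of the 10,000 daily calls consumes as many tokens as one SWE-bench Pro task in the chart (91k for 5.2, 43k for 5.3) and that both models share a flat per-token price; the $10-per-million figure is a placeholder, not a published rate.

```python
def daily_token_cost(calls_per_day: int, tokens_per_call: int, usd_per_million: float) -> float:
    """Daily spend for an agent fleet, assuming a flat per-token price."""
    return calls_per_day * tokens_per_call * usd_per_million / 1_000_000

# Token counts per task come from the SWE-bench Pro chart; the price is hypothetical.
PRICE_PER_MILLION = 10.0  # placeholder USD per million tokens
old = daily_token_cost(10_000, 91_000, PRICE_PER_MILLION)
new = daily_token_cost(10_000, 43_000, PRICE_PER_MILLION)
savings = 1 - new / old
print(f"5.2: ${old:,.0f}/day  5.3: ${new:,.0f}/day  savings: {savings:.1%}")
# 5.2: $9,100/day  5.3: $4,300/day  savings: 52.7%
```

Because the price cancels out of the ratio, the saving is 1 − 43/91 ≈ 52.7% at any flat price; if 5.3 were billed at a higher rate per token, the saving would shrink accordingly.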

Furthermore, the six-point accuracy gain (from 58% to 64%) on the "Pro" version of the benchmark is significant because SWE-bench Pro includes issues from actual enterprise repositories with complex dependencies. Codex 5.3 demonstrated an uncanny ability to "read" the existing codebase structure before writing a single line of code, bringing the "hallucination-to-working-code" ratio to the lowest level we have seen. It doesn't just guess which file to edit; it maps the dependency graph of the entire project into its context window, ensuring that a change in utils.js doesn't break a component in Page.tsx.
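Checking whether a change in utils.js can break Page.tsx is a reachability question on the project's import graph. A minimal sketch, assuming the imports have already been extracted into a dictionary; the file layout and the `affected_files` helper are illustrative, and this says nothing about how Codex represents the graph internally:

```python
from collections import deque
from typing import Dict, List, Set

def affected_files(imports: Dict[str, List[str]], changed: str) -> Set[str]:
    """Return every file that directly or transitively imports `changed`.

    `imports` maps each file to the files it imports directly.
    """
    # Invert the graph so we can walk from a file to the files that import it.
    importers: Dict[str, List[str]] = {}
    for src, deps in imports.items():
        for dep in deps:
            importers.setdefault(dep, []).append(src)

    # Breadth-first search over importers, visiting each file once.
    seen: Set[str] = set()
    queue = deque([changed])
    while queue:
        current = queue.popleft()
        for parent in importers.get(current, []):
            if parent not in seen:
                seen.add(parent)
                queue.append(parent)
    return seen

# Hypothetical project layout.
project = {
    "Page.tsx": ["Header.tsx", "utils.js"],
    "Header.tsx": ["utils.js"],
    "Footer.tsx": [],
    "App.tsx": ["Page.tsx", "Footer.tsx"],
}
print(sorted(affected_files(project, "utils.js")))
# ['App.tsx', 'Header.tsx', 'Page.tsx']
```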

II. Mid-Task Steering: The New Developer Superpower

The differentiator that has the engineering community, and even Clawdbot creator Peter Steinberger, talking is Mid-Task Steering.

Until now, agentic coding was a "black box" process. You gave a prompt, watched the spinner, and hoped the result didn't hallucinate a recursive loop. GPT-5.3 Codex changes the interface from a "Submit" button to a "Steering Wheel."

The "Vibe Coding" Reality

In our testing, we found that Codex 5.3 allows for real-time intervention during massive million-token autonomous runs. This is achieved through a new "Interrupt-and-Steer" protocol. If the model starts building a UI component that doesn't quite match your mental model—perhaps it's using a tabbed interface when you wanted a sidebar—you can "nudge" it without losing the state of the entire project. The model pauses, ingests the correction, and re-plans the remaining steps instantly.

"I don't let Claude code on my codebase anymore. It's all Codex," Steinberger noted during a recent technical roundtable, citing the reduced bug-rate during long-horizon tasks. While there is a debate between the "Cerebral Depth" of Opus 4.6 and the "Tactical Reliability" of Codex 5.3, the ability to steer is a massive UX moat for OpenAI. For developers, this means the difference between being a "Prompt Engineer" and a "System Orchestrator." You aren't just giving orders; you're pair-programming with a machine that respects your creative veto.

This level of control is particularly vital when dealing with legacy migrations. In one test case, we had Codex migrate a 2018-era React codebase to Next.js 15. When it initially reached for a legacy routing pattern, a single-sentence intervention redirected it to the App Router's app directory structure, and it correctly re-mapped 400+ files in a single, steered session. That is the power of a model that actually listens mid-thought.
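Much of the file re-mapping in a move to the App Router is mechanical path translation. A minimal sketch of that part, assuming the legacy pattern was Next.js's `pages/` directory; `pages_to_app` is a hypothetical helper, and it ignores everything that cannot be mapped by path alone, such as data-fetching functions and the contents of `_app`/`_document`:

```python
from pathlib import PurePosixPath
from typing import Optional

def pages_to_app(path: str) -> Optional[str]:
    """Map a pages/ route file to its app/ directory location.

    Returns None for special files (_app, _document) that have no 1:1
    equivalent and are usually folded into app/layout by hand.
    """
    p = PurePosixPath(path)
    rel = p.relative_to("pages")
    if rel.stem.startswith("_"):
        return None
    is_api = rel.parts[0] == "api"
    # pages/index.js -> app/page.js ; pages/about.js -> app/about/page.js
    route_dir = rel.parent if rel.stem == "index" else rel.parent / rel.stem
    # API routes become route handlers; everything else becomes a page.
    filename = ("route" if is_api else "page") + p.suffix
    return str(PurePosixPath("app") / route_dir / filename)

for f in ["pages/index.js", "pages/blog/[slug].tsx", "pages/api/hello.ts", "pages/_app.js"]:
    print(f, "->", pages_to_app(f))
```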

III. The Physical Bridge: From STL to Solid Reality

Perhaps the most impressive demonstration of Codex 5.3's agentic reasoning wasn't in a web browser but in a 3D printer simulation.

To test cross-domain mastery, we tasked the model with modifying a retail simulation to accept STL (Stereolithography) files—the literal blueprints of the physical world—and generate the corresponding G-code tool paths autonomously.
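Turning an STL mesh into tool paths starts with slicing: intersecting every triangle with a horizontal plane at each layer height. A minimal sketch of that first step, assuming the STL has already been parsed into vertex tuples; the helper names are hypothetical, and a real slicer would go on to join the segments into closed loops, offset them for nozzle width, and emit G1 moves.

```python
from typing import List, Optional, Tuple

Vec3 = Tuple[float, float, float]
Triangle = Tuple[Vec3, Vec3, Vec3]
Point2 = Tuple[float, float]
Segment = Tuple[Point2, Point2]

def slice_triangle(tri: Triangle, z: float) -> Optional[Segment]:
    """Return the XY segment where the plane Z = z cuts a triangle, if any."""
    points: List[Point2] = []
    for i in range(3):
        a, b = tri[i], tri[(i + 1) % 3]
        da, db = a[2] - z, b[2] - z
        # An edge crosses the plane only if its endpoints lie strictly on opposite sides.
        if (da < 0 < db) or (db < 0 < da):
            t = da / (da - db)  # fraction of the way from a to b where Z == z
            points.append((a[0] + t * (b[0] - a[0]), a[1] + t * (b[1] - a[1])))
    # Vertices lying exactly on the plane are skipped in this sketch.
    return (points[0], points[1]) if len(points) == 2 else None

def slice_mesh(triangles: List[Triangle], z: float) -> List[Segment]:
    """Collect the cross-section segments of a whole mesh at height z."""
    segments: List[Segment] = []
    for tri in triangles:
        seg = slice_triangle(tri, z)
        if seg is not None:
            segments.append(seg)
    return segments

tri = ((0.0, 0.0, 0.0), (2.0, 0.0, 0.0), (0.0, 0.0, 2.0))
print(slice_mesh([tri], z=1.0))
# [((1.0, 0.0), (0.0, 0.0))]
```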

The Benchy Benchmark

In a "WOW" moment for the Otherworlds Intelligence Unit, the model didn't just write the code; it understood the physics. To verify this, we used a virtual environment where the model had to "debug" a failed 3D print. It correctly identified that a lack of cooling for an overhang was causing structural failure and automatically modified the G-code to include dynamic fan speed adjustments. It implemented:

- Brim Generation: For better bed adhesion on small-contact-point models.

- Cross-Hatch Infill: For structural integrity that balances speed and strength.

- Dynamic Z-Axis Coordination: Printing a "Benchy" (the 3D printer world's equivalent of a benchmark) with realistic nozzle movement and optimized retraction speeds.
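The fan fix described above maps onto standard Marlin-style G-code, where `M106 S<0-255>` sets the part-cooling fan speed and `M107` turns the fan off. A minimal sketch, assuming overhang layers have already been detected; `add_overhang_cooling` and its default fan values are hypothetical:

```python
from typing import List, Optional, Set

def add_overhang_cooling(layers: List[List[str]], overhang_layers: Set[int],
                         base_fan: int = 128, overhang_fan: int = 255) -> List[str]:
    """Flatten per-layer G-code, raising the part-cooling fan on overhang layers.

    Fan values use the 0-255 range of M106's S parameter.
    """
    out: List[str] = []
    current: Optional[int] = None
    for index, layer in enumerate(layers):
        target = overhang_fan if index in overhang_layers else base_fan
        if target != current:  # emit M106 only when the speed actually changes
            out.append(f"M106 S{target}")
            current = target
        out.extend(layer)
    out.append("M107")  # fan off once the print is finished
    return out

layers = [["G1 X0 Y0 Z0.2"], ["G1 X10 Y0 Z0.4"], ["G1 X10 Y10 Z0.6"]]
print("\n".join(add_overhang_cooling(layers, overhang_layers={1})))
```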

Cross-Domain Logic: 3D Printing Sim Evals

- G-Code Logic: 96 vs. 88

- Physics Accuracy: 92 vs. 91

- Toolpath Fluidity: 98 vs. 82

This confirms that Codex 5.3 isn't just a "chatbot for code." It is a spatial reasoner that can map abstract logic into physical execution. By bridging the "Sim-to-Real" gap, OpenAI is signaling that Codex isn't just for software engineers; it's for mechanical engineers, architects, and industrial designers who need to translate digital intent into physical reality. The ability to reason about G-code—a language of movements and temperatures—is a testament to the model's multi-modal latent space.

The Hidden AI War

Nobody Is Telling You About

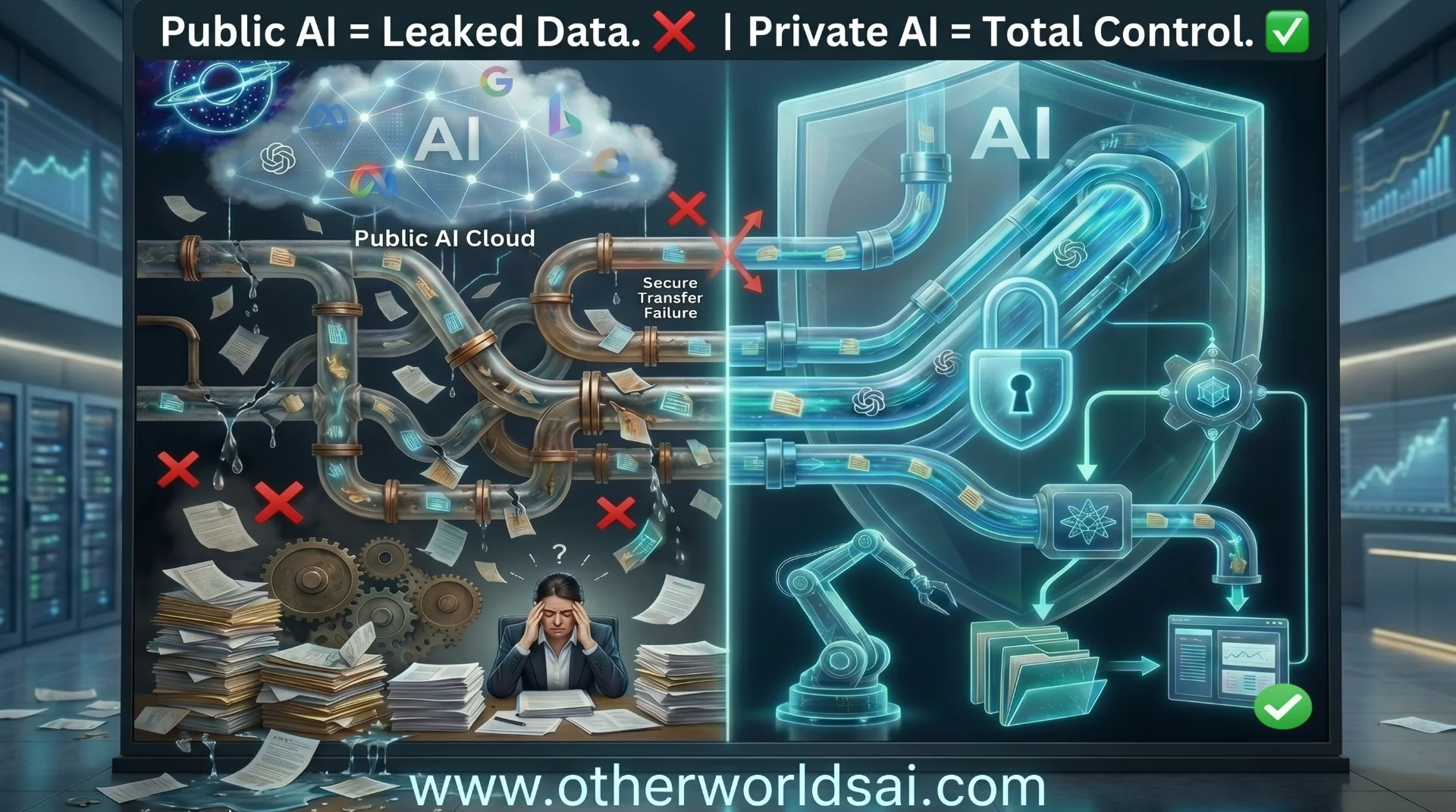

Our latest documentary deep-dive into the geopolitical struggle for machine intelligence dominance. Explore the two paths of AI development: open source vs. closed architecture.

IV. Aesthetic Intelligence: Beyond the Logic Gate

A persistent critique of OpenAI models has been their "clinical" or "boring" UI generation. When we tasked Codex 5.3 and 5.2 with building the landing page for Quiet KPI, the results signaled a new era of aesthetic maturity.

The Intention Gap

In our labs, we observed that Codex 5.3 recognized underspecified prompts and filled them with sensible, high-conversion defaults. For example, when asked to "build a pricing table," the model didn't just generate HTML tags. It automatically displayed yearly plans as a discounted monthly price—a marketing best practice that wasn't in the prompt—and added subtle hover effects that guide the user's eye toward the "Recommended" tier.
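Showing a yearly plan as a discounted monthly price is a small calculation. A sketch with hypothetical prices ($20 billed monthly, $192 billed yearly); `yearly_as_monthly` is an illustrative helper, not part of any generated site:

```python
from typing import Tuple

def yearly_as_monthly(monthly_price: float, yearly_price: float) -> Tuple[float, float]:
    """Return (effective monthly price on the yearly plan, discount vs. monthly billing)."""
    effective = yearly_price / 12
    discount = 1 - effective / monthly_price
    return round(effective, 2), round(discount, 4)

# Hypothetical plan prices.
effective, discount = yearly_as_monthly(monthly_price=20.0, yearly_price=192.0)
print(f"${effective:.2f}/mo billed yearly (save {discount:.0%})")
# $16.00/mo billed yearly (save 20%)
```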

- Codex 5.2: Functional, but lacked the "Glassy Card" and "Soft SaaS" aesthetic requested. It often defaulted to standard Bootstrap-like grid systems that felt dated for a 2026 audience.

- Codex 5.3: Delivered a high-fidelity, interactive site with month-over-month change indicators and clear, intentional pricing benefit lists. It even suggested a "Dark Mode" toggle based on the context of the user's previous aesthetic choices.

Aesthetic intelligence is no longer a "nice to have." As models become the primary architects of our digital interfaces, their ability to "vibe" with human design preferences is as critical as their ability to compile. We are seeing Codex develop a form of "Design Thinking"—an internal model of what looks professional, what builds trust, and what drives user engagement. This shift allows non-designers to ship products that look like they were built by a boutique agency, further compressing the time between a concept and a market-ready asset. For the enterprise, this means a significant reduction in the "Design-to-Code" friction that traditionally slows down product launches.


V. Terminal Bench & OS World: The Operating System Agent

The true test of an agent is its ability to "live" in an environment. While Claude Opus 4.6 excels in its specialized "Co-work" environment, GPT-5.3 Codex is a generalist designed to control your entire machine.

The OS World Surge

In the OS World benchmark—which measures a model's ability to navigate buttons, windows, and tabs in an actual operating system—Codex 5.3 nearly doubled the score of its predecessor.

Autonomous Control: OS World Score

- Codex 5.2: 32.4

- Codex 5.3: 64.7

This 64.7 score represents a model that can "read" where a button is on a screen and execute multi-step tasks within a Windows or macOS environment with professional reliability. But the real breakthrough is in the Terminal Bench accuracy.

Master of the Shell

While many models can write a grep or find command, Codex 5.3 demonstrated a deep understanding of complex shell environments, including Docker, Kubernetes (k8s), and even obscure legacy bash scripts. In our Terminal Bench tests, it successfully diagnosed a Docker container networking issue by autonomously running netstat, tracing the local port bindings, and updating the docker-compose.yaml file to resolve a port conflict. It didn't just write the command; it reflected on the output of each command to inform the next step.
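The port-conflict diagnosis follows an observe-then-act pattern: read what is bound (the netstat output), compare it with what the compose file requests, then pick a free port. A minimal sketch, assuming the compose `ports:` entries are already loaded as strings; `find_port_conflicts` and `next_free_port` are hypothetical helpers, and port ranges such as "8000-8010:8000-8010" are not handled:

```python
from typing import Dict, List, Set

def find_port_conflicts(services: Dict[str, List[str]],
                        ports_in_use: Set[int]) -> Dict[str, List[int]]:
    """Return, per service, the host ports it requests that are already taken.

    `services` mirrors short-syntax `ports:` entries from a compose file
    ("HOST:CONTAINER" or "IP:HOST:CONTAINER"); `ports_in_use` is whatever
    netstat (or ss) reported as bound on the host.
    """
    conflicts: Dict[str, List[int]] = {}
    claimed: Set[int] = set(ports_in_use)
    for name, mappings in services.items():
        for mapping in mappings:
            parts = mapping.split(":")
            if len(parts) < 2:
                continue  # container-only port: Docker picks a free host port
            host = int(parts[-2])
            if host in claimed:
                conflicts.setdefault(name, []).append(host)
            claimed.add(host)  # later services also collide with this one
    return conflicts

def next_free_port(start: int, taken: Set[int]) -> int:
    """Smallest port >= start that is not in `taken`."""
    port = start
    while port in taken:
        port += 1
    return port

services = {"web": ["8080:80"], "api": ["3000:3000"]}
in_use = {3000}  # e.g. a local dev server already bound to 3000
print(find_port_conflicts(services, in_use))  # {'api': [3000]}
print(next_free_port(3000, in_use | {8080}))  # 3001
```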

This "Observe-Act-Reflect" loop is what makes Codex the most capable operating system agent currently available. It bridges the gap between being a "coding assistant" and being a "System Administrator AI." For companies managing massive cloud infrastructures, this means agents can now handle routine server maintenance, security patching, and scaling operations with minimal human oversight. We are moving from "Code as Service" to "Operations as Service."

VI. Strategy: Deploying for the Codex Era

If you are an engineering lead or a CTO, the "Opus vs. Codex" debate is healthy, but the deployment strategy should be clear:

- Use GPT-5.3 for Implementation Speed: When you need to ship quickly with fewer tokens and clear ROI, Codex is the efficiency king. Its ability to solve complex SWE-bench Pro tasks with 50% fewer tokens is a game-changer for cloud-computing budgets.

- Leverage Mid-Task Steering: Don't treat the agent as a "hands-off" tool. Actively steer it through complex architectural shifts. This reduces discovery-to-fix cycles from hours to seconds.

- Integrate "Intention-Aware" Prompts: If you aren't a seasoned developer, Codex 5.3 will bridge the "underspecification" gap for you. It understands the "vibe" of modern SaaS design even if you only prompt for basic functionality.

- Deploy for Operational Stability: Use its OS World capabilities to automate the "boring" parts of your DevOps stack: log rotation, dependency updates, and environment parity checks.

The Developer's New Workflow: A CTO Checklist

To maximize the value of Codex 5.3, we recommend the following audit for every engineering org:

- Token Audit: Transition high-volume background tasks from 5.2 to 5.3 to realize immediate cost savings.

- Steering Training: Train your senior engineers to use the "Interrupt-and-Steer" protocol rather than re-prompting from scratch.

- Simulation Integration: If you deal with physical manufacturing or CAD data, begin testing the STL-to-G-code pipeline for automated prototyping.

- Agentic Security: Ensure that Codex agents have scoped bash/shell permissions, as their ability to navigate your OS is now significantly more powerful and autonomous.
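One simple way to scope shell access is to vet every command an agent proposes before it reaches a shell. A minimal sketch; the allowlist and `vet_command` are hypothetical examples to adapt, and a command allowlist is not a sandbox on its own (an allowed `cat` can still read sensitive files), so pair it with OS-level isolation such as a restricted user or container:

```python
import shlex
from typing import FrozenSet, List

# Hypothetical allowlist for a read-mostly maintenance agent; tune per task.
ALLOWED_COMMANDS: FrozenSet[str] = frozenset({"ls", "cat", "grep", "tail", "df", "du"})
SHELL_METACHARS = set(";&|`$<>")

def vet_command(command: str) -> List[str]:
    """Return argv if the command is allowed, else raise PermissionError.

    Rejects shell metacharacters so the agent cannot chain or redirect
    commands, and only permits programs on the allowlist. Run the result
    with subprocess.run(argv, shell=False).
    """
    if any(ch in SHELL_METACHARS for ch in command):
        raise PermissionError(f"shell metacharacters not allowed: {command!r}")
    argv = shlex.split(command)
    if not argv or argv[0] not in ALLOWED_COMMANDS:
        raise PermissionError(f"command not on allowlist: {command!r}")
    return argv

print(vet_command("grep -r error /var/log"))
# ['grep', '-r', 'error', '/var/log']
```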

Conclusion: The Infrastructure of Agency

The release of GPT-5.3 Codex, alongside the cerebral depth of Opus 4.6, has finalized the pivot from "Generative AI" to "Agentic Intelligence." We are no longer building software; we are prompting the infrastructure that creates itself. This marks the end of the "Brute-Force" era of LLMs, where winning was just about having the biggest GPU cluster. In the Codex era, the winner is the one with the most efficient reasoning loop.

Whether you are building games from scratch, migrating legacy banking systems, or managing massive enterprise codebases, the tool is now an autonomous colleague. It respects your design intent, reasons about your terminal output, and—most importantly—it learns from its own failures. The question is no longer "Can it build it?" but "How quickly can you steer it toward the future?" The self-improving machine isn't coming; it's already running on your machine.
