Anthropic Hands Claude Code More Control — But Keeps It on a Leash: Inside the New Auto Mode for AI-Powered Development:

Anthropic Moves One Step Closer to Fully Agentic AI with New Claude Code Update:

Introduction: The Vibe Coding Dilemma That's Slowing Down Developers:

For developers leaning on AI tools today, the experience often feels like a frustrating binary choice: babysit every action the model takes, or hand over the wheel entirely and hope for the best. This tension — between control and speed — has become one of the defining friction points of the modern AI-assisted development workflow. And now, Anthropic is making a bold move to resolve it.

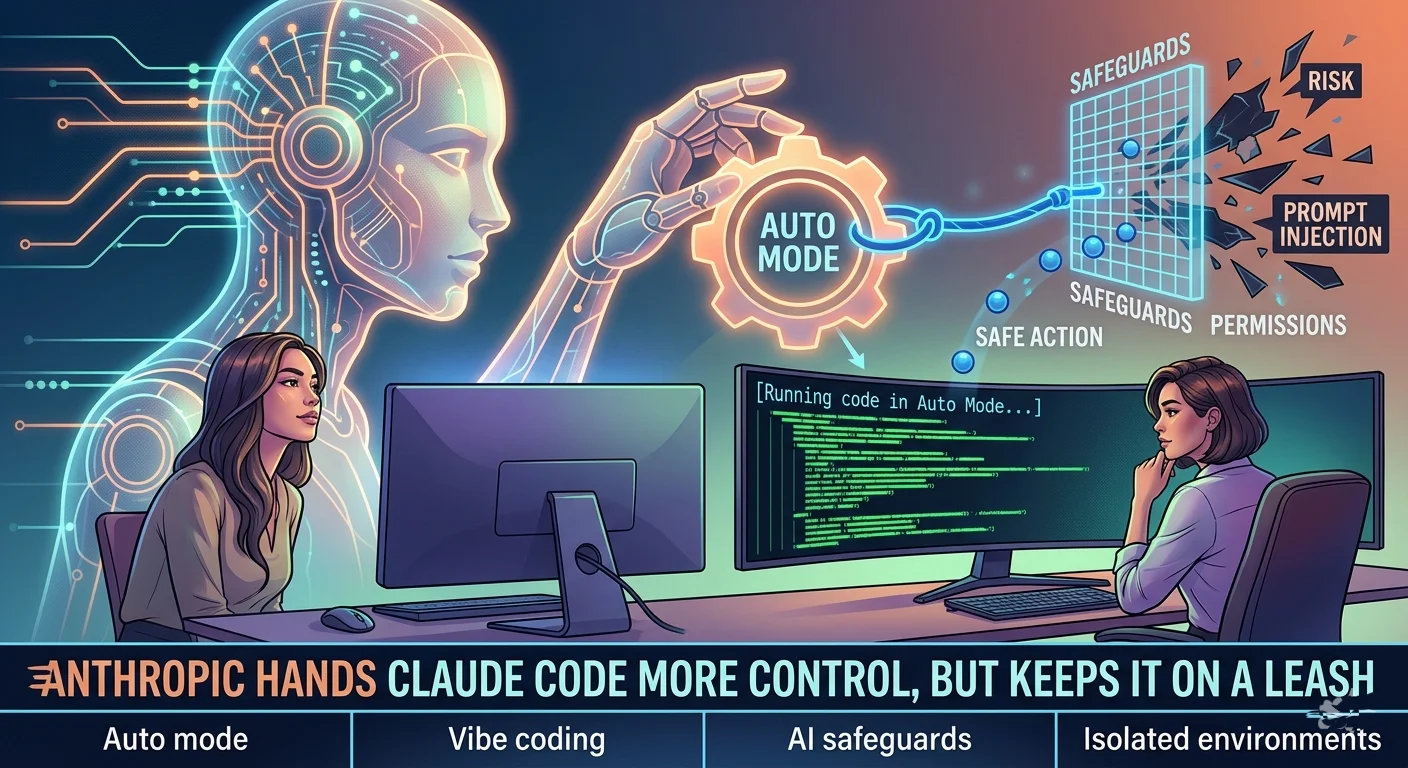

The company's latest update to Claude Code introduces a new feature called 'auto mode,' currently available in research preview, that aims to eliminate the need for constant developer oversight without sacrificing safety. The idea: let the AI itself decide which actions are safe to take autonomously — and which ones require a human in the loop.

It's a significant philosophical shift, and one that puts Anthropic at the leading edge of a fast-moving industry trend toward autonomous, permission-light AI agents. But as with any step toward greater AI autonomy, the details — and the guardrails — matter enormously.

What Is Auto Mode? Claude Code's New Autonomous Decision-Making Layer:

At its core, auto mode is an intelligent safety filter that sits between Claude Code and the actions it takes on your behalf. Before executing any command, the system reviews the action against a set of AI-powered safeguards, checking for two key red flags: behavior the user didn't request, and signs of prompt injection.

Prompt injection — for those unfamiliar — is a type of cyberattack where malicious instructions are hidden inside content that the AI is processing, causing it to take unintended or harmful actions. It's one of the most pressing security concerns in agentic AI systems, and the fact that Anthropic has baked protection against it directly into auto mode is a meaningful design choice.

Actions that pass the safety review proceed automatically without interrupting the developer's workflow. Actions flagged as potentially risky are blocked before they can execute. The result, in theory, is a system that moves at the speed of full automation while maintaining a meaningful layer of human-aligned oversight.
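The review-then-execute flow described above can be pictured with a short sketch. This is a toy illustration only, not Anthropic's implementation: the names `ProposedAction`, `review`, and `run_with_gate`, the `requested_by_user` field, and the keyword-based injection check are all hypothetical stand-ins for safeguards whose real criteria have not been published.

```python
from dataclasses import dataclass
from enum import Enum


class Verdict(Enum):
    ALLOW = "allow"
    BLOCK = "block"


@dataclass
class ProposedAction:
    command: str             # what the agent wants to run
    requested_by_user: bool  # hypothetical: is this within what the user asked for?
    source_text: str         # content the agent was reading when it proposed the action


# Toy stand-in for a real classifier (assumption: the actual check is AI-powered).
INJECTION_MARKERS = ("ignore previous instructions", "disregard the above")


def looks_like_injection(text: str) -> bool:
    lowered = text.lower()
    return any(marker in lowered for marker in INJECTION_MARKERS)


def review(action: ProposedAction) -> Verdict:
    """Check the two red flags named in the article."""
    if not action.requested_by_user:
        return Verdict.BLOCK  # behavior the user didn't request
    if looks_like_injection(action.source_text):
        return Verdict.BLOCK  # signs of prompt injection
    return Verdict.ALLOW


def run_with_gate(actions):
    """Let approved actions through; stop flagged ones before execution."""
    executed, blocked = [], []
    for action in actions:
        if review(action) is Verdict.ALLOW:
            executed.append(action.command)  # a real agent would execute here
        else:
            blocked.append(action.command)
    return executed, blocked
```

The point of the sketch is the placement of the check: every action passes through `review` before anything runs, so a blocked action never reaches execution.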

Think of it as the logical evolution of Claude Code's existing '--dangerously-skip-permissions' flag, which skips permission prompts and leaves every decision to the AI, but now with a protective review layer built on top. Same speed, significantly more guardrails.

The Broader Industry Shift: Autonomous AI Agents Are Becoming the Norm:

Anthropic's auto mode doesn't exist in a vacuum — it's part of a sweeping industry movement toward agentic AI tools that can execute complex, multi-step tasks on a developer's behalf without requiring constant approval. GitHub's Copilot Workspace and OpenAI's Codex-based agents have both pushed in this direction, allowing AI systems to write, test, and even deploy code autonomously.

But Claude Code's auto mode takes the concept a meaningful step further by relocating the permission decision itself. Rather than asking the user to define in advance which actions are allowed, the system dynamically evaluates each action at runtime and decides whether to proceed or pause. The question of 'when to ask for permission' moves from the developer's settings panel to the AI's own judgment.

This shift is subtle but consequential. It represents a move from rule-based autonomy — where developers pre-define boundaries — toward judgment-based autonomy, where the AI applies contextual reasoning to make real-time decisions. For enterprise development teams running complex workflows, this could dramatically reduce the operational overhead of AI-assisted coding.
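The contrast between the two styles can be made concrete with a small sketch. Everything here is hypothetical: `ALLOWED_PREFIXES`, `rule_based_permit`, `judgment_based_permit`, and `toy_evaluator` are illustrative names, and the evaluator is a hard-coded placeholder for the contextual reasoning a model would apply.

```python
from typing import Callable

# Rule-based autonomy: the developer lists permitted commands ahead of time.
ALLOWED_PREFIXES = ("git status", "pytest", "ls")


def rule_based_permit(command: str) -> bool:
    # The answer depends only on the command, never on the task at hand.
    return command.startswith(ALLOWED_PREFIXES)


# Judgment-based autonomy: each action is evaluated at runtime, in context.
def judgment_based_permit(
    command: str, task: str, evaluate: Callable[[str, str], bool]
) -> bool:
    return evaluate(command, task)


def toy_evaluator(command: str, task: str) -> bool:
    # Placeholder for model judgment: deleting the build directory is
    # acceptable only when the user's task is about cleaning up.
    if command.startswith("rm -rf build"):
        return "clean" in task.lower()
    return True
```

Under these assumptions, `rm -rf build` is always refused by the static allowlist, while the runtime evaluator permits it for a task like "Clean the build directory" and refuses it for "Fix the login bug". The same command gets different answers depending on context, which is the shift the article describes.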

What Anthropic Hasn't Said: The Transparency Gap Around Safety Criteria:

Here's where thoughtful developers should pump the brakes before going all-in on auto mode: Anthropic has not publicly detailed the specific criteria its safety layer uses to distinguish safe actions from risky ones. That's a significant omission for a feature that is, by design, making consequential decisions without human input.

For individual developers experimenting in sandboxed environments, this ambiguity may be tolerable. For enterprise teams considering deploying auto mode in more sensitive workflows, the lack of documented decision logic is a legitimate concern. Before broad adoption, developers will rightly want to understand: What counts as 'risky'? How does the system handle edge cases? Can the safety layer itself be manipulated or bypassed?

Anthropic has acknowledged these open questions and is actively engaging with the developer community for feedback during the research preview phase — which is precisely why the feature carries that label rather than being released as a finished product. The 'research preview' designation signals that this is a living experiment, not a hardened production feature.

Part of a Bigger Picture: Claude Code Review and Dispatch for Cowork:

Auto mode doesn't arrive as a standalone announcement — it's the latest in a series of capability expansions that are rapidly positioning Claude Code as a comprehensive AI development platform rather than just a coding assistant.

Anthropic recently launched Claude Code Review, an automatic code reviewer designed to catch bugs before they reach the codebase. Where traditional code review relies on human colleagues to spot errors, logic flaws, and security vulnerabilities, Claude Code Review applies AI analysis to flag issues early — reducing the cognitive load on engineering teams and accelerating the review cycle.

Alongside that, Dispatch for Cowork enables users to delegate tasks directly to AI agents, which then handle the work autonomously on their behalf. Together, these three features — auto mode, code review, and Cowork dispatch — paint a picture of an AI development ecosystem that is moving decisively toward full task delegation, not just AI-assisted suggestion.

The cumulative effect is a platform that doesn't just help developers write code faster — it increasingly handles the workflow of software development end-to-end, from writing and reviewing code to managing tasks and executing commands autonomously.

Who Gets Access and How to Use It Safely: Rollout Details:

Auto mode is rolling out to Enterprise and API users in the coming days, with Anthropic currently limiting the feature to two of its most capable models: Claude Sonnet 4.6 and Claude Opus 4.6. The restriction is plausible, since autonomous decision-making of this kind leans heavily on reasoning capability and contextual judgment.

Critically, Anthropic recommends using auto mode exclusively in isolated, sandboxed environments — setups that are deliberately kept separate from production systems. The rationale is straightforward: if auto mode makes a mistake or encounters an edge case its safety layer isn't equipped to handle, the blast radius should be contained. Running it in a production environment at this stage would be inadvisable.

For developers eager to experiment, the research preview phase offers a rare opportunity to shape the feature's development. Anthropic is actively soliciting feedback, which means early adopters have real influence over how the safety criteria are refined, what edge cases get addressed, and how the feature evolves before its full release.

Key Takeaways: What You Need to Know About Claude Code Auto Mode:

Here's a quick summary of the confirmed details:

- Feature name: Auto mode, now in research preview.
- Core function: AI reviews each action before execution, blocking risky behavior and prompt injection attempts.
- Built on: Claude Code's existing '--dangerously-skip-permissions' flag, now with a safety layer.
- Key protection: Guards against prompt injection and unrequested actions.
- Availability: Rolling out to Enterprise and API users imminently.
- Supported models: Claude Sonnet 4.6 and Claude Opus 4.6 only.
- Recommended use: Sandboxed, isolated environments, not production systems.
- Open question: Anthropic has not published the specific criteria for its safety decision logic.

Conclusion: A Smart Bet on AI Autonomy — With Eyes Open:

Anthropic's auto mode is a genuinely thoughtful attempt to solve one of the most persistent frustrations in AI-assisted development: the constant interruption of human approval loops. By delegating the permission decision to the AI itself — backed by real-time safety screening — it opens the door to a dramatically more fluid development experience without abandoning oversight entirely.

The feature's biggest strength is also its most interesting design choice: rather than asking developers to pre-define what the AI can and can't do, it lets the AI make that call dynamically. That's a bet on the quality of Claude's judgment — and on the robustness of its safety layer — that will be tested in earnest during the research preview phase.

For now, the smartest approach is cautious experimentation: test auto mode in sandboxed environments, pay close attention to what it blocks and what it allows, and contribute feedback to help shape its evolution. The future of autonomous AI development is being written right now, and developers who engage early will have a hand in determining how it unfolds.