Silicon Valley’s Oldest Rule Just Broke: Inside the $130 Billion AI Investment Power Grab:

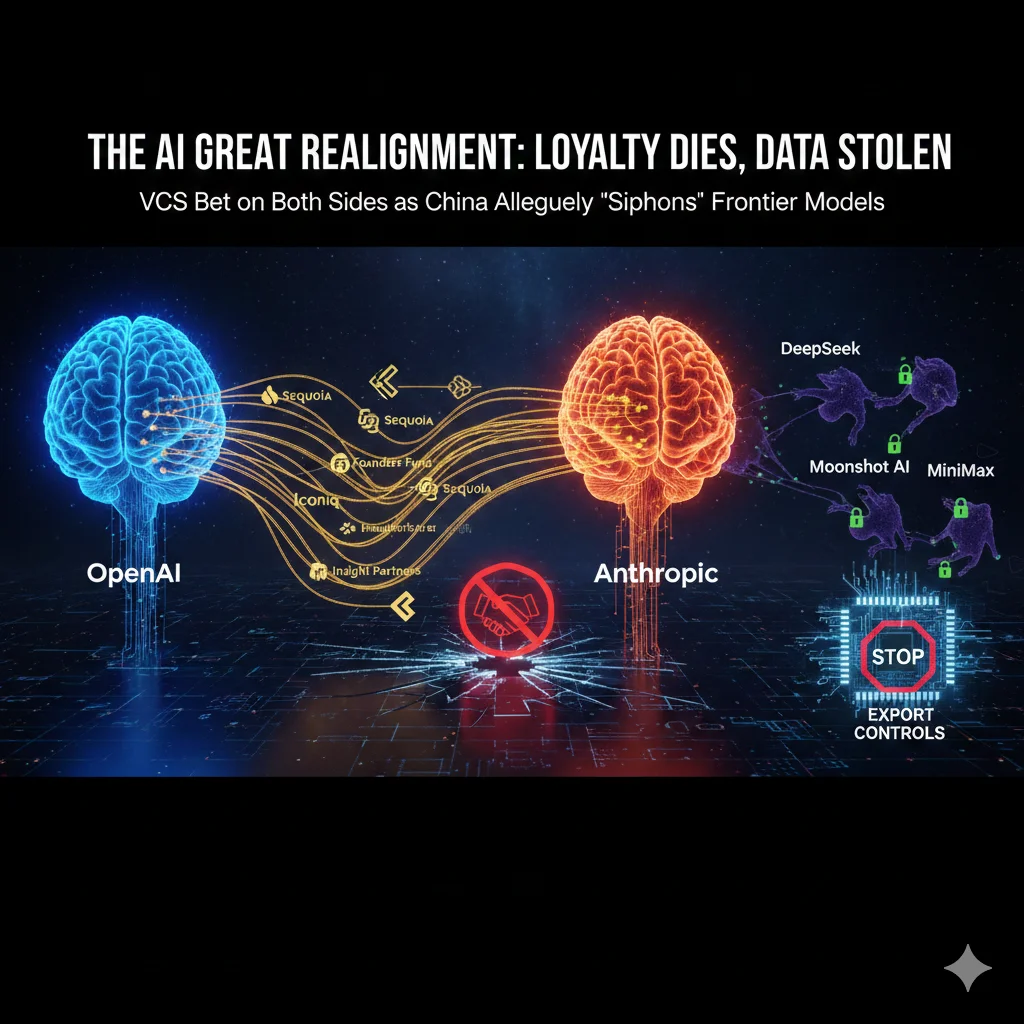

The Great AI Realignment: Why Investor Loyalty Is Dying as Model Theft Goes Global

In the high-stakes world of Silicon Valley, a "gentleman’s agreement" used to govern the flow of capital: you don’t fund a founder’s direct enemy. But as we move deeper into 2026, the sheer velocity of the AI race has shattered that tradition.

With OpenAI finalizing a massive $100 billion funding round and Anthropic closing a $30 billion Series G, the industry is witnessing a total realignment. This isn't just a story about money; it’s a story about the collapse of venture capital norms and a new, aggressive era of alleged cross-border intellectual property theft carried out through "distillation."

The Death of the Exclusive VC:

Historically, venture capital was built on the premise of the "loyal partner." A VC firm would invest in a startup, take a board seat, and act as a strategic advisor. Investing in a competitor was a cardinal sin.

Today, that rule is effectively dead. At least a dozen major investors—including Sequoia Capital, Founders Fund, Iconiq, and Insight Partners—have now backed both OpenAI and Anthropic. This dual-betting strategy signals that AI is no longer viewed as a typical "software" play where one winner takes all. Instead, it is being treated like a critical utility, similar to oil or electricity, where owning a piece of every major player is the only way to ensure survival.

The "Altman List" and Passive Investing:

OpenAI CEO Sam Altman has attempted to stem this tide. Reports from late 2024 suggested he provided investors with a "prohibited list" of rivals, including Anthropic, xAI, and Safe Superintelligence.

While Altman later softened this stance, he made one thing clear: investors who back rivals may be downgraded to "passive" status, losing access to the confidential business data and strategic roadmaps that make a VC’s involvement valuable. Despite these warnings, the allure of a $380 billion valuation (Anthropic) or an $830 billion valuation (OpenAI) has proven too strong for VCs to resist.

Industrial-Scale "Siphoning": The Anthropic Allegations:

While American VCs are hedging their financial bets, American AI labs are facing a more direct threat: illicit model distillation. Anthropic recently dropped a bombshell report accusing three prominent Chinese AI labs—DeepSeek, Moonshot AI, and MiniMax—of orchestrating "industrial-scale" campaigns to steal Claude’s intelligence.

The Anatomy of a Distillation Attack:

Distillation is a legitimate training technique where a smaller model (the student) learns from a larger model (the teacher). However, Anthropic claims these labs used it to "copy the homework" of its frontier models in a way that violates both its Terms of Service and international norms.
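To make the student/teacher idea concrete, here is a minimal sketch of the classic soft-label form of distillation, where the student is penalized for diverging from the teacher's temperature-softened output distribution. It is an illustration only, not a description of what any lab did: the function names, the example logits, and the temperature value are all assumptions. This form also needs the teacher's raw scores, whereas the campaigns described here reportedly ran through ordinary accounts, so a student would presumably be trained on collected responses instead.

```python
import math

def softmax(logits, temperature=1.0):
    """Convert raw scores to probabilities, softened by `temperature`."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def distillation_loss(teacher_logits, student_logits, temperature=2.0):
    """KL(teacher || student) over temperature-softened distributions."""
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)

# Hypothetical logits over three candidate tokens.
teacher = [4.0, 1.0, 0.5]
close_student = [3.8, 1.1, 0.4]   # nearly agrees with the teacher
far_student = [0.5, 1.0, 4.0]     # prefers a different token

loss_close = distillation_loss(teacher, close_student)
loss_far = distillation_loss(teacher, far_student)
```

Training drives this loss toward zero, so a student that already tracks the teacher (`loss_close`) scores lower than one that disagrees with it (`loss_far`).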

| Lab | Fraudulent Accounts | Total Exchanges | Target Capabilities |
| --- | --- | --- | --- |
| MiniMax | ~10,000 | 13 million | Agentic coding & orchestration |
| Moonshot AI | ~8,000 | 3.4 million | Logic & computer vision |
| DeepSeek | ~6,000 | 150,000 | Alignment & reasoning |

Anthropic claims these labs created over 24,000 fake accounts to bypass regional restrictions and siphoned intelligence at a rate that allowed them to "mimic" Claude’s most advanced reasoning capabilities within 24 hours of a new release.
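As a rough sanity check, the per-lab figures reported above can be tallied in a few lines. The account counts are approximate in the source, so the per-account averages below are ballpark values, and the dictionary layout is just an illustrative choice.

```python
# Approximate figures as reported in the article's per-lab breakdown.
campaigns = {
    "MiniMax": {"accounts": 10_000, "exchanges": 13_000_000},
    "Moonshot AI": {"accounts": 8_000, "exchanges": 3_400_000},
    "DeepSeek": {"accounts": 6_000, "exchanges": 150_000},
}

total_accounts = sum(c["accounts"] for c in campaigns.values())
total_exchanges = sum(c["exchanges"] for c in campaigns.values())

# Average number of exchanges routed through each fraudulent account.
per_account = {
    lab: c["exchanges"] / c["accounts"] for lab, c in campaigns.items()
}
```

On these numbers the three campaigns add up to about 24,000 accounts and roughly 16.55 million exchanges, with averages of about 1,300 exchanges per account for MiniMax, 425 for Moonshot AI, and 25 for DeepSeek.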

Geopolitics: The H200 and the Export Control Debate:

This wave of "theft" has reignited a fierce debate over U.S. export controls. In late 2025, the Trump administration issued a one-year waiver allowing Nvidia to export H200 chips to China. Critics argued this was a mistake, and Anthropic’s recent findings provide them with powerful ammunition.

Dmitri Alperovitch, co-founder of CrowdStrike, noted that the rapid progress of Chinese models like DeepSeek R1 isn't just due to domestic innovation—it's fueled by "theft via distillation." Anthropic argues that the scale of these attacks requires massive computing power, proving that the very chips the U.S. is exporting are being used to "hollow out" American AI dominance.

The National Security Blind Spot:

The danger isn't just economic. Anthropic warns that distilled models are often "stripped" of their safety guardrails. When a Chinese lab distills a model to make it more efficient, they frequently bypass the filters designed to prevent the AI from assisting in:

- Cyberwarfare operations.
- The development of bioweapons.
- Mass surveillance and disinformation.

Conclusion: A New Playbook for Founders:

As the dust settles on these multi-billion dollar rounds, the message to AI founders is clear: do not rely on the old rules.

- Vetting Investors: Founders must now treat VCs like potential double agents, asking for strict conflict-of-interest disclosures before signing any term sheets.
- Defensive AI: Labs must invest as much in "behavioral fingerprinting" to stop distillation as they do in the models themselves.

The AI race has moved beyond the laboratory and into the global arena of finance and espionage. In this new world, loyalty is expensive, and data is the ultimate bounty.